HTTP/3 and QUIC: The Future of Web

The Last-Mile Problem

Your company just deployed HTTP/2 across all services. Page loads are 40% faster. Success! Then you check mobile metrics: users on 4G still experience stuttering video calls and slow API responses. The culprit? A single lost packet blocking ALL your HTTP/2 streams, waiting for TCP retransmission. You've hit TCP's fundamental limitation, and HTTP/3 was born to break through it.

In 2013, Google engineers asked a radical question: "What if we built a modern transport protocol from scratch, keeping TCP's reliability but removing its constraints?" The answer was QUIC (originally short for Quick UDP Internet Connections; the IETF standard treats QUIC as a name, not an acronym), and it's now the foundation of HTTP/3. By some estimates, around 25% of all internet traffic now uses QUIC, including roughly 75% of Google Chrome's HTTPS connections. The future isn't just faster; it's fundamentally different.

Let's understand why HTTP/3 is revolutionary and how QUIC achieves what seemed impossible.

TCP's Architectural Constraints

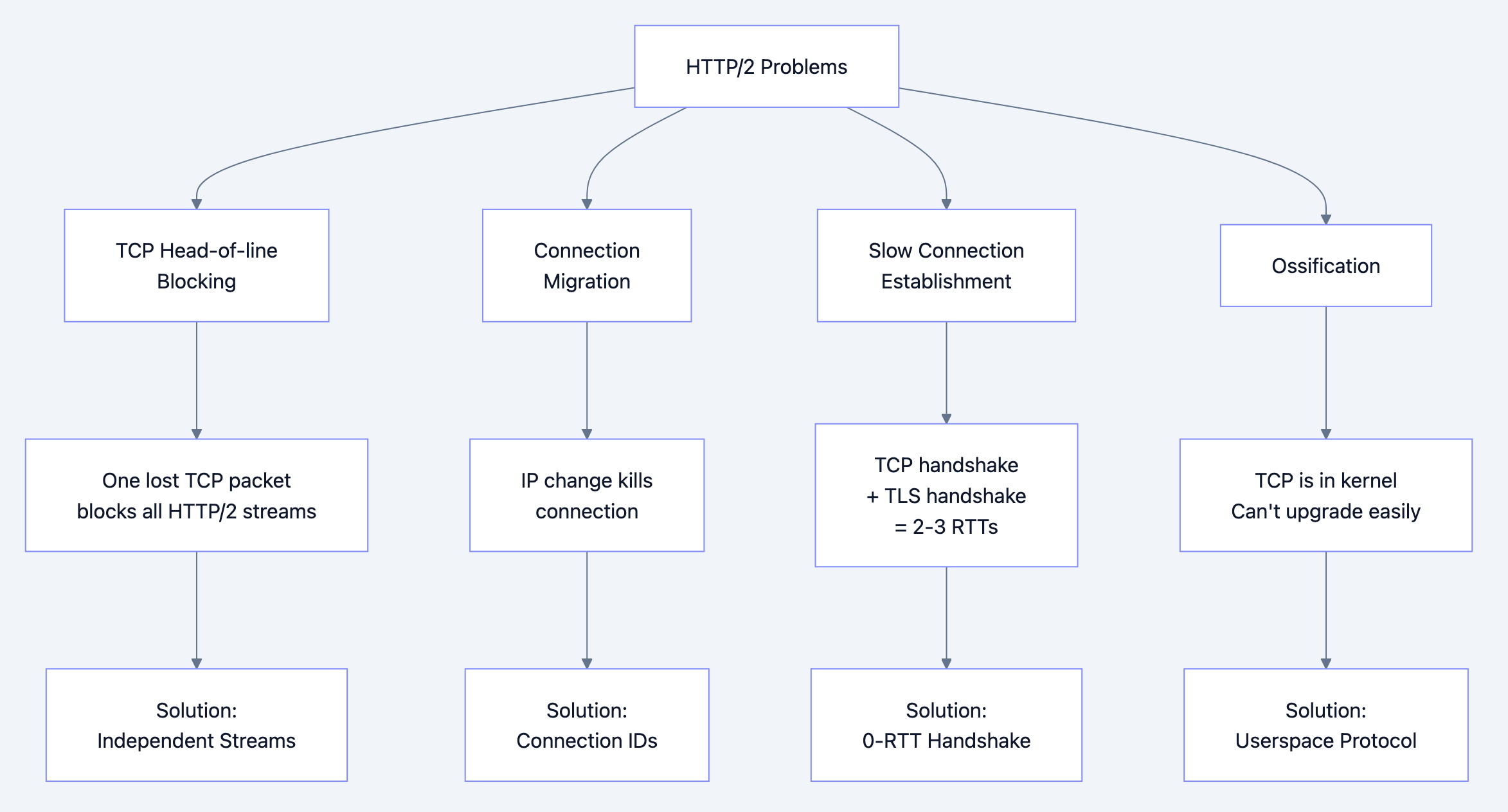

What Problem Does HTTP/3 Solve?

HTTP/2's remaining bottleneck: TCP head-of-line blocking

HTTP/2 solved HTTP-level head-of-line blocking with multiplexing, but introduced a new problem:

```
HTTP/2 Multiplexing (Application Layer):
  Stream 1: [Frame A1][Frame A2][Frame A3]   No blocking
  Stream 3: [Frame B1][Frame B2][Frame B3]   No blocking
  Stream 5: [Frame C1][Frame C2][Frame C3]   No blocking

TCP Layer (Transport):
  [Packet 1][Packet 2][LOST!][Packet 4][Packet 5]
                         ↓
              ALL STREAMS BLOCKED! ❌
              Waiting for retransmission...
```

The core problems:

- TCP head-of-line blocking: One lost packet blocks all streams

- Connection migration: Can't survive IP address changes (mobile networks)

- Ossification: TCP is in kernel, impossible to upgrade

- Handshake latency: TCP + TLS = 2-3 RTTs before data transfer

- Slow start: New connections start conservatively

Historical context:

- 1981: TCP standardized (RFC 793)

- 1999: HTTP/1.1 uses TCP effectively

- 2013: Google starts QUIC experiment

- 2015: HTTP/2 hits TCP's limits

- 2021: QUIC standardized (RFC 9000)

- 2022: HTTP/3 standardized (RFC 9114)

- 2024: Roughly 25% of web traffic uses HTTP/3

Why was QUIC needed?

TCP is perfect for single-stream connections (FTP, SSH). For multiplexed protocols (HTTP/2), TCP's ordered delivery guarantee becomes a bug:

- Stream 1 loses a packet → Stream 3 must wait (they're independent!)

- Mobile network switches (WiFi → 4G) → TCP connection dies

- Want to upgrade TCP congestion control → Need OS update (years!)

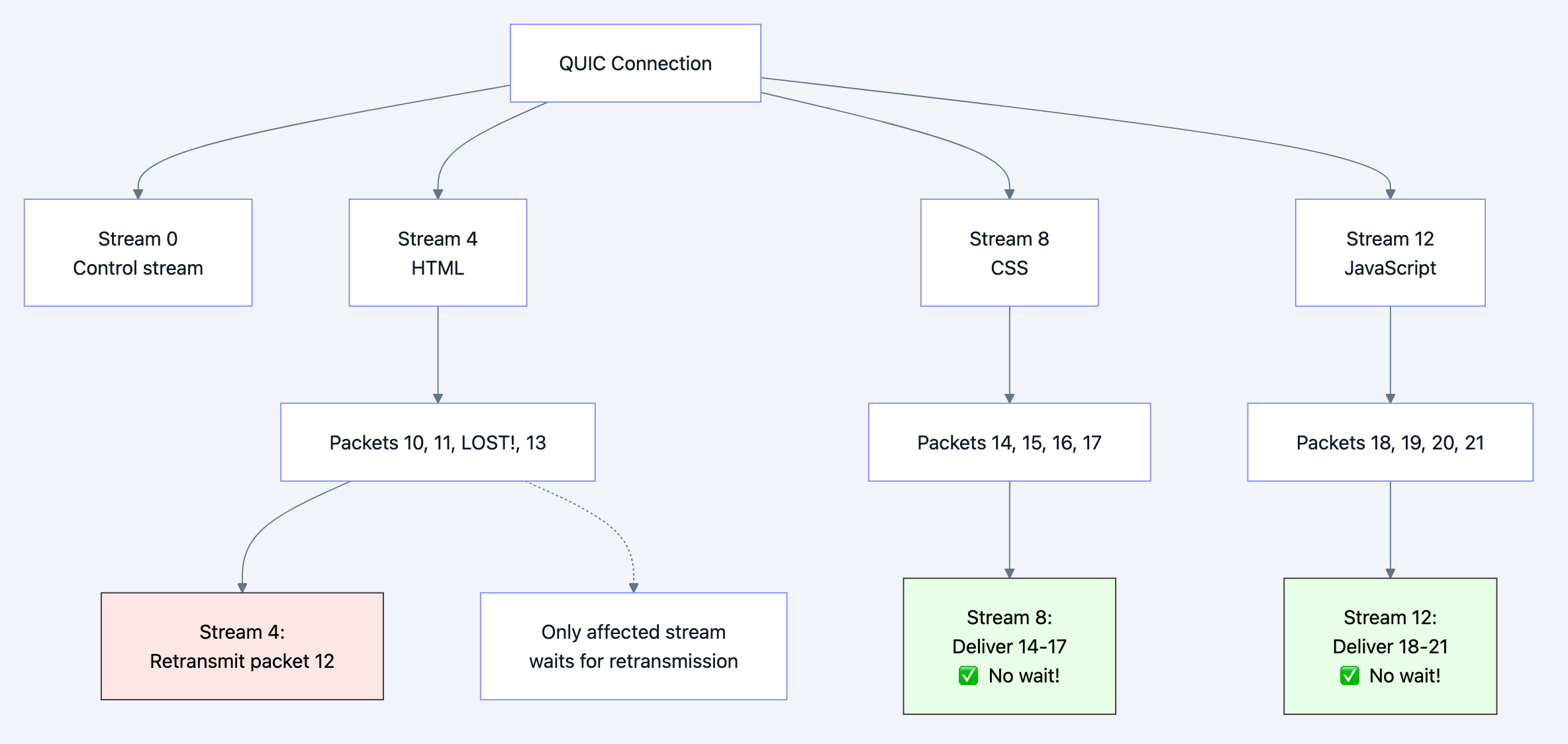


Real-World Analogy

Think of TCP vs. QUIC as an elevator vs. an escalator in a building:

TCP (Elevator):

- All passengers share one car

- Stops for everyone, everyone waits

- If elevator breaks, everyone stuck

- Fixed route, can't change mid-ride

QUIC (Escalator):

- Independent steps (streams)

- One step breaks, others keep moving

- Can switch between escalators mid-ride

- Newer escalators can be installed without replacing building

Just like escalators allow independent movement, QUIC allows independent stream delivery.

QUIC's UDP-Based Revolution

How Does HTTP/3 Solve These Problems?

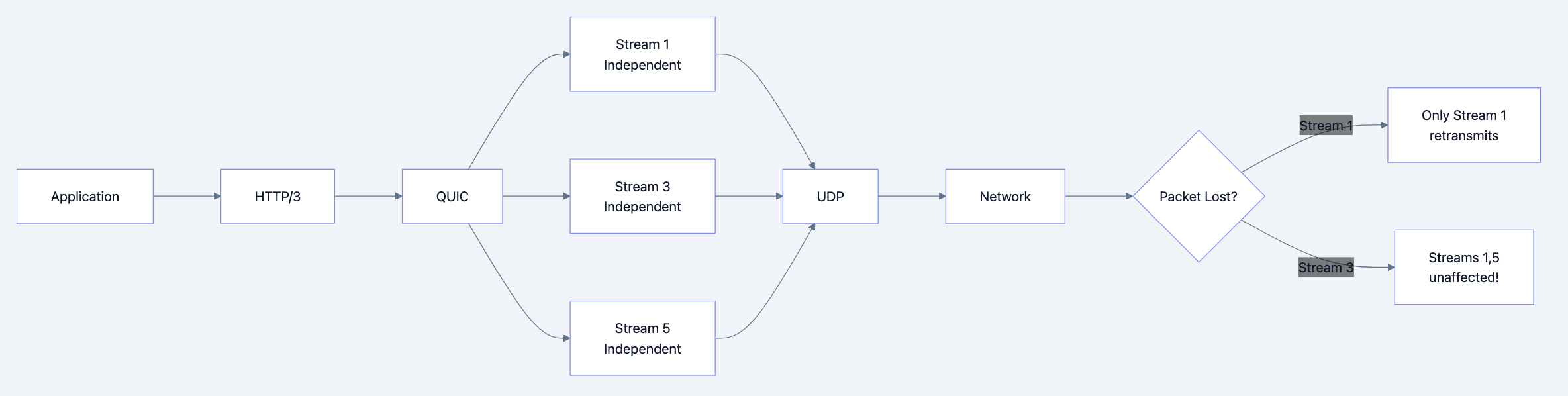

HTTP/3 = HTTP/2 semantics + QUIC transport (instead of TCP)

QUIC's radical innovations:

-

Built on UDP (not TCP)

- Why: UDP has no built-in ordering → QUIC controls it

- Userspace implementation → Fast iteration

- Bypasses TCP ossification

-

Stream independence

- Each stream has its own reliability

- Lost packet blocks only that stream

- Other streams continue unaffected

-

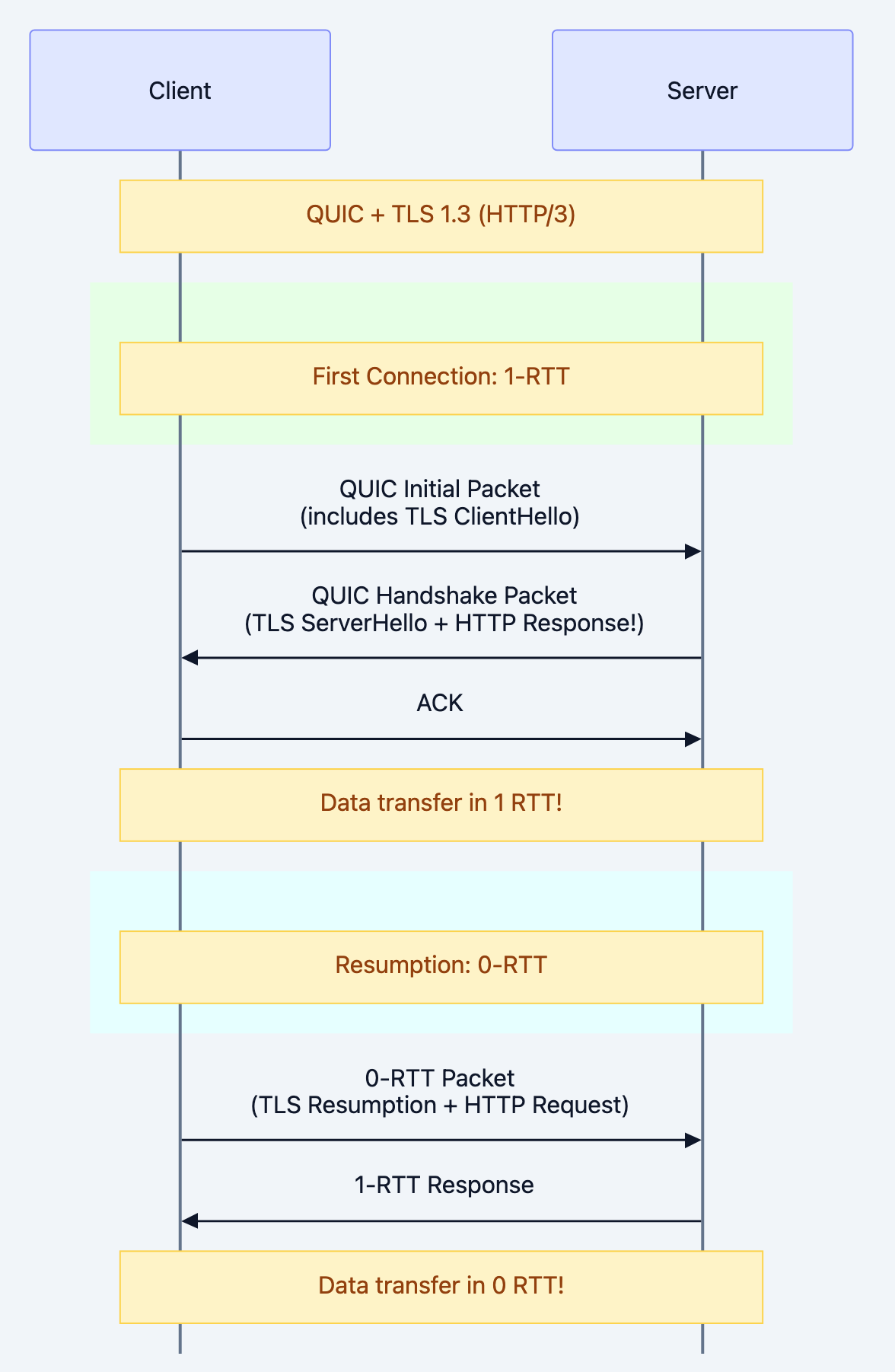

0-RTT connection establishment

- First connection: 1-RTT (vs TCP+TLS: 3-RTT)

- Resume connection: 0-RTT (send data immediately!)

- Massive latency reduction

-

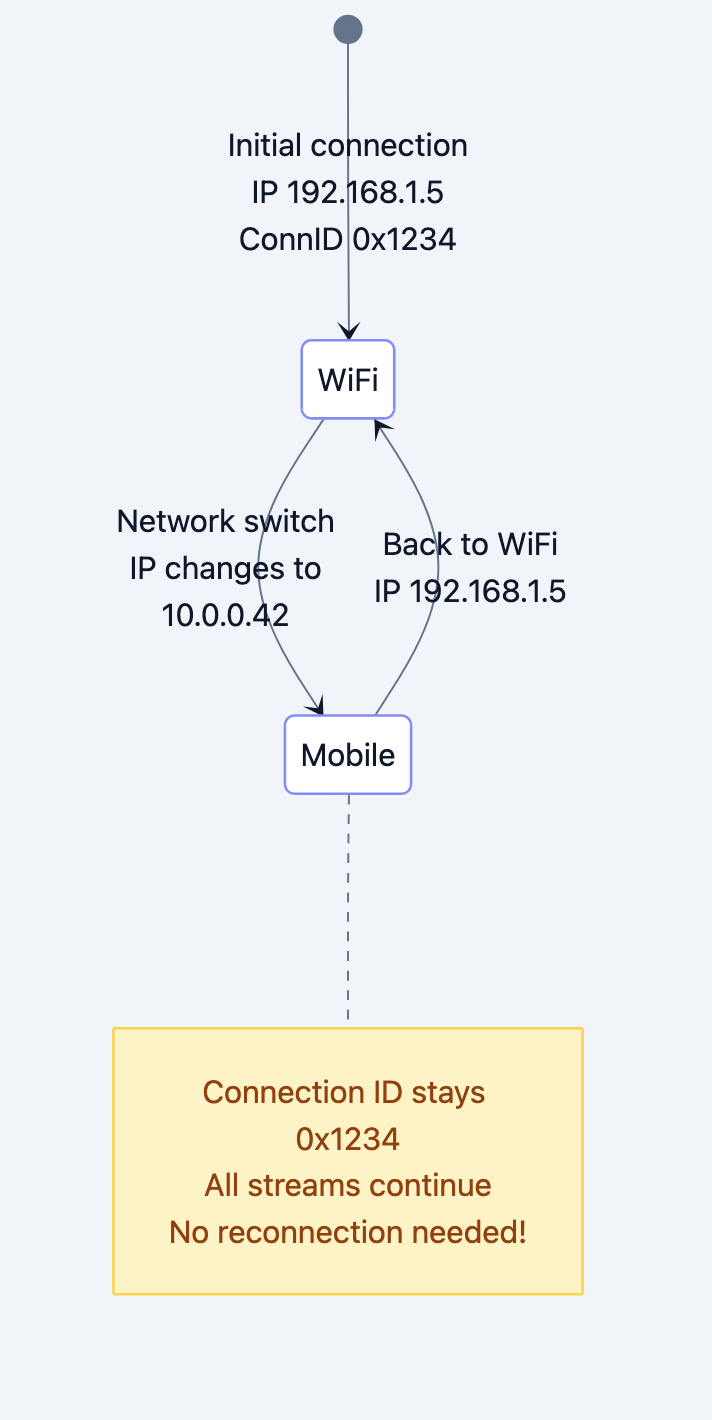

Connection migration

- Connection ID (not 4-tuple: IP, port)

- Switch WiFi → 4G seamlessly

- Mobile-first design

-

Built-in encryption

- TLS 1.3 integrated (not layered)

- Always encrypted

- Security by default


Why this approach wins:

No head-of-line blocking: Streams truly independent

Faster handshakes: 0-RTT for returning visitors

Mobile-friendly: Survives network changes

Rapid evolution: Userspace = quick updates

Always secure: TLS 1.3 mandatory

Understanding QUIC's Architecture

QUIC Connection Establishment

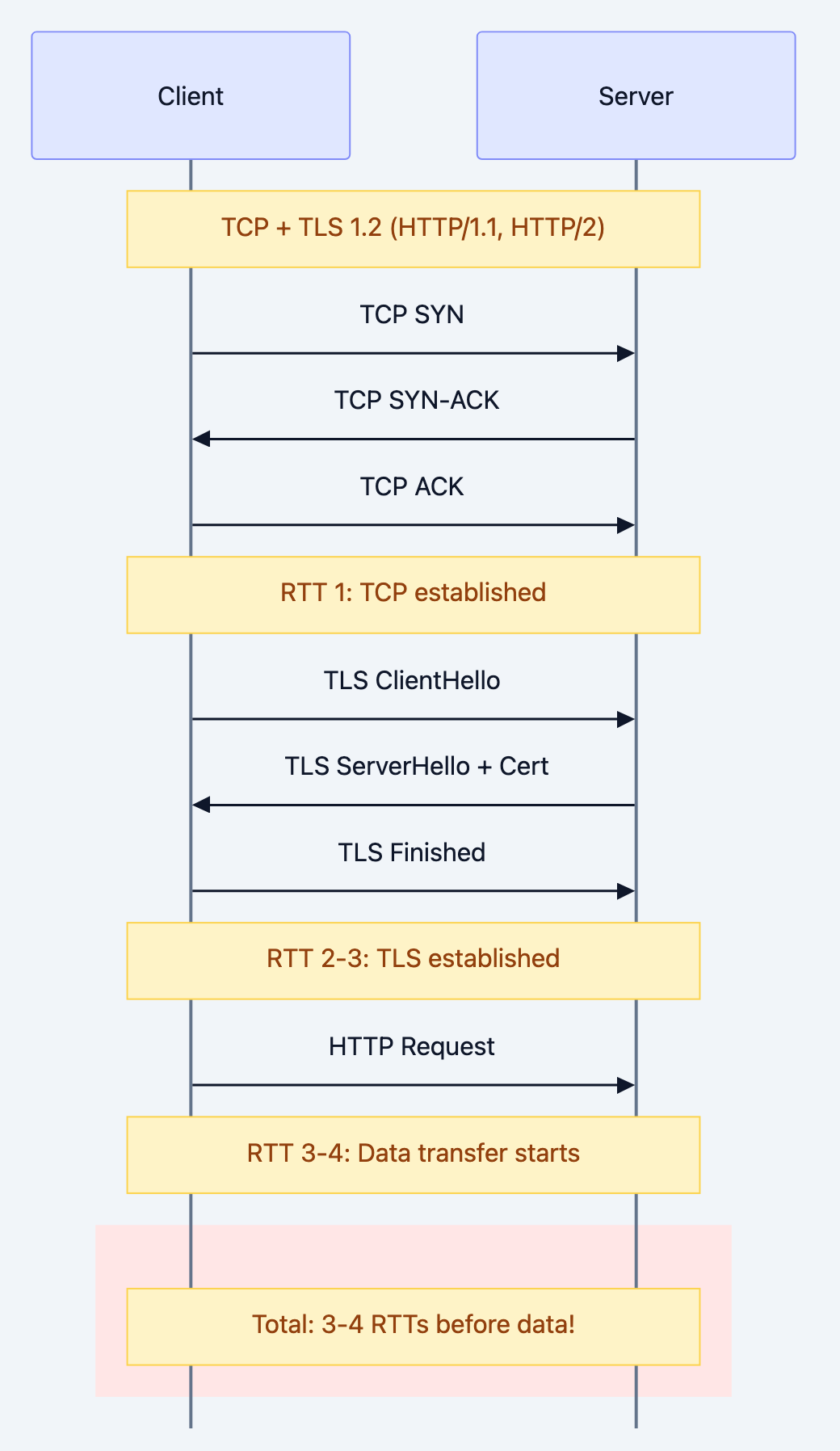

Compare handshakes:

TCP + TLS 1.2 (HTTP/1.1, HTTP/2)

QUIC + TLS 1.3 (HTTP/3)

Latency savings:

| Scenario | TCP + TLS 1.2 | QUIC | Savings |
|---|---|---|---|
| First connection | 3 RTT | 1 RTT | 67% faster |
| Resumption | 2 RTT | 0 RTT | 100% faster |
| First connection, 100 ms RTT | 300 ms | 100 ms | 200 ms saved |
| First connection, mobile (200 ms RTT) | 600 ms | 200 ms | 400 ms saved |

QUIC Packet Structure

```
QUIC Packet:
+------------------+
| Header           |
| - Connection ID  |  (unlike TCP's 4-tuple)
| - Packet Number  |  (always increasing)
| - Packet Type    |  (Initial, Handshake, 0-RTT, 1-RTT)
+------------------+
| Frames (multiple)|
| - STREAM frame   |
| - ACK frame      |
| - CRYPTO frame   |
+------------------+
| Auth Tag         |  (AEAD encryption)
+------------------+
```

Key differences from TCP:

- Connection ID (not source IP/port)
  - Survives IP changes
  - Variable-length identifier chosen by the endpoints (up to 20 bytes in QUIC version 1)
  - The client can change its IP address or port mid-connection

- Packet Number (not sequence number)
  - Always increases (never wraps)
  - Eliminates retransmission ambiguity
  - Simplifies loss detection

- Frames (not segments)
  - Multiple frames per packet
  - Multiple streams per packet
  - Flexible multiplexing

Stream Independence in QUIC


Mental model: Each QUIC stream is like an independent TCP connection, but all share congestion control and connection state.
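To make the mental model concrete, here is a toy simulation (not a protocol implementation) of when each packet's data can be handed to the application. It assumes one stream per packet and uses made-up arrival ticks; `deliverTCP` models a single ordered byte stream, `deliverQUIC` models per-stream ordering.

```go
package main

import "fmt"

// packet is one datagram on the wire carrying data for a single stream.
type packet struct {
	stream  int // stream the payload belongs to
	arrival int // tick at which the packet (or its retransmission) arrives
}

// deliverTCP models one ordered byte stream: a packet's data is released
// only once every earlier packet has arrived, regardless of stream.
func deliverTCP(pkts []packet) []int {
	out := make([]int, len(pkts))
	latest := 0
	for i, p := range pkts {
		if p.arrival > latest {
			latest = p.arrival
		}
		out[i] = latest
	}
	return out
}

// deliverQUIC models per-stream ordering: a packet's data waits only for
// earlier packets that belong to the same stream.
func deliverQUIC(pkts []packet) []int {
	out := make([]int, len(pkts))
	latest := map[int]int{} // stream -> latest arrival seen so far
	for i, p := range pkts {
		if p.arrival > latest[p.stream] {
			latest[p.stream] = p.arrival
		}
		out[i] = latest[p.stream]
	}
	return out
}

func main() {
	// The second packet (stream 1) is lost; its retransmission arrives at tick 10.
	pkts := []packet{{1, 1}, {1, 10}, {3, 3}, {5, 4}, {1, 5}}
	fmt.Println("TCP delivery ticks: ", deliverTCP(pkts))
	fmt.Println("QUIC delivery ticks:", deliverQUIC(pkts))
}
```

In this run the packets for streams 3 and 5 are delivered at their own arrival ticks under the QUIC model, while the TCP model holds everything until tick 10. Stream 1 waits in both cases, and the sketch ignores the shared congestion control mentioned above.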

Connection Migration


Compare with TCP:

```
TCP (4-tuple: src_ip, src_port, dst_ip, dst_port):
  WiFi: 192.168.1.5:12345 → server:443
        ↓ (IP changes)
  4G:   10.0.0.42:12345 → server:443
  New 4-tuple = New connection = Dropped streams

QUIC (Connection ID):
  WiFi: 192.168.1.5:12345, ConnID=0x1234
        ↓ (IP changes)
  4G:   10.0.0.42:12345, ConnID=0x1234
  Same ConnID = Same connection = Streams continue!
```
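The comparison boils down to which key a server uses to find connection state. The sketch below is a simplified model with two maps, one keyed by 4-tuple and one keyed by connection ID. The types, the helper names `tcpLookup` and `quicLookup`, and the server address `203.0.113.10` (a documentation-range placeholder) are all assumptions for illustration.

```go
package main

import "fmt"

// fourTuple is how a TCP stack identifies a connection.
type fourTuple struct {
	srcIP   string
	srcPort int
	dstIP   string
	dstPort int
}

// session stands in for per-connection state (open streams, keys, ...).
type session struct{ label string }

// tcpLookup finds connection state the way a TCP stack does: by 4-tuple.
func tcpLookup(table map[fourTuple]*session, t fourTuple) (*session, bool) {
	s, ok := table[t]
	return s, ok
}

// quicLookup finds connection state by the connection ID carried in the
// packet header; the source address plays no part in the lookup.
func quicLookup(table map[string]*session, connID []byte) (*session, bool) {
	s, ok := table[string(connID)]
	return s, ok
}

func main() {
	wifi := fourTuple{"192.168.1.5", 12345, "203.0.113.10", 443}
	cell := fourTuple{"10.0.0.42", 12345, "203.0.113.10", 443}
	connID := []byte{0x12, 0x34}

	tcpTable := map[fourTuple]*session{wifi: &session{label: "tcp"}}
	quicTable := map[string]*session{string(connID): &session{label: "quic"}}

	// After the network switch, packets arrive from the cellular address.
	_, tcpOK := tcpLookup(tcpTable, cell)
	_, quicOK := quicLookup(quicTable, connID)
	fmt.Println("TCP state found after IP change: ", tcpOK)
	fmt.Println("QUIC state found after IP change:", quicOK)
}
```

A real QUIC server would also validate the new path before sending much data to it; this sketch covers only the lookup.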

Deep Technical Dive

QUIC Frame Types

QUIC uses frames (not a continuous byte stream like TCP):

```go
// STREAM frame: carries application data
type StreamFrame struct {
	StreamID uint64 // Which stream
	Offset   uint64 // Byte offset in stream
	Length   uint64 // Frame length
	Fin      bool   // Last frame in stream?
	Data     []byte // Actual payload
}

// ACK frame: acknowledges received packets
type AckFrame struct {
	LargestAcked uint64     // Highest packet number received
	AckDelay     uint64     // Delay since packet received
	AckRanges    []AckRange // Ranges of acked packets
}

// AckRange is an inclusive range of packet numbers (illustrative helper type).
type AckRange struct {
	Smallest, Largest uint64
}

// CRYPTO frame: TLS handshake data
type CryptoFrame struct {
	Offset uint64
	Data   []byte // TLS 1.3 messages
}

// CONNECTION_CLOSE frame: closes the connection
type ConnectionCloseFrame struct {
	ErrorCode    uint64
	ReasonPhrase string
}
```
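The structs above hold fields such as `StreamID` and `Offset` as `uint64`, but on the wire QUIC writes most integer fields with a variable-length encoding (RFC 9000, Section 16): the top two bits of the first byte select a total length of 1, 2, 4, or 8 bytes. This detail is not covered in the text above, and the helper names `appendVarint` and `readVarint` are illustrative, so treat it as a sketch.

```go
package main

import (
	"errors"
	"fmt"
)

// appendVarint appends v using QUIC's variable-length integer encoding.
// The two most significant bits of the first byte encode the length.
func appendVarint(b []byte, v uint64) ([]byte, error) {
	switch {
	case v < 1<<6:
		return append(b, byte(v)), nil
	case v < 1<<14:
		return append(b, 0x40|byte(v>>8), byte(v)), nil
	case v < 1<<30:
		return append(b, 0x80|byte(v>>24), byte(v>>16), byte(v>>8), byte(v)), nil
	case v < 1<<62:
		return append(b, 0xc0|byte(v>>56), byte(v>>48), byte(v>>40), byte(v>>32),
			byte(v>>24), byte(v>>16), byte(v>>8), byte(v)), nil
	}
	return b, errors.New("value does not fit in 62 bits")
}

// readVarint decodes one varint from the front of b and reports how many
// bytes it consumed.
func readVarint(b []byte) (v uint64, n int, err error) {
	if len(b) == 0 {
		return 0, 0, errors.New("empty input")
	}
	n = 1 << (b[0] >> 6) // 1, 2, 4, or 8 bytes
	if len(b) < n {
		return 0, 0, errors.New("truncated varint")
	}
	v = uint64(b[0] & 0x3f) // drop the two length bits
	for _, c := range b[1:n] {
		v = v<<8 | uint64(c)
	}
	return v, n, nil
}

func main() {
	for _, v := range []uint64{37, 15293, 494878333} {
		enc, _ := appendVarint(nil, v)
		dec, n, _ := readVarint(enc)
		fmt.Printf("%d -> % x (%d bytes) -> %d\n", v, enc, n, dec)
	}
}
```

Small values such as low stream IDs therefore cost a single byte, which keeps frame headers compact.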

Code Deep Dive: HTTP/3 Client in Go

Example 1: Basic HTTP/3 Client

```go
package main

import (
	"crypto/tls"
	"fmt"
	"io"
	"log"
	"net/http"
	"time"

	"github.com/quic-go/quic-go"
	"github.com/quic-go/quic-go/http3"
)

func main() {
	// HTTP/3 transport: uses QUIC under the hood.
	// Note: recent quic-go releases name this type http3.Transport
	// (older releases: http3.RoundTripper); adjust to your version.
	transport := &http3.Transport{
		// TLS configuration
		TLSClientConfig: &tls.Config{
			MinVersion: tls.VersionTLS13, // QUIC requires TLS 1.3
			ServerName: "quic.tech",      // Server name for SNI
		},
		// QUIC configuration
		QUICConfig: &quic.Config{
			MaxIdleTimeout:  30 * time.Second, // Maximum idle timeout
			KeepAlivePeriod: 10 * time.Second, // Keep connections alive
		},
	}
	defer transport.Close()

	// 0-RTT on the client additionally needs a TLS session cache
	// (tls.Config.ClientSessionCache) so a later connection can resume.
	client := &http.Client{Transport: transport}

	// Make HTTP/3 request
	// Protocol negotiation via QUIC ALPN: h3
	resp, err := client.Get("https://quic.tech:8080/")
	if err != nil {
		log.Fatal(err)
	}
	defer resp.Body.Close()

	fmt.Printf("Protocol: %s\n", resp.Proto) // HTTP/3.0
	fmt.Printf("Status: %s\n", resp.Status)

	body, err := io.ReadAll(resp.Body)
	if err != nil {
		log.Fatal(err)
	}
	fmt.Printf("Body: %s\n", body)
}
```

What happens under the hood:

- DNS Resolution: Resolve domain to IP

- QUIC Handshake (1-RTT):

```
Client → Server: Initial packet (TLS ClientHello + QUIC params)
Server → Client: Initial + Handshake packets (TLS ServerHello + cert)
Client → Server: Handshake packet (TLS Finished)
Connection established!
```

- HTTP/3 Request:
  - Sent on a QUIC stream
  - QPACK-compressed headers
  - Similar to HTTP/2, but with different header compression

- Response:
  - Received on the same stream
  - Multiple DATA frames
  - Connection stays open for more requests

Example 2: HTTP/3 Server with 0-RTT

```go
package main

import (
	"fmt"
	"log"
	"net/http"

	"github.com/quic-go/quic-go"
	"github.com/quic-go/quic-go/http3"
)

func main() {
	// HTTP/3 server
	server := &http3.Server{
		Addr: ":8080",
		// QUIC configuration (field is named QuicConfig in older quic-go releases)
		QUICConfig: &quic.Config{
			// Allow 0-RTT data
			// Why? Returning clients can send data immediately
			Allow0RTT: true,
			// Cap on concurrent streams a peer may open
			MaxIncomingStreams: 1000,
		},
		Handler: http.HandlerFunc(handler),
	}

	// Start server (requires a TLS certificate)
	log.Println("HTTP/3 server listening on :8080")
	if err := server.ListenAndServeTLS("cert.pem", "key.pem"); err != nil {
		log.Fatal(err)
	}
}

func handler(w http.ResponseWriter, r *http.Request) {
	// quic-go marks requests received as 0-RTT data with
	// r.TLS.HandshakeComplete == false.
	zeroRTT := r.TLS != nil && !r.TLS.HandshakeComplete

	// WARNING: 0-RTT requests can be replayed.
	// Only use for idempotent operations (GET, not POST).
	if zeroRTT && r.Method != http.MethodGet {
		// 425 Too Early asks the client to retry after the handshake.
		http.Error(w, "0-RTT not allowed for non-GET", http.StatusTooEarly)
		return
	}

	// Headers must be set before the first write to the body.
	w.Header().Set("Content-Type", "text/plain")
	if zeroRTT {
		fmt.Fprintf(w, "0-RTT request! Instant data transfer!\n")
	} else {
		fmt.Fprintf(w, "1-RTT request (standard handshake)\n")
	}

	// Handle request normally
	fmt.Fprintf(w, "Hello from HTTP/3!\n")
	fmt.Fprintf(w, "Protocol: %s\n", r.Proto)
}
```

0-RTT Security Consideration:

```go
// 0-RTT allows replay attacks:
// an attacker can capture and replay the encrypted packet.
// transferMoney and sendData are placeholders for application code.

// DANGEROUS with 0-RTT:
func dangerousHandler(w http.ResponseWriter, r *http.Request) {
	if r.Method == http.MethodPost {
		transferMoney(1000) // Transfer $1000: can be replayed!
	}
}

// SAFE with 0-RTT:
func safeHandler(w http.ResponseWriter, r *http.Request) {
	if !r.TLS.HandshakeComplete {
		// Reject non-idempotent operations in 0-RTT
		if r.Method != http.MethodGet && r.Method != http.MethodHead {
			http.Error(w, "0-RTT not allowed", http.StatusTooEarly)
			return
		}
	}
	// Safe: GET is idempotent
	sendData(w)
}
```

Example 3: Connection Migration Simulation

```go
package main

import (
	"context"
	"crypto/tls"
	"fmt"
	"time"

	"github.com/quic-go/quic-go"
)

// Simulate mobile device switching networks
func main() {
	ctx := context.Background()

	// Create QUIC connection
	conn, err := quic.DialAddr(
		ctx,
		"quic.tech:8080",
		&tls.Config{
			MinVersion: tls.VersionTLS13,
			NextProtos: []string{"h3"}, // QUIC requires ALPN; must match the server
		},
		&quic.Config{},
	)
	if err != nil {
		panic(err)
	}
	defer conn.CloseWithError(0, "")

	// quic-go does not expose the connection ID through ConnectionState(),
	// so only the local address is printed here.
	fmt.Printf("Connected from: %s\n", conn.LocalAddr())

	// Open stream and send data
	stream, err := conn.OpenStreamSync(ctx)
	if err != nil {
		panic(err)
	}
	stream.Write([]byte("Request from WiFi"))

	// Simulate network change (WiFi → Mobile)
	time.Sleep(2 * time.Second)
	fmt.Println("\n🔄 Switching from WiFi to Mobile network...")

	// In a real scenario the OS would change the IP address.
	// The connection is identified by its Connection ID rather than the
	// address, so there is no need to reconnect. This program only
	// simulates the switch; the socket stays bound to the same address.

	// Continue using same stream
	stream.Write([]byte("Request from Mobile"))

	// Read response
	buf := make([]byte, 1024)
	n, _ := stream.Read(buf)
	fmt.Printf("Response: %s\n", buf[:n])
	fmt.Println("\nConnection survived network change!")
}
```

What this demonstrates:

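- The connection is identified by Connection IDs rather than the IP address, so it survives the IP change (in practice the client switches to another ID the server issued earlier, so observers cannot link the two paths)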

- Streams continue uninterrupted

- No reconnection overhead

- Critical for mobile apps

Loss Detection and Recovery

QUIC improves on TCP's loss detection:

```go
// TCP: retransmission ambiguity
// A retransmission reuses the original sequence number, so the sender
// cannot tell which transmission an ACK refers to.
//   Original send: Seq 100
//   Retransmit:    Seq 100 (SAME!)
//   ACK:           Ack 101
//   ❓ Was it for the original or the retransmit?

// QUIC: no ambiguity
// Packet numbers are NEVER reused.
//   Original send: Packet #100
//   Retransmit:    Packet #150 (NEW!)
//   ACK:           Ack for #150
//   Clear: the ACK is for the retransmission
```

Loss detection algorithm:

```go
type SentPacket struct {
	SentTime time.Time
}

type LossDetection struct {
	sentPackets  map[uint64]SentPacket // unacknowledged packets only
	largestAcked uint64
	smoothedRTT  time.Duration
}

// DetectLoss returns the unacknowledged packets that should be declared lost.
// It assumes acknowledged packets were already removed from sentPackets and
// largestAcked was updated from the latest ACK frame.
func (ld *LossDetection) DetectLoss() []uint64 {
	var lostPackets []uint64
	for packetNum, pkt := range ld.sentPackets {
		// Only packets sent before the largest acknowledged one qualify.
		if packetNum >= ld.largestAcked {
			continue
		}
		// 1. Packet threshold: a packet at least 3 numbers ahead was acked.
		byCount := ld.largestAcked-packetNum >= 3
		// 2. Time threshold: sent more than RTT * 9/8 ago.
		byTime := time.Since(pkt.SentTime) > ld.smoothedRTT*9/8
		if byCount || byTime {
			lostPackets = append(lostPackets, packetNum)
		}
	}
	return lostPackets
}
```

Benefits & Why HTTP/3 Matters

Performance Gains

Illustrative measurements (exact numbers vary by network and implementation):

| Scenario | HTTP/2 | HTTP/3 | Improvement |
|---|---|---|---|
| Low packet loss (0.1%) | 1.2s | 1.1s | 8% faster |
| Medium loss (1%) | 2.1s | 1.4s | 33% faster |
| High loss (3%) | 4.5s | 1.9s | 58% faster |
| Mobile network switch | Reconnect required | No reconnect | Connection preserved |
| Handshake (first visit) | 300ms | 100ms | 67% faster |
| Handshake (returning) | 200ms | 0ms | 100% faster |

Why these gains?

- No TCP head-of-line blocking
  - HTTP/2: 1 lost packet → ALL streams wait
  - HTTP/3: 1 lost packet → Only the affected stream waits

- Faster handshake
  - HTTP/2: TCP (1 RTT) + TLS 1.2 (2 RTT) = 3 RTT
  - HTTP/3: QUIC + TLS integrated = 1 RTT (0 RTT for resumption!)

- Connection migration
  - HTTP/2: Network change = new connection = lost requests
  - HTTP/3: Network change = same connection = continue seamlessly

- Better congestion control
  - QUIC can update its congestion algorithm without an OS update
  - Experiments: BBR, Cubic, Reno (userspace!)

Mobile Performance

Why HTTP/3 shines on mobile:

- High latency (50-300ms)
  - 0-RTT saves 100-600ms per connection
  - Critical for perceived performance

- Packet loss (1-5% typical)
  - Independent streams avoid cascading delays
  - 30-60% faster page loads

- Network switching (WiFi ↔ 4G ↔ 5G)
  - Connection migration = seamless
  - No dropped requests, no reconnect

- Battery life
  - Fewer reconnections = less radio usage
  - Estimated 5-10% battery savings

Trade-offs & Gotchas

When to Use HTTP/3

Perfect for:

- Mobile applications
  - High packet loss tolerance
  - Network switching common
  - Latency-sensitive

- Video streaming
  - Independent streams for quality levels
  - Adaptive bitrate benefits
  - Loss doesn't cascade

- Real-time applications
  - Low latency critical
  - 0-RTT for quick reconnections
  - WebRTC over QUIC

- Modern web apps
  - Many concurrent requests
  - API calls + assets
  - Progressive enhancement

When HTTP/3 Might Not Help

⚠️ Limited benefits:

- Single large file download
  - No multiplexing benefit
  - UDP overhead slightly worse than TCP
  - HTTP/2 performs equally

- Perfect networks
  - 0% packet loss
  - No latency variation
  - HTTP/2 performs equally

- Very old networks
  - UDP blocked by firewalls
  - Must fall back to HTTP/2
  - Adds complexity

Common Mistakes

Mistake 1: Not Implementing Fallback

```go
// BAD: HTTP/3 only
// (http3.Transport is named http3.RoundTripper in older quic-go releases)
h3Only := &http.Client{Transport: &http3.Transport{}}
resp, err := h3Only.Get(url) // Fails if the server doesn't support HTTP/3!

// GOOD: Try HTTP/3, fall back to HTTP/2
var (
	h3Client = &http.Client{Transport: &http3.Transport{}}
	h2Client = &http.Client{} // net/http negotiates HTTP/2 over TLS
)

func robustGet(url string) (*http.Response, error) {
	// Try HTTP/3 first
	if resp, err := h3Client.Get(url); err == nil {
		return resp, nil
	}
	// Fall back to HTTP/2
	return h2Client.Get(url)
}
```

Mistake 2: Using 0-RTT for Non-Idempotent Operations

```go
// DANGEROUS: 0-RTT POST
func unsafeHandler(w http.ResponseWriter, r *http.Request) {
	if r.Method == http.MethodPost {
		processPayment() // Can be replayed!
	}
}

// SAFE: Check handshake completion
func safeHandler(w http.ResponseWriter, r *http.Request) {
	if !r.TLS.HandshakeComplete && r.Method != http.MethodGet {
		http.Error(w, "0-RTT not allowed for POST", http.StatusTooEarly)
		return
	}
	processPayment() // Safe now
}
```

Mistake 3: Ignoring UDP Blockage

```go
// BAD: No fallback strategy
server := &http3.Server{Addr: ":443"}
server.ListenAndServeTLS("cert", "key")
// Clients behind UDP-blocking firewalls can't connect!

// GOOD: Alt-Svc header for discovery
http2Server := &http.Server{
	Addr: ":443",
	Handler: http.HandlerFunc(func(w http.ResponseWriter, r *http.Request) {
		// Advertise HTTP/3 availability
		w.Header().Set("Alt-Svc", `h3=":443"; ma=2592000`)
		// Serve via HTTP/2
		serveContent(w, r)
	}),
}
http3Server := &http3.Server{Addr: ":443", Handler: http2Server.Handler}

// Run both servers: TCP and UDP can share port 443.
// ListenAndServeTLS takes only the cert and key files; the address
// comes from the Addr field.
go func() { log.Fatal(http2Server.ListenAndServeTLS("cert", "key")) }()
log.Fatal(http3Server.ListenAndServeTLS("cert", "key"))
```
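On the client side, discovery means reading that `Alt-Svc` value and checking whether it offers `h3`. The sketch below is a simplified parser for values shaped like the one above. `parseAltSvc` and `altService` are illustrative names, and the naive comma and semicolon splitting is an assumption that would break on quoted values containing those characters, so a production client should use a full structured parser.

```go
package main

import (
	"fmt"
	"strconv"
	"strings"
)

// altService is one parsed Alt-Svc entry.
type altService struct {
	protocol  string // ALPN token, e.g. "h3"
	authority string // "host:port"; the host part may be empty
	maxAge    int    // freshness lifetime in seconds
}

// parseAltSvc parses a simple Alt-Svc header value. Entries without an
// "ma" parameter get the 24-hour default.
func parseAltSvc(header string) []altService {
	if strings.TrimSpace(header) == "clear" {
		return nil
	}
	var out []altService
	for _, entry := range strings.Split(header, ",") {
		parts := strings.Split(entry, ";")
		proto, auth, ok := strings.Cut(strings.TrimSpace(parts[0]), "=")
		if !ok {
			continue // not a protocol=authority pair
		}
		svc := altService{
			protocol:  proto,
			authority: strings.Trim(auth, `"`),
			maxAge:    86400,
		}
		for _, p := range parts[1:] {
			k, v, _ := strings.Cut(strings.TrimSpace(p), "=")
			if k == "ma" {
				if n, err := strconv.Atoi(v); err == nil {
					svc.maxAge = n
				}
			}
		}
		out = append(out, svc)
	}
	return out
}

func main() {
	for _, svc := range parseAltSvc(`h3=":443"; ma=2592000, h2=":443"`) {
		fmt.Printf("%s at %q, fresh for %ds\n", svc.protocol, svc.authority, svc.maxAge)
	}
}
```

A client that finds an `h3` entry can attempt QUIC on the next request and keep the existing HTTP/2 connection as the fallback if UDP turns out to be blocked.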

Mistake 4: Not Tuning QUIC Parameters

```go
// BAD: Default settings for all scenarios
defaultClient := &http.Client{Transport: &http3.Transport{}}

// GOOD: Tune for use case
tunedClient := &http.Client{
	Transport: &http3.Transport{
		QUICConfig: &quic.Config{
			// High-latency networks
			MaxIdleTimeout: 60 * time.Second,
			// Many concurrent downloads
			MaxIncomingStreams: 1000,
			// Enable datagrams (unreliable delivery for real-time media)
			EnableDatagrams: true,
		},
	},
}
```

Performance Considerations

UDP Processing Overhead:

```
CPU usage per packet:
  TCP:  ~1,000 cycles (kernel optimized over 40 years)
  QUIC: ~2,000 cycles (userspace, newer)

Trade-off: 2x CPU for better latency and features
Modern servers: Negligible impact
```

Memory Usage:

```go
// QUIC maintains more state than TCP
type QUICConnection struct {
	streams        map[uint64]*Stream     // Per-stream state
	sentPackets    map[uint64]*SentPacket // Loss detection
	recvPackets    map[uint64]*RecvPacket // Reordering
	cryptoState    *CryptoState           // TLS state
	congestionCtrl *CongestionControl     // CC state
}

// Typical: 64-128 KB per connection
// vs TCP: 4-8 KB per connection
// Mitigation: Connection pooling, timeouts
```

Monitoring:

```go
// Track QUIC metrics
type QUICMetrics struct {
	ConnectionsMigrated atomic.Int64
	ZeroRTTAccepted     atomic.Int64
	ZeroRTTRejected     atomic.Int64
	PacketsLost         atomic.Int64
	StreamsBlocked      atomic.Int64
}

func logMetrics(m *QUICMetrics) {
	log.Printf("Migrations: %d, 0-RTT: %d/%d, Loss: %d",
		m.ConnectionsMigrated.Load(),
		m.ZeroRTTAccepted.Load(),
		m.ZeroRTTAccepted.Load()+m.ZeroRTTRejected.Load(),
		m.PacketsLost.Load())
}
```

Security Considerations

1. Amplification Attacks

QUIC includes anti-amplification:

```go
// Server limits response size until the address is validated.
// Initial packet from an unknown address:
// the server responds with at most 3x the bytes it has received.
type AddressValidation struct {
	validated bool
	bytesSent int
	bytesRecv int
}

func (av *AddressValidation) CanSend(size int) bool {
	if av.validated {
		return true
	}
	// 3x amplification limit
	return av.bytesSent+size <= av.bytesRecv*3
}
```

2. Connection ID Encryption

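```go
// Connection IDs can be encrypted to prevent tracking
type ConnectionID struct {
	Raw       []byte
	Encrypted bool
}

// idRotator is a hypothetical interface for illustration only;
// it is not part of quic-go's public API.
type idRotator interface {
	SetConnectionID(id ConnectionID)
}

// Rotate IDs periodically so a long-lived ID cannot be used to track a client
func rotateConnectionID(conn idRotator) error {
	raw := make([]byte, 8)
	if _, err := rand.Read(raw); err != nil { // crypto/rand
		return err
	}
	conn.SetConnectionID(ConnectionID{Raw: raw})
	return nil
}
```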

3. 0-RTT Replay Protection

```go
// Use unique tokens per connection
type ZeroRTTToken struct {
	IssuedAt time.Time
	ClientIP net.IP
	Nonce    [32]byte // Random, single-use
}

// seenNonce and markNonceSeen stand in for a shared replay cache.
func validateToken(token *ZeroRTTToken) bool {
	// Check expiry
	if time.Since(token.IssuedAt) > 24*time.Hour {
		return false
	}
	// Check if already used (store in cache)
	if seenNonce(token.Nonce) {
		return false // Replay attack!
	}
	markNonceSeen(token.Nonce)
	return true
}
```

Comparison with Alternatives

| Feature | HTTP/2 (TCP) | HTTP/3 (QUIC) | WebSocket | WebRTC |
|---|---|---|---|---|
| Transport | TCP | UDP (QUIC) | TCP | UDP |
| Multiplexing | Yes | Yes | No | Yes |
| Head-of-line Blocking | TCP level | None | TCP level | None |
| Connection Migration | No | Yes | No | ⚠️ Limited |
| 0-RTT Handshake | No | Yes | No | ⚠️ Partial |
| Bidirectional | Yes | Yes | Yes | Yes |
| Browser Support | 97% | 75% | 98% | 95% |
| Use Case | Modern web | Mobile/lossy networks | Real-time chat | Video calls |

Interview Preparation

Question 1: Why was QUIC built on UDP instead of improving TCP?

Answer:

TCP is ossified - impossible to change meaningfully:

- Kernel implementation
  - TCP is in the OS kernel
  - Updating requires OS updates
  - Takes years to deploy changes

- Middlebox interference
  - Routers, firewalls, NATs expect TCP behavior
  - They drop or modify unexpected TCP options
  - Example: Google tried TCP Fast Open - blocked by middleboxes

- Backward compatibility burden
  - Any TCP change must work with 40 years of implementations
  - Innovation is glacially slow

UDP advantages:

- Userspace implementation
  - QUIC runs in the application
  - Update via software deployment
  - Chrome can ship QUIC changes with each browser release!

- Clean slate
  - Build exactly what's needed
  - No legacy baggage
  - Modern congestion control, loss detection

- Bypass ossification
  - Most middleboxes pass UDP through, and QUIC's encryption hides the rest from them
  - Application controls everything

Why they ask: Tests understanding of protocol evolution constraints

Red flags:

- "UDP is faster" (not the reason)

- Not mentioning ossification

- Thinking it's just about performance

Pro tip: "QUIC proves that sometimes the best way forward is to build on a simpler foundation (UDP) rather than trying to fix a complex one (TCP). This is a valuable lesson in system design."

Question 2: How does QUIC achieve 0-RTT connection establishment?

Answer:

0-RTT uses session resumption:

First connection (1-RTT):

1. Client → Server: ClientHello
2. Server → Client: ServerHello + Session Ticket
3. Connection established

Resumption (0-RTT):

1. Client → Server: Encrypted 0-RTT data + Session Ticket (application data sent immediately!)
2. Server validates ticket, processes data
3. Server → Client: Response

Session ticket contains:

- Encryption keys (PSK - Pre-Shared Key)

- Transport parameters

- Expiration time (24-48 hours typical)

Code example:

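```go
// Session tickets are handled by crypto/tls: sharing one session cache
// across dials lets a later connection resume the earlier one.
tlsConfig := &tls.Config{
	NextProtos:         []string{"h3"},
	ClientSessionCache: tls.NewLRUClientSessionCache(64),
}

// First connection: full handshake; the ticket lands in the cache
conn, err := quic.DialAddr(ctx, addr, tlsConfig, &quic.Config{})

// Later connection: DialAddrEarly returns before the handshake completes
early, err := quic.DialAddrEarly(ctx, addr, tlsConfig, &quic.Config{})
stream, err := early.OpenStream()

// Can send data immediately!
stream.Write(data) // Sent as 0-RTT if the server accepts the ticket
```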

Security trade-off:

- 0-RTT is vulnerable to replay attacks

- Only use for idempotent operations (GET, not POST)

- Server must detect replays

Why they ask: Core QUIC innovation, tests security awareness

Red flags:

- Not mentioning replay risk

- Confusing with TCP Fast Open

- Not explaining session tickets

Pro tip: "0-RTT is perfect for CDNs and API calls (GET requests), but dangerous for payments. Always check TLS.HandshakeComplete before processing sensitive operations."

Question 3: How does QUIC handle connection migration?

Answer:

Connection migration allows seamless network changes:

Problem with TCP:

```
TCP connection = (src_ip, src_port, dst_ip, dst_port)

WiFi:   192.168.1.5:12345 → server:443
        ↓ Network change
Mobile: 10.0.0.42:12345 → server:443

Different 4-tuple = New connection = Lost state
```

QUIC solution: Connection IDs

```
QUIC connection = Connection ID (variable length, up to 20 bytes)

WiFi:   192.168.1.5:12345, ConnID=0xABCD
        ↓ Network change
Mobile: 10.0.0.42:54321, ConnID=0xABCD

Same ConnID = Same connection = Streams continue!
```

Migration process:

- Client detects network change (OS event)

- Client sends packet from new IP with same Connection ID

- Server validates via PATH_CHALLENGE frame (prevents spoofing)

- Connection continues seamlessly

Code simulation:

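```go
// Pseudocode: Path, SendPathChallenge, and MigrateTo are illustrative
// names, not a real library API.

// Server receives packet from new address
if packet.ConnectionID == existingConn.ID {
	// Same connection, new path
	newPath := Path{
		RemoteAddr: newAddr,
		LocalAddr:  localAddr,
	}
	// Validate new path (PATH_CHALLENGE / PATH_RESPONSE)
	if existingConn.SendPathChallenge(newPath) {
		// Only migrate once the path is validated
		existingConn.MigrateTo(newPath)
	}
}
```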
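The validation step can be sketched in a self-contained way. In QUIC a PATH_CHALLENGE frame carries 8 bytes of unpredictable data, and the peer proves it can receive at the new address by echoing them in a PATH_RESPONSE. The `pathValidator` type below is a hypothetical model of that exchange with no networking, timers, or retries.

```go
package main

import (
	"crypto/rand"
	"fmt"
)

// pathValidator tracks outstanding challenges per candidate address.
type pathValidator struct {
	pending   map[string][8]byte // address -> challenge data sent
	validated map[string]bool    // addresses that echoed correctly
}

func newPathValidator() *pathValidator {
	return &pathValidator{
		pending:   map[string][8]byte{},
		validated: map[string]bool{},
	}
}

// challenge generates the 8 random bytes to send in a PATH_CHALLENGE frame.
func (v *pathValidator) challenge(addr string) ([8]byte, error) {
	var data [8]byte
	if _, err := rand.Read(data[:]); err != nil {
		return data, err
	}
	v.pending[addr] = data
	return data, nil
}

// onResponse handles a PATH_RESPONSE: the path is validated only if the
// echoed bytes match the challenge sent to that address.
func (v *pathValidator) onResponse(addr string, echoed [8]byte) bool {
	want, ok := v.pending[addr]
	if !ok || want != echoed {
		return false
	}
	delete(v.pending, addr)
	v.validated[addr] = true
	return true
}

func main() {
	v := newPathValidator()
	newAddr := "10.0.0.42:54321"

	data, err := v.challenge(newAddr)
	if err != nil {
		panic(err)
	}
	// A peer that really receives packets at newAddr echoes the data back.
	fmt.Println("path validated:", v.onResponse(newAddr, data))
}
```

An attacker who spoofs the source address never sees the challenge bytes, so it cannot produce a matching response and the server keeps using the old path.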

Why this matters:

- Mobile users switch networks constantly

- No reconnection overhead

- Better user experience (no interruptions)

Why they ask: Unique QUIC feature, tests mobile understanding

Red flags:

- Not explaining Connection IDs

- Ignoring security (path validation)

- Not mentioning why TCP can't do this

Pro tip: "Connection migration is crucial for mobile apps - a YouTube video shouldn't buffer when you walk from WiFi to your car (switching to 4G). This is why YouTube uses QUIC."

Question 4: What is the main difference between HTTP/2 and HTTP/3?

Answer:

Transport layer:

- HTTP/2: Built on TCP

- HTTP/3: Built on QUIC (over UDP)

But they're almost identical at HTTP level:

- Same semantics (methods, headers, status codes)

- Same multiplexing concept

- Different header compression (HPACK vs QPACK)

Key differences:

- Head-of-line blocking
  - HTTP/2: TCP packet lost → All streams wait
  - HTTP/3: QUIC packet lost → Only the affected stream waits

- Connection establishment
  - HTTP/2: TCP (1 RTT) + TLS 1.2 (2 RTT) = 3 RTT
  - HTTP/3: Integrated = 1 RTT (0 RTT with resumption)

- Connection migration
  - HTTP/2: IP change = reconnect
  - HTTP/3: IP change = seamless continuation

- Protocol evolution
  - HTTP/2: TCP changes need OS updates
  - HTTP/3: QUIC changes via app updates

HTTP/3 = HTTP/2 semantics + QUIC transport = Keep the good (multiplexing) + Fix the bad (TCP limitations)

Why they ask: Core understanding of both protocols

Red flags:

- "HTTP/3 is just faster" (explain HOW and WHEN)

- Not mentioning QUIC

- Thinking they're completely different

Pro tip: "Think of HTTP/3 as HTTP/2 'done right' - same ideas, but without TCP's constraints. This is why migration from HTTP/2 to HTTP/3 is relatively easy."

Question 5: When would you choose HTTP/2 over HTTP/3?

Answer:

Choose HTTP/2 when:

- Maximum compatibility required
  - HTTP/2: 97% browser support
  - HTTP/3: 75% browser support
  - Corporate networks: Often block UDP

- Perfect networks
  - 0% packet loss: HTTP/2 ≈ HTTP/3 performance
  - Data center networks: Often perfect
  - No benefit from QUIC's loss recovery

- Simpler deployment
  - HTTP/2: Standard TLS, well-understood
  - HTTP/3: Requires UDP firewall rules
  - HTTP/3: More complex monitoring

- Single long-lived connections
  - gRPC streaming: Connection migration not needed
  - WebSocket: Already handles reconnection

Choose HTTP/3 when:

- Mobile-first application
  - High packet loss
  - Frequent network switches
  - Latency-sensitive

- Global audience
  - Variable network quality
  - Long-distance connections
  - 0-RTT benefits

- Modern infrastructure
  - UDP-friendly networks
  - Monitoring tools support QUIC
  - Ability to maintain fallback

Best practice: Support both

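```go
// Run both servers (TCP and UDP can share port 443).
// Both servers have Addr: ":443" set; ListenAndServeTLS takes only
// the cert and key files.
go http2Server.ListenAndServeTLS("cert", "key")
go http3Server.ListenAndServeTLS("cert", "key")

// Advertise HTTP/3 via Alt-Svc (inside the HTTP/2 handler)
w.Header().Set("Alt-Svc", `h3=":443"; ma=2592000`)
```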

Why they ask: Tests practical decision-making

Red flags:

- "Always use HTTP/3" (ignores compatibility)

- "Never use HTTP/3" (ignores benefits)

- Not mentioning fallback strategy

Pro tip: "Major sites like Google, Facebook, and Cloudflare run both HTTP/2 and HTTP/3, using the Alt-Svc header for discovery. Clients try HTTP/3 and fall back to HTTP/2 if needed. This is the industry-standard approach."

Key Takeaways

🔑 QUIC solves TCP's architectural constraints - By building on UDP, QUIC achieves independent stream delivery, connection migration, and faster handshakes impossible with TCP.

🔑 0-RTT eliminates handshake latency - Returning visitors can send data immediately (0 round-trips), compared to 2-3 RTTs for TCP+TLS, critical for mobile and API performance.

🔑 Connection migration enables seamless network switching - Connection IDs (not IP addresses) allow mobile devices to switch from WiFi to 4G without dropping connections or losing streams.

🔑 Stream independence prevents cascading delays - One lost packet blocks only its stream, not all streams like HTTP/2 over TCP, making HTTP/3 dramatically faster on lossy networks (30-60%).

🔑 Userspace implementation enables rapid evolution - QUIC can be updated via application deployment (weeks) instead of OS updates (years), allowing continuous performance improvements.

Insights & Reflection

The Philosophy of QUIC

QUIC embodies a radical principle: when you can't fix the foundation, build on a simpler one. TCP couldn't be fixed due to 40 years of ossification, so QUIC started fresh on UDP's blank slate.

This isn't just about networking—it's a lesson in system design. Sometimes refactoring is harder than rebuilding. Sometimes the best path forward is to abandon technical debt.

Connection to Broader Concepts

Ossification: Technology can become impossible to change. TCP, IPv4, BGP all suffer from this. The lesson: design for evolution from day one, or accept eventual replacement.

End-to-end principle revisited: QUIC moves transport logic from the kernel into the application and encrypts nearly all of it, so devices in the middle of the network can no longer inspect or alter it. This blurs traditional layering but proves pragmatic for modern needs.

Security by default: QUIC mandates encryption (TLS 1.3). This shows the industry learning - optional security leads to insecurity. Make the secure path the default path.

Evolution: The Transport Layer Revolution

The journey from TCP to QUIC mirrors other technology evolutions:

- Single-threaded → Multi-threaded (parallel processing)

- Monolith → Microservices (independent scaling)

- TCP → QUIC (independent streams)

All represent the same insight: independence enables resilience and performance.

The future: QUIC is becoming the foundation for more than HTTP. WebRTC over QUIC, DNS over QUIC, even SSH over QUIC are being explored. QUIC isn't just the future of web—it's the future of transport.

The deeper lesson: Sometimes the best innovation is removing constraints, not adding features. QUIC doesn't add much to UDP—it just builds the right abstractions without TCP's baggage.