Designing a Banking Database at Scale

The Stakes Are Different Here

When you design a database for a social media app and something goes wrong, users see a stale post. When you design a database for a bank and something goes wrong, people lose money. Careers end. Lawsuits happen. Trust evaporates.

Bank processes over 10 million transactions daily. That's roughly 115 transactions per second on average, with peaks hitting 1000+ TPS during salary days, festive seasons, and market hours. Every single transaction must be:

- Atomic: Either complete fully or not at all

- Consistent: Never violate business rules (negative balance, duplicate transfers)

- Isolated: Concurrent transactions don't interfere

- Durable: Once confirmed, never lost—even if the data center burns down

This guide covers everything: the schema design, transaction management, scaling strategies, disaster recovery, and the operational practices that keep a bank running.

Part 1: Understanding Banking Requirements

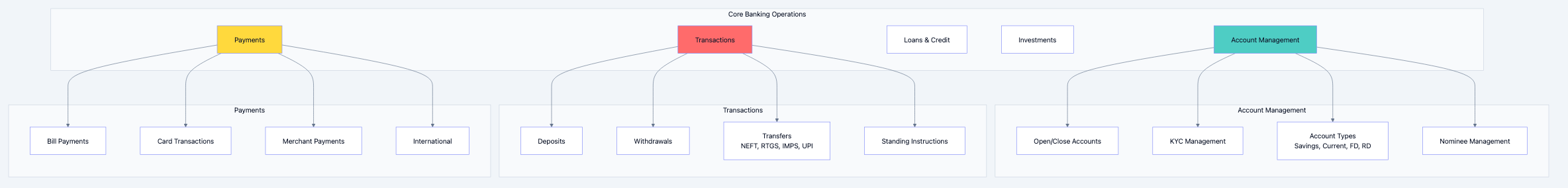

Core Banking Operations

Before designing anything, understand what a bank actually does:

Banking database diagram 1

Scale Parameters

| Metric | Value | Implication |

|---|---|---|

| Total Customers | 80+ million | Massive user table |

| Daily Transactions | 10+ million | ~115 TPS average, 1000+ TPS peak |

| Accounts | 100+ million | Multiple accounts per customer |

| Branches | 6000+ | Geographic distribution |

| ATMs | 18000+ | Real-time sync required |

| Digital Users | 50+ million | Mobile/Web scale |

| Data Retention | 10+ years | Regulatory requirement |

Regulatory Requirements

Banking databases must comply with:

- RBI Guidelines: Transaction logs, audit trails, data retention

- PCI-DSS: Card data security

- Data Localization: Customer data must stay in India

- Right to Audit: Regulators can demand any data anytime

- Disaster Recovery: RPO near-zero, RTO < 4 hours

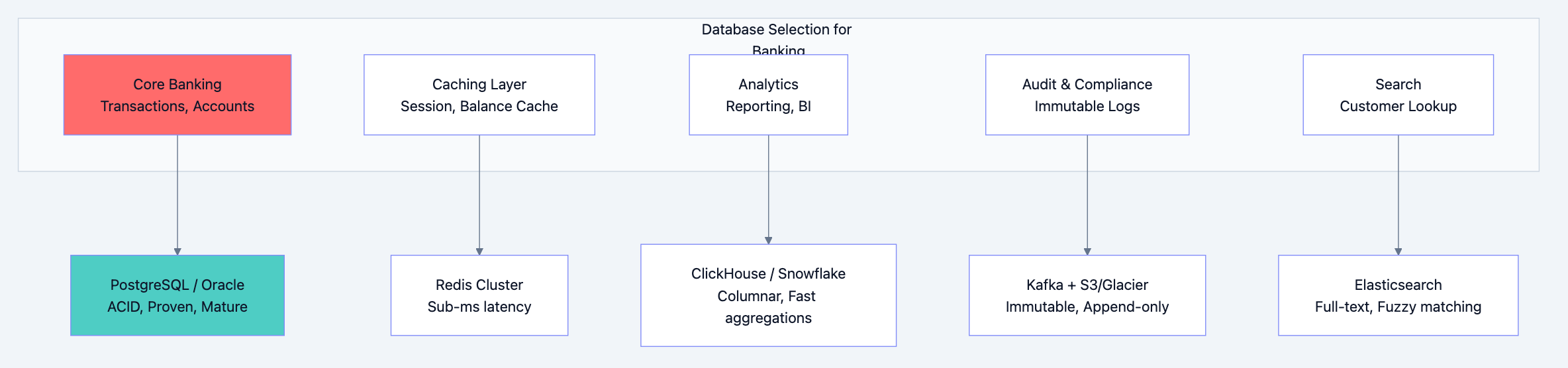

Part 2: Database Technology Choices

The Primary Database: Why PostgreSQL (or Oracle)

For the core banking system, you need a rock-solid ACID-compliant relational database.

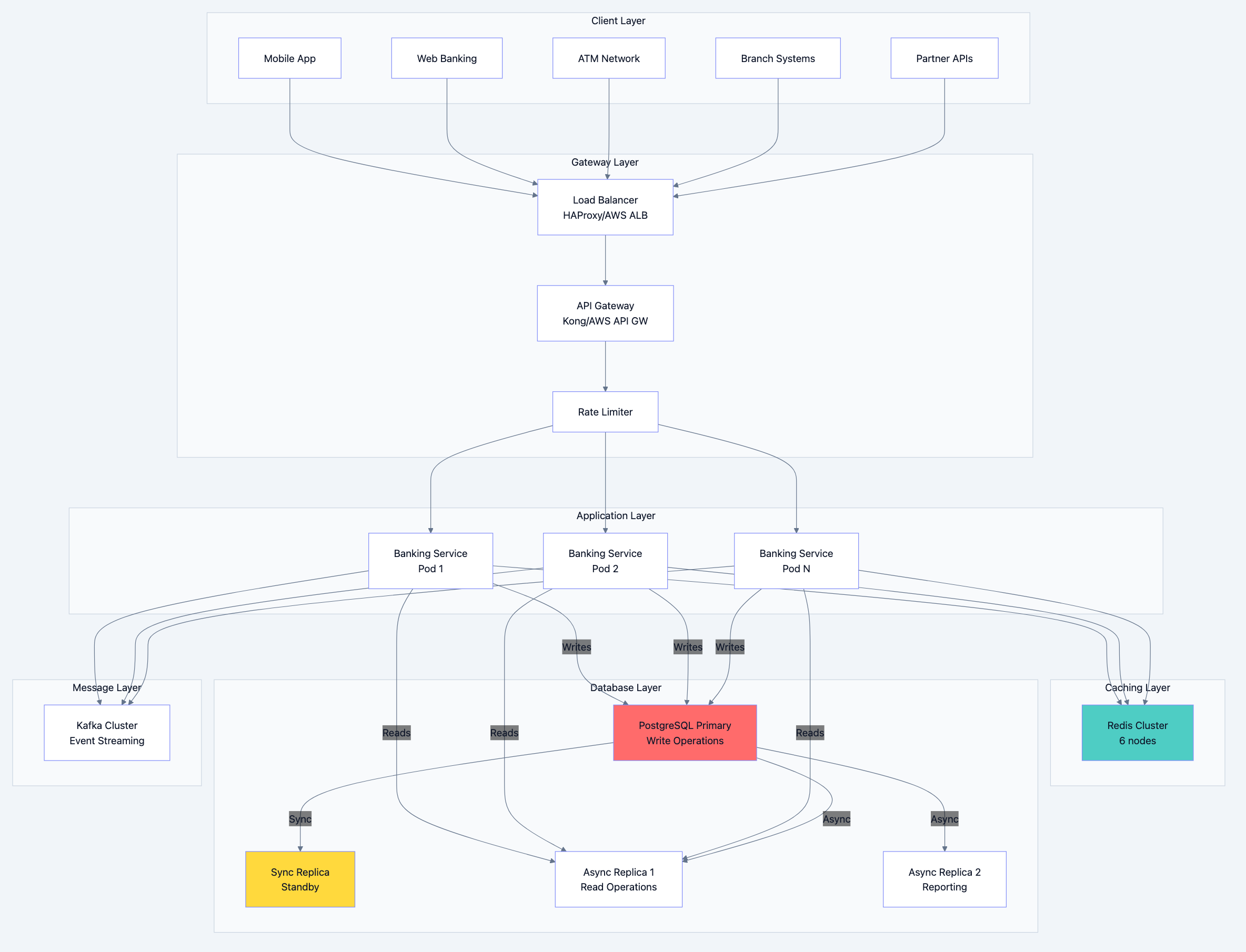

Banking database diagram 2

Why PostgreSQL for Core Banking:

- Proven ACID Compliance: Decades of battle-testing

- Serializable Isolation: Prevents all anomalies

- Row-Level Locking: High concurrency without blocking

- Point-in-Time Recovery: Restore to any second

- Logical Replication: Zero-downtime migrations

- Extensions: PostGIS for branch locations, pg_partman for partitioning

Why NOT NoSQL for Transactions:

- Eventual consistency is unacceptable for money

- Complex joins needed (account + customer + branch)

- ACID transactions across multiple tables required

- Regulatory audits expect relational structure

The Supporting Cast

| Use Case | Technology | Why |

|---|---|---|

| Session/Cache | Redis Cluster | Sub-ms reads, atomic operations |

| Message Queue | Apache Kafka | Durable, ordered, replayable |

| Analytics | ClickHouse | Columnar, fast aggregations |

| Search | Elasticsearch | Customer name search, fuzzy matching |

| Document Store | MongoDB | KYC documents, unstructured data |

| Time-Series | TimescaleDB | Transaction metrics, monitoring |

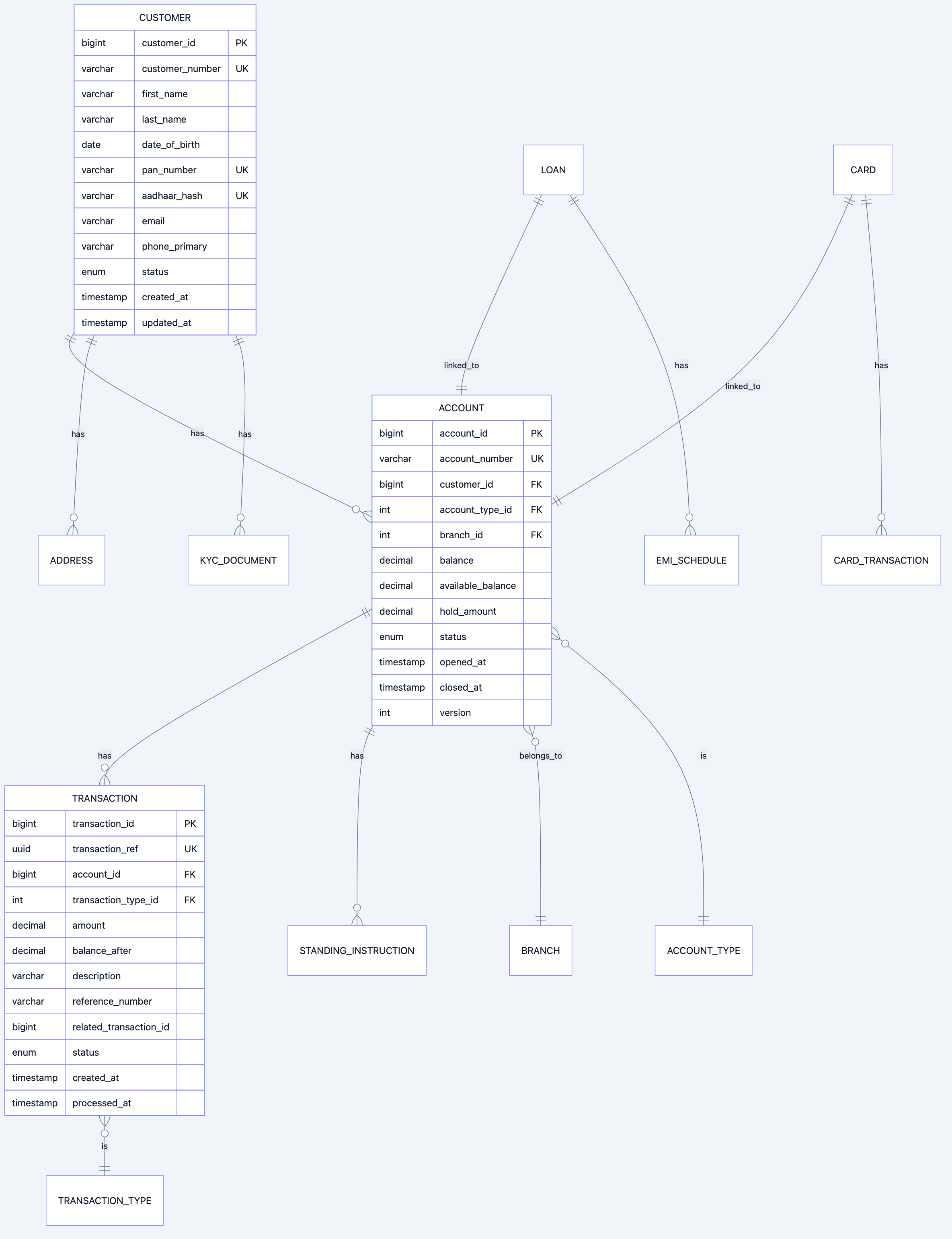

Part 3: Schema Design

Core Entities

Banking database diagram 3

Detailed Schema with Partitioning

sql-- ============================================ -- CUSTOMER TABLE -- ============================================ -- Customers are rarely updated, frequently read -- Partition by customer_id range for even distribution CREATE TABLE customers ( customer_id BIGSERIAL PRIMARY KEY, customer_number VARCHAR(20) NOT NULL UNIQUE, -- Personal Information first_name VARCHAR(100) NOT NULL, middle_name VARCHAR(100), last_name VARCHAR(100) NOT NULL, date_of_birth DATE NOT NULL, gender CHAR(1) CHECK (gender IN ('M', 'F', 'O')), -- Identity (Hashed for security) pan_number VARCHAR(10) UNIQUE, -- Encrypted at rest aadhaar_hash VARCHAR(64) UNIQUE, -- Only hash stored -- Contact email VARCHAR(255), phone_primary VARCHAR(15) NOT NULL, phone_secondary VARCHAR(15), -- Status status VARCHAR(20) NOT NULL DEFAULT 'ACTIVE' CHECK (status IN ('PENDING_KYC', 'ACTIVE', 'SUSPENDED', 'CLOSED')), kyc_status VARCHAR(20) NOT NULL DEFAULT 'PENDING' CHECK (kyc_status IN ('PENDING', 'VERIFIED', 'EXPIRED', 'REJECTED')), kyc_verified_at TIMESTAMP, -- Risk Classification risk_category VARCHAR(20) DEFAULT 'NORMAL' CHECK (risk_category IN ('LOW', 'NORMAL', 'HIGH', 'PEP')), -- Audit created_at TIMESTAMP NOT NULL DEFAULT CURRENT_TIMESTAMP, updated_at TIMESTAMP NOT NULL DEFAULT CURRENT_TIMESTAMP, created_by BIGINT NOT NULL, updated_by BIGINT NOT NULL, -- Soft delete is_deleted BOOLEAN NOT NULL DEFAULT FALSE, deleted_at TIMESTAMP ); -- Indexes for common queries CREATE INDEX idx_customers_phone ON customers(phone_primary); CREATE INDEX idx_customers_email ON customers(email) WHERE email IS NOT NULL; CREATE INDEX idx_customers_status ON customers(status) WHERE status != 'CLOSED'; CREATE INDEX idx_customers_name ON customers(last_name, first_name); -- ============================================ -- ACCOUNT TABLE -- ============================================ -- Critical table - balance updates are hot path -- Version column for optimistic locking CREATE TABLE accounts ( account_id BIGSERIAL PRIMARY KEY, account_number VARCHAR(20) NOT NULL UNIQUE, -- Relationships customer_id BIGINT NOT NULL REFERENCES customers(customer_id), account_type_id INT NOT NULL REFERENCES account_types(account_type_id), branch_id INT NOT NULL REFERENCES branches(branch_id), -- Balance Information balance DECIMAL(18,2) NOT NULL DEFAULT 0.00 CHECK (balance >= 0 OR account_type_id IN (SELECT account_type_id FROM account_types WHERE allows_overdraft = TRUE)), available_balance DECIMAL(18,2) NOT NULL DEFAULT 0.00, hold_amount DECIMAL(18,2) NOT NULL DEFAULT 0.00 CHECK (hold_amount >= 0), minimum_balance DECIMAL(18,2) NOT NULL DEFAULT 0.00, -- Overdraft (for current accounts) overdraft_limit DECIMAL(18,2) DEFAULT 0.00, overdraft_used DECIMAL(18,2) DEFAULT 0.00, -- Interest interest_rate DECIMAL(5,4), -- e.g., 0.0350 = 3.5% last_interest_calc DATE, accrued_interest DECIMAL(18,2) DEFAULT 0.00, -- Status status VARCHAR(20) NOT NULL DEFAULT 'ACTIVE' CHECK (status IN ('PENDING', 'ACTIVE', 'DORMANT', 'FROZEN', 'CLOSED')), dormant_since DATE, -- Limits daily_withdrawal_limit DECIMAL(18,2) DEFAULT 100000.00, daily_transfer_limit DECIMAL(18,2) DEFAULT 500000.00, -- Dates opened_at TIMESTAMP NOT NULL DEFAULT CURRENT_TIMESTAMP, closed_at TIMESTAMP, -- Optimistic Locking version INT NOT NULL DEFAULT 1, -- Audit created_at TIMESTAMP NOT NULL DEFAULT CURRENT_TIMESTAMP, updated_at TIMESTAMP NOT NULL DEFAULT CURRENT_TIMESTAMP, -- Constraints CONSTRAINT chk_available_balance CHECK (available_balance = balance - hold_amount + COALESCE(overdraft_limit, 0) - COALESCE(overdraft_used, 0)) ); -- Critical indexes CREATE INDEX idx_accounts_customer ON accounts(customer_id); CREATE INDEX idx_accounts_branch ON accounts(branch_id); CREATE INDEX idx_accounts_status ON accounts(status) WHERE status = 'ACTIVE'; CREATE UNIQUE INDEX idx_accounts_number_active ON accounts(account_number) WHERE status != 'CLOSED'; -- ============================================ -- TRANSACTION TABLE (PARTITIONED) -- ============================================ -- This is the highest volume table -- Partitioned by date for performance and archival -- Write-heavy, read for recent transactions CREATE TABLE transactions ( transaction_id BIGSERIAL, transaction_ref UUID NOT NULL DEFAULT gen_random_uuid(), -- Account Reference account_id BIGINT NOT NULL, -- Transaction Details transaction_type_id INT NOT NULL REFERENCES transaction_types(transaction_type_id), amount DECIMAL(18,2) NOT NULL CHECK (amount > 0), -- Direction direction CHAR(1) NOT NULL CHECK (direction IN ('C', 'D')), -- Credit/Debit -- Running Balance (Snapshot) balance_before DECIMAL(18,2) NOT NULL, balance_after DECIMAL(18,2) NOT NULL, -- Description description VARCHAR(500), narration VARCHAR(100), -- Short narration for statement -- Reference reference_number VARCHAR(50), -- UTR, RRN, etc. related_txn_id BIGINT, -- For transfer pairs -- Channel channel VARCHAR(20) NOT NULL CHECK (channel IN ('BRANCH', 'ATM', 'NETBANKING', 'MOBILE', 'UPI', 'NEFT', 'RTGS', 'IMPS', 'API')), -- Status status VARCHAR(20) NOT NULL DEFAULT 'PENDING' CHECK (status IN ('PENDING', 'PROCESSING', 'COMPLETED', 'FAILED', 'REVERSED')), -- Timestamps created_at TIMESTAMP NOT NULL DEFAULT CURRENT_TIMESTAMP, processed_at TIMESTAMP, -- Audit initiated_by BIGINT, -- User/System ID approved_by BIGINT, -- For high-value transactions ip_address INET, device_id VARCHAR(100), PRIMARY KEY (transaction_id, created_at) ) PARTITION BY RANGE (created_at); -- Create monthly partitions CREATE TABLE transactions_2024_01 PARTITION OF transactions FOR VALUES FROM ('2024-01-01') TO ('2024-02-01'); CREATE TABLE transactions_2024_02 PARTITION OF transactions FOR VALUES FROM ('2024-02-01') TO ('2024-03-01'); -- ... continue for all months -- Create future partitions automatically (pg_partman or cron job) -- Indexes on partitioned table CREATE INDEX idx_txn_account_date ON transactions(account_id, created_at DESC); CREATE INDEX idx_txn_ref ON transactions(transaction_ref); CREATE INDEX idx_txn_reference_number ON transactions(reference_number) WHERE reference_number IS NOT NULL; CREATE INDEX idx_txn_status ON transactions(status) WHERE status IN ('PENDING', 'PROCESSING'); -- ============================================ -- TRANSACTION LEDGER (DOUBLE-ENTRY) -- ============================================ -- Every transaction has two entries: debit and credit -- This ensures books always balance CREATE TABLE ledger_entries ( entry_id BIGSERIAL, transaction_id BIGINT NOT NULL, -- Account account_id BIGINT NOT NULL, -- Entry Type entry_type CHAR(1) NOT NULL CHECK (entry_type IN ('D', 'C')), -- Debit/Credit -- Amount amount DECIMAL(18,2) NOT NULL CHECK (amount > 0), -- GL Account for reconciliation gl_account_id INT NOT NULL REFERENCES gl_accounts(gl_account_id), -- Timestamp created_at TIMESTAMP NOT NULL DEFAULT CURRENT_TIMESTAMP, PRIMARY KEY (entry_id, created_at) ) PARTITION BY RANGE (created_at); -- ============================================ -- AUDIT LOG TABLE -- ============================================ -- Immutable audit trail for all changes -- Required by regulators CREATE TABLE audit_logs ( audit_id BIGSERIAL, -- What changed table_name VARCHAR(100) NOT NULL, record_id BIGINT NOT NULL, operation CHAR(1) NOT NULL CHECK (operation IN ('I', 'U', 'D')), -- Change details old_values JSONB, new_values JSONB, changed_fields TEXT[], -- Who changed user_id BIGINT, user_type VARCHAR(20), -- CUSTOMER, EMPLOYEE, SYSTEM -- When/Where created_at TIMESTAMP NOT NULL DEFAULT CURRENT_TIMESTAMP, ip_address INET, user_agent TEXT, session_id VARCHAR(100), PRIMARY KEY (audit_id, created_at) ) PARTITION BY RANGE (created_at); -- Immutable - no updates or deletes allowed REVOKE UPDATE, DELETE ON audit_logs FROM PUBLIC;

Supporting Tables

sql-- ============================================ -- ACCOUNT TYPES -- ============================================ CREATE TABLE account_types ( account_type_id SERIAL PRIMARY KEY, code VARCHAR(20) NOT NULL UNIQUE, name VARCHAR(100) NOT NULL, category VARCHAR(20) NOT NULL CHECK (category IN ('SAVINGS', 'CURRENT', 'FD', 'RD', 'LOAN')), -- Rules minimum_balance DECIMAL(18,2) NOT NULL DEFAULT 0, allows_overdraft BOOLEAN NOT NULL DEFAULT FALSE, interest_rate DECIMAL(5,4), -- Limits max_daily_withdrawal DECIMAL(18,2), max_daily_transfer DECIMAL(18,2), is_active BOOLEAN NOT NULL DEFAULT TRUE ); INSERT INTO account_types (code, name, category, minimum_balance, interest_rate) VALUES ('SAV_REGULAR', 'Regular Savings', 'SAVINGS', 10000.00, 0.0300), ('SAV_SALARY', 'Salary Account', 'SAVINGS', 0.00, 0.0300), ('SAV_SENIOR', 'Senior Citizen Savings', 'SAVINGS', 10000.00, 0.0350), ('CUR_REGULAR', 'Current Account', 'CURRENT', 25000.00, 0.0000), ('CUR_PREMIUM', 'Premium Current', 'CURRENT', 100000.00, 0.0000); -- ============================================ -- BRANCHES -- ============================================ CREATE TABLE branches ( branch_id SERIAL PRIMARY KEY, branch_code VARCHAR(10) NOT NULL UNIQUE, -- IFSC suffix ifsc_code VARCHAR(11) NOT NULL UNIQUE, name VARCHAR(200) NOT NULL, address TEXT NOT NULL, city VARCHAR(100) NOT NULL, state VARCHAR(100) NOT NULL, pincode VARCHAR(6) NOT NULL, -- Contact phone VARCHAR(15), email VARCHAR(255), -- Manager manager_employee_id BIGINT, -- Geo location (for nearby branch search) latitude DECIMAL(10, 8), longitude DECIMAL(11, 8), -- Status is_active BOOLEAN NOT NULL DEFAULT TRUE, -- Audit created_at TIMESTAMP NOT NULL DEFAULT CURRENT_TIMESTAMP ); CREATE INDEX idx_branches_city ON branches(city); CREATE INDEX idx_branches_geo ON branches USING GIST ( ST_SetSRID(ST_MakePoint(longitude, latitude), 4326) ); -- Requires PostGIS -- ============================================ -- TRANSACTION TYPES -- ============================================ CREATE TABLE transaction_types ( transaction_type_id SERIAL PRIMARY KEY, code VARCHAR(30) NOT NULL UNIQUE, name VARCHAR(100) NOT NULL, category VARCHAR(30) NOT NULL, -- Rules requires_approval BOOLEAN NOT NULL DEFAULT FALSE, approval_threshold DECIMAL(18,2), -- Amount above which approval needed -- GL Mapping debit_gl_account INT, credit_gl_account INT, is_active BOOLEAN NOT NULL DEFAULT TRUE ); INSERT INTO transaction_types (code, name, category, requires_approval, approval_threshold) VALUES ('CASH_DEPOSIT', 'Cash Deposit', 'DEPOSIT', FALSE, NULL), ('CASH_WITHDRAWAL', 'Cash Withdrawal', 'WITHDRAWAL', TRUE, 200000.00), ('NEFT_CREDIT', 'NEFT Credit', 'TRANSFER_IN', FALSE, NULL), ('NEFT_DEBIT', 'NEFT Debit', 'TRANSFER_OUT', TRUE, 1000000.00), ('RTGS_CREDIT', 'RTGS Credit', 'TRANSFER_IN', FALSE, NULL), ('RTGS_DEBIT', 'RTGS Debit', 'TRANSFER_OUT', TRUE, 500000.00), ('IMPS_CREDIT', 'IMPS Credit', 'TRANSFER_IN', FALSE, NULL), ('IMPS_DEBIT', 'IMPS Debit', 'TRANSFER_OUT', FALSE, NULL), ('UPI_CREDIT', 'UPI Credit', 'TRANSFER_IN', FALSE, NULL), ('UPI_DEBIT', 'UPI Debit', 'TRANSFER_OUT', FALSE, NULL), ('ATM_WITHDRAWAL', 'ATM Withdrawal', 'WITHDRAWAL', FALSE, NULL), ('POS_PURCHASE', 'POS Purchase', 'PURCHASE', FALSE, NULL), ('INTEREST_CREDIT', 'Interest Credit', 'INTEREST', FALSE, NULL), ('CHARGE_DEBIT', 'Service Charge', 'CHARGE', FALSE, NULL);

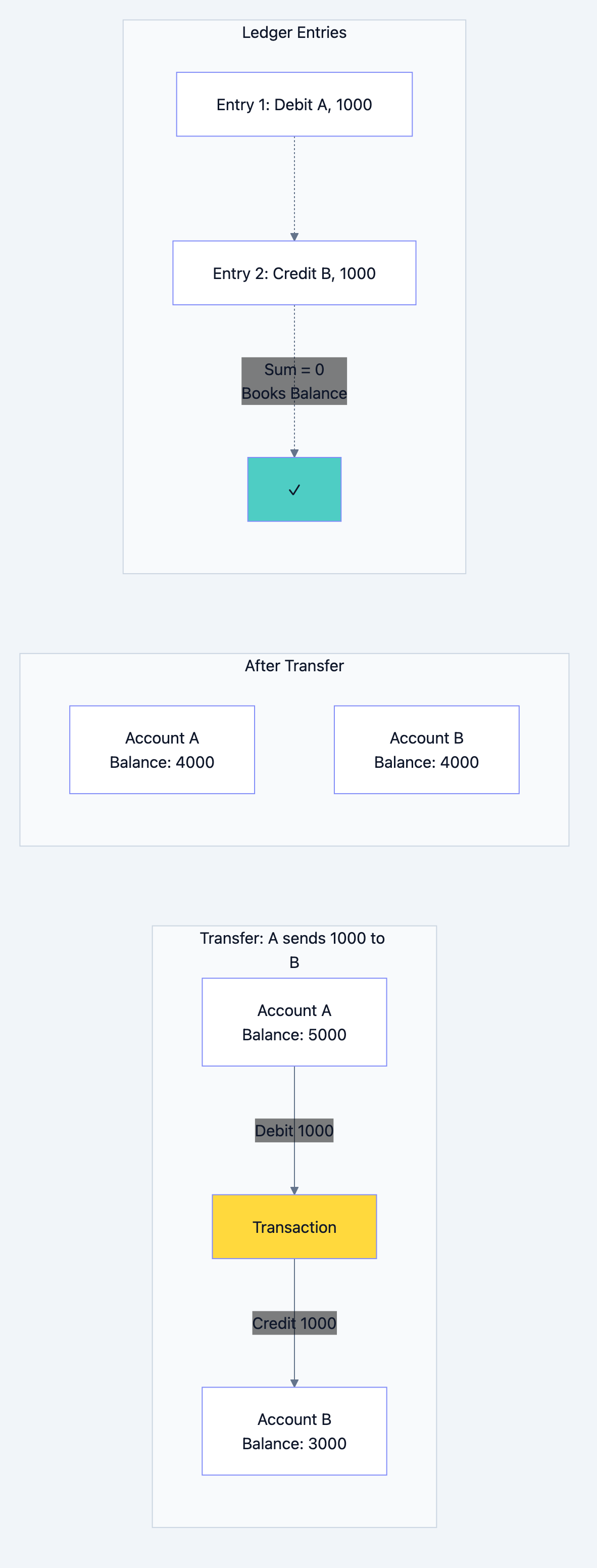

Part 4: Transaction Management

The Golden Rule: Double-Entry Bookkeeping

Every money movement has two sides. Money doesn't appear or disappear—it moves.

Banking database diagram 4

Transaction Processing with Proper Isolation

go// transaction_service.go package banking import ( "context" "database/sql" "fmt" "time" "github.com/google/uuid" "github.com/jackc/pgx/v5" "github.com/jackc/pgx/v5/pgxpool" ) type TransactionService struct { db *pgxpool.Pool cache *RedisClient kafka *KafkaProducer } type TransferRequest struct { FromAccountID int64 ToAccountID int64 Amount float64 Description string Channel string IdempotencyKey string // Prevents duplicate processing InitiatedBy int64 IPAddress string } type TransferResult struct { TransactionRef string FromBalance float64 ToBalance float64 ProcessedAt time.Time } // Transfer executes a fund transfer with full ACID compliance func (s *TransactionService) Transfer(ctx context.Context, req TransferRequest) (*TransferResult, error) { // 1. Idempotency Check - Prevent duplicate transactions existing, err := s.checkIdempotency(ctx, req.IdempotencyKey) if err != nil { return nil, fmt.Errorf("idempotency check failed: %w", err) } if existing != nil { return existing, nil // Return cached result } // 2. Validate request if err := s.validateTransfer(ctx, req); err != nil { return nil, err } // 3. Generate transaction reference txnRef := uuid.New().String() // 4. Execute transfer in serializable transaction var result *TransferResult err = s.executeInTransaction(ctx, pgx.Serializable, func(tx pgx.Tx) error { // 4a. Lock accounts in consistent order (prevent deadlock) fromAccount, toAccount, err := s.lockAccountsForTransfer(ctx, tx, req.FromAccountID, req.ToAccountID) if err != nil { return err } // 4b. Validate balances if fromAccount.AvailableBalance < req.Amount { return ErrInsufficientBalance } // 4c. Check daily limits if err := s.checkDailyLimits(ctx, tx, fromAccount, req.Amount); err != nil { return err } // 4d. Debit from source account fromBalanceAfter, err := s.debitAccount(ctx, tx, fromAccount, req.Amount, txnRef) if err != nil { return err } // 4e. Credit to destination account toBalanceAfter, err := s.creditAccount(ctx, tx, toAccount, req.Amount, txnRef) if err != nil { return err } // 4f. Create transaction records if err := s.createTransactionRecords(ctx, tx, req, txnRef, fromAccount, toAccount, fromBalanceAfter, toBalanceAfter); err != nil { return err } // 4g. Create ledger entries (double-entry) if err := s.createLedgerEntries(ctx, tx, req, txnRef, fromAccount.AccountID, toAccount.AccountID); err != nil { return err } result = &TransferResult{ TransactionRef: txnRef, FromBalance: fromBalanceAfter, ToBalance: toBalanceAfter, ProcessedAt: time.Now(), } return nil }) if err != nil { // Log failure for monitoring s.logTransactionFailure(ctx, req, txnRef, err) return nil, err } // 5. Post-transaction actions (outside main transaction) go s.postTransactionActions(context.Background(), req, result) // 6. Cache idempotency result s.cacheIdempotencyResult(ctx, req.IdempotencyKey, result) return result, nil } // lockAccountsForTransfer locks both accounts in consistent order to prevent deadlocks func (s *TransactionService) lockAccountsForTransfer(ctx context.Context, tx pgx.Tx, fromID, toID int64) (*Account, *Account, error) { // CRITICAL: Always lock in ascending ID order to prevent deadlocks var firstID, secondID int64 if fromID < toID { firstID, secondID = fromID, toID } else { firstID, secondID = toID, fromID } // Lock first account firstAccount, err := s.lockAccountForUpdate(ctx, tx, firstID) if err != nil { return nil, nil, err } // Lock second account secondAccount, err := s.lockAccountForUpdate(ctx, tx, secondID) if err != nil { return nil, nil, err } // Return in from/to order if fromID < toID { return firstAccount, secondAccount, nil } return secondAccount, firstAccount, nil } // lockAccountForUpdate acquires a row-level lock with optimistic locking check func (s *TransactionService) lockAccountForUpdate(ctx context.Context, tx pgx.Tx, accountID int64) (*Account, error) { query := ` SELECT account_id, account_number, customer_id, balance, available_balance, hold_amount, status, version, daily_withdrawal_limit, daily_transfer_limit FROM accounts WHERE account_id = $1 FOR UPDATE NOWAIT ` // NOWAIT: Fail immediately if lock not available (don't wait) // This prevents long lock chains var account Account err := tx.QueryRow(ctx, query, accountID).Scan( &account.AccountID, &account.AccountNumber, &account.CustomerID, &account.Balance, &account.AvailableBalance, &account.HoldAmount, &account.Status, &account.Version, &account.DailyWithdrawalLimit, &account.DailyTransferLimit, ) if err == pgx.ErrNoRows { return nil, ErrAccountNotFound } if err != nil { // Check if it's a lock timeout if isLockError(err) { return nil, ErrAccountLocked } return nil, err } if account.Status != "ACTIVE" { return nil, ErrAccountNotActive } return &account, nil } // debitAccount decreases balance with optimistic locking func (s *TransactionService) debitAccount(ctx context.Context, tx pgx.Tx, account *Account, amount float64, txnRef string) (float64, error) { newBalance := account.Balance - amount newAvailable := account.AvailableBalance - amount query := ` UPDATE accounts SET balance = $1, available_balance = $2, version = version + 1, updated_at = CURRENT_TIMESTAMP WHERE account_id = $3 AND version = $4 RETURNING balance ` var balanceAfter float64 err := tx.QueryRow(ctx, query, newBalance, newAvailable, account.AccountID, account.Version).Scan(&balanceAfter) if err == pgx.ErrNoRows { // Version mismatch - concurrent modification return 0, ErrConcurrentModification } if err != nil { return 0, err } return balanceAfter, nil } // creditAccount increases balance func (s *TransactionService) creditAccount(ctx context.Context, tx pgx.Tx, account *Account, amount float64, txnRef string) (float64, error) { newBalance := account.Balance + amount newAvailable := account.AvailableBalance + amount query := ` UPDATE accounts SET balance = $1, available_balance = $2, version = version + 1, updated_at = CURRENT_TIMESTAMP WHERE account_id = $3 AND version = $4 RETURNING balance ` var balanceAfter float64 err := tx.QueryRow(ctx, query, newBalance, newAvailable, account.AccountID, account.Version).Scan(&balanceAfter) if err == pgx.ErrNoRows { return 0, ErrConcurrentModification } if err != nil { return 0, err } return balanceAfter, nil } // createTransactionRecords creates the transaction pair func (s *TransactionService) createTransactionRecords( ctx context.Context, tx pgx.Tx, req TransferRequest, txnRef string, fromAccount, toAccount *Account, fromBalanceAfter, toBalanceAfter float64, ) error { // Create debit transaction debitQuery := ` INSERT INTO transactions ( transaction_ref, account_id, transaction_type_id, amount, direction, balance_before, balance_after, description, channel, status, processed_at, initiated_by, ip_address ) VALUES ( $1, $2, (SELECT transaction_type_id FROM transaction_types WHERE code = 'TRANSFER_DEBIT'), $3, 'D', $4, $5, $6, $7, 'COMPLETED', CURRENT_TIMESTAMP, $8, $9 ) RETURNING transaction_id ` var debitTxnID int64 err := tx.QueryRow(ctx, debitQuery, txnRef, fromAccount.AccountID, req.Amount, fromAccount.Balance, fromBalanceAfter, req.Description, req.Channel, req.InitiatedBy, req.IPAddress, ).Scan(&debitTxnID) if err != nil { return err } // Create credit transaction creditQuery := ` INSERT INTO transactions ( transaction_ref, account_id, transaction_type_id, amount, direction, balance_before, balance_after, description, related_txn_id, channel, status, processed_at, initiated_by, ip_address ) VALUES ( $1, $2, (SELECT transaction_type_id FROM transaction_types WHERE code = 'TRANSFER_CREDIT'), $3, 'C', $4, $5, $6, $7, $8, 'COMPLETED', CURRENT_TIMESTAMP, $9, $10 ) ` _, err = tx.Exec(ctx, creditQuery, txnRef, toAccount.AccountID, req.Amount, toAccount.Balance, toBalanceAfter, req.Description, debitTxnID, req.Channel, req.InitiatedBy, req.IPAddress, ) return err } // createLedgerEntries creates double-entry bookkeeping records func (s *TransactionService) createLedgerEntries( ctx context.Context, tx pgx.Tx, req TransferRequest, txnRef string, fromAccountID, toAccountID int64, ) error { query := ` INSERT INTO ledger_entries ( transaction_id, account_id, entry_type, amount, gl_account_id ) SELECT t.transaction_id, $1, 'D', $2, (SELECT gl_account_id FROM gl_accounts WHERE code = 'CUSTOMER_DEPOSITS') FROM transactions t WHERE t.transaction_ref = $3 AND t.direction = 'D' UNION ALL SELECT t.transaction_id, $4, 'C', $2, (SELECT gl_account_id FROM gl_accounts WHERE code = 'CUSTOMER_DEPOSITS') FROM transactions t WHERE t.transaction_ref = $3 AND t.direction = 'C' ` _, err := tx.Exec(ctx, query, fromAccountID, req.Amount, txnRef, toAccountID) return err } // executeInTransaction executes a function within a database transaction func (s *TransactionService) executeInTransaction( ctx context.Context, isolationLevel pgx.TxIsoLevel, fn func(tx pgx.Tx) error, ) error { tx, err := s.db.BeginTx(ctx, pgx.TxOptions{ IsoLevel: isolationLevel, }) if err != nil { return err } defer func() { if p := recover(); p != nil { tx.Rollback(ctx) panic(p) } }() if err := fn(tx); err != nil { tx.Rollback(ctx) return err } return tx.Commit(ctx) } // postTransactionActions handles non-critical post-transaction work func (s *TransactionService) postTransactionActions(ctx context.Context, req TransferRequest, result *TransferResult) { // Publish to Kafka for downstream systems s.kafka.Publish("transactions", TransactionEvent{ TransactionRef: result.TransactionRef, FromAccount: req.FromAccountID, ToAccount: req.ToAccountID, Amount: req.Amount, Timestamp: result.ProcessedAt, }) // Invalidate balance cache s.cache.Del(fmt.Sprintf("balance:%d", req.FromAccountID)) s.cache.Del(fmt.Sprintf("balance:%d", req.ToAccountID)) // Send notifications (async) s.sendTransactionNotifications(ctx, req, result) } // checkDailyLimits verifies transaction doesn't exceed daily limits func (s *TransactionService) checkDailyLimits(ctx context.Context, tx pgx.Tx, account *Account, amount float64) error { query := ` SELECT COALESCE(SUM(amount), 0) FROM transactions WHERE account_id = $1 AND direction = 'D' AND status = 'COMPLETED' AND created_at >= CURRENT_DATE ` var todayTotal float64 err := tx.QueryRow(ctx, query, account.AccountID).Scan(&todayTotal) if err != nil { return err } if todayTotal+amount > account.DailyTransferLimit { return ErrDailyLimitExceeded } return nil }

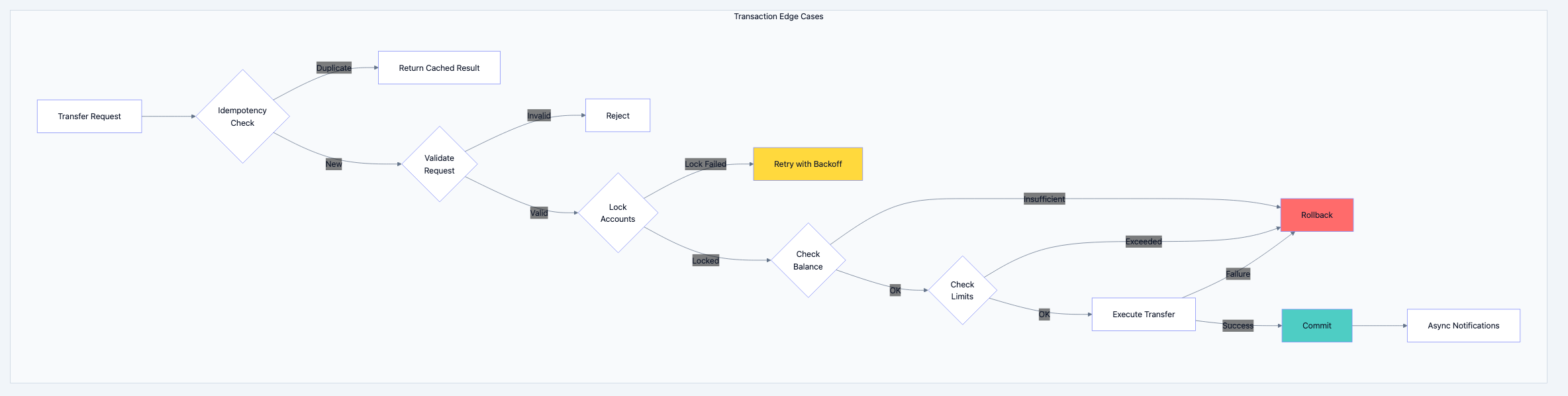

Handling Edge Cases

Banking database diagram 5

Idempotency: Preventing Double Charges

go// idempotency.go // Critical for preventing duplicate transactions when clients retry type IdempotencyStore struct { redis *redis.Client db *pgxpool.Pool } // CheckAndLock atomically checks if request was processed and locks if new func (s *IdempotencyStore) CheckAndLock(ctx context.Context, key string, ttl time.Duration) (*TransferResult, bool, error) { // Use Redis for fast check + lock script := ` local existing = redis.call('GET', KEYS[1]) if existing then return existing end redis.call('SET', KEYS[1], 'PROCESSING', 'NX', 'EX', ARGV[1]) return nil ` result, err := s.redis.Eval(ctx, script, []string{key}, int(ttl.Seconds())).Result() if err != nil && err != redis.Nil { return nil, false, err } if result != nil && result != "PROCESSING" { // Already processed, return cached result var cached TransferResult if err := json.Unmarshal([]byte(result.(string)), &cached); err != nil { return nil, false, err } return &cached, false, nil } // New request, lock acquired return nil, true, nil } // Store saves the result for future duplicate requests func (s *IdempotencyStore) Store(ctx context.Context, key string, result *TransferResult, ttl time.Duration) error { data, err := json.Marshal(result) if err != nil { return err } // Store in Redis (fast reads) if err := s.redis.Set(ctx, key, data, ttl).Err(); err != nil { return err } // Also persist to database (durability for long-running idempotency) query := ` INSERT INTO idempotency_keys (key, result, created_at, expires_at) VALUES ($1, $2, CURRENT_TIMESTAMP, CURRENT_TIMESTAMP + $3::interval) ON CONFLICT (key) DO UPDATE SET result = $2 ` _, err = s.db.Exec(ctx, query, key, data, ttl.String()) return err }

Part 5: Scaling Strategies

Architecture Overview

Banking database diagram 6

Read Scaling with Replicas

go// db_router.go // Routes queries to appropriate database based on operation type type DBRouter struct { primary *pgxpool.Pool readReplicas []*pgxpool.Pool current int32 } func NewDBRouter(primaryDSN string, replicaDSNs []string) (*DBRouter, error) { primary, err := pgxpool.New(context.Background(), primaryDSN) if err != nil { return nil, err } replicas := make([]*pgxpool.Pool, len(replicaDSNs)) for i, dsn := range replicaDSNs { replica, err := pgxpool.New(context.Background(), dsn) if err != nil { return nil, err } replicas[i] = replica } return &DBRouter{ primary: primary, readReplicas: replicas, }, nil } // Primary returns the primary database for writes func (r *DBRouter) Primary() *pgxpool.Pool { return r.primary } // Replica returns a read replica using round-robin func (r *DBRouter) Replica() *pgxpool.Pool { if len(r.readReplicas) == 0 { return r.primary } // Atomic round-robin idx := atomic.AddInt32(&r.current, 1) return r.readReplicas[int(idx)%len(r.readReplicas)] } // ReadAfterWrite returns primary if recent write, otherwise replica func (r *DBRouter) ReadAfterWrite(ctx context.Context, accountID int64) *pgxpool.Pool { // Check if this account had recent write key := fmt.Sprintf("recent_write:%d", accountID) if exists, _ := redisClient.Exists(ctx, key).Result(); exists > 0 { return r.primary // Read from primary to see own writes } return r.Replica() }

Connection Pooling Configuration

go// pool_config.go // Proper connection pool sizing is critical for performance func CreateDatabasePool(dsn string, config PoolConfig) (*pgxpool.Pool, error) { poolConfig, err := pgxpool.ParseConfig(dsn) if err != nil { return nil, err } // Pool sizing // Rule of thumb: (core_count * 2) + effective_spindle_count // For SSD: core_count * 2-4 poolConfig.MaxConns = 100 // Maximum connections poolConfig.MinConns = 10 // Minimum idle connections // Timeouts poolConfig.MaxConnLifetime = 1 * time.Hour // Recycle connections poolConfig.MaxConnIdleTime = 30 * time.Minute // Close idle connections poolConfig.HealthCheckPeriod = 1 * time.Minute // Check connection health // Connection timeout poolConfig.ConnConfig.ConnectTimeout = 5 * time.Second // Statement cache (prepared statements) poolConfig.ConnConfig.DefaultQueryExecMode = pgx.QueryExecModeCacheStatement return pgxpool.NewWithConfig(context.Background(), poolConfig) }

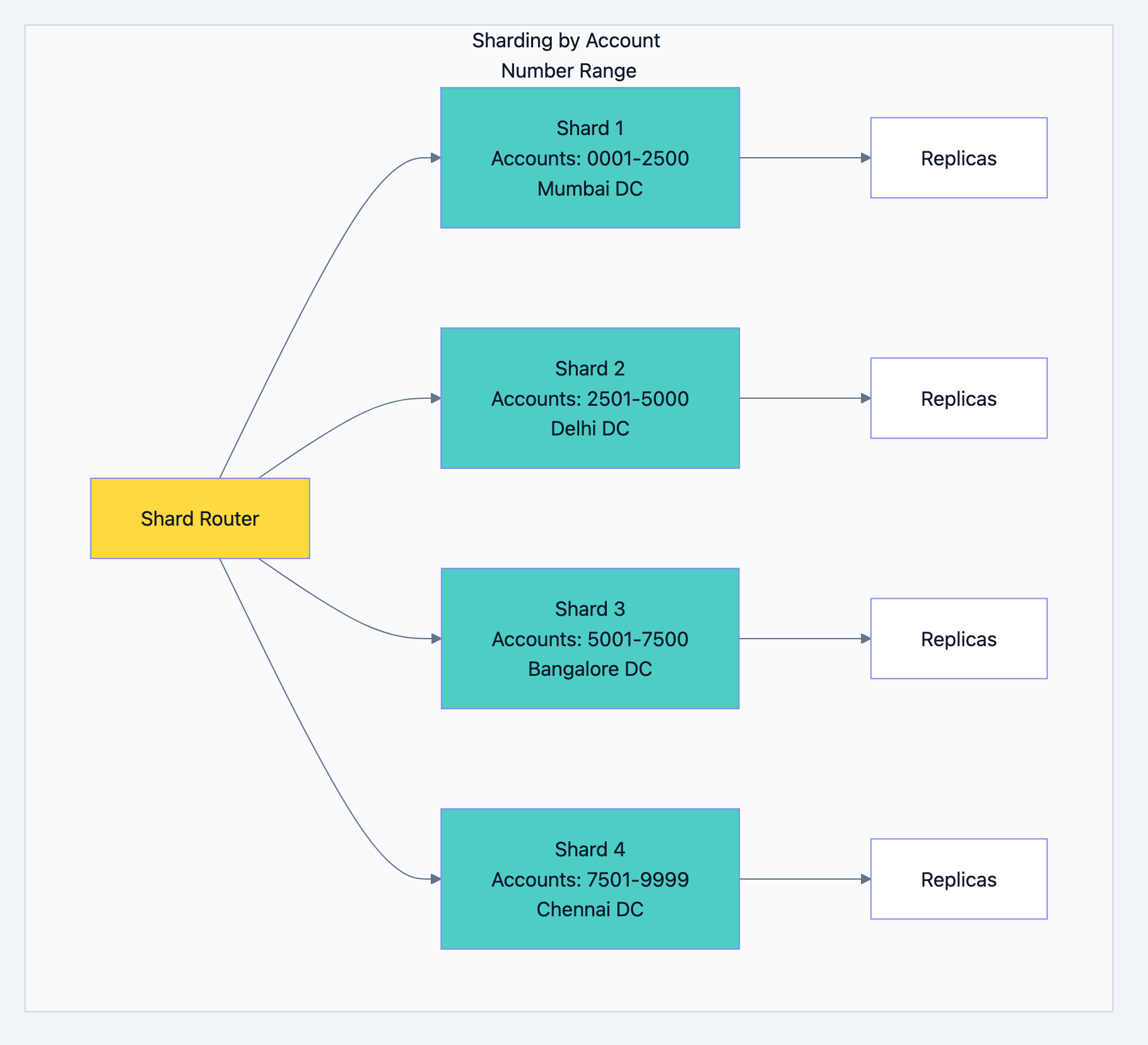

Sharding Strategy for Extreme Scale

For a bank a scale, eventually you'll need sharding. Here's the approach:

Banking database diagram 7

go// shard_router.go // Deterministic routing based on account number type ShardRouter struct { shards map[int]*pgxpool.Pool // shard_id -> connection pool config ShardConfig } type ShardConfig struct { Ranges []ShardRange } type ShardRange struct { ShardID int StartAcc string EndAcc string DSN string } // GetShardForAccount determines which shard an account belongs to func (r *ShardRouter) GetShardForAccount(accountNumber string) (*pgxpool.Pool, error) { for _, rang := range r.config.Ranges { if accountNumber >= rang.StartAcc && accountNumber <= rang.EndAcc { return r.shards[rang.ShardID], nil } } return nil, fmt.Errorf("no shard found for account: %s", accountNumber) } // Cross-shard transfer requires distributed transaction func (r *ShardRouter) CrossShardTransfer(ctx context.Context, req TransferRequest) (*TransferResult, error) { fromShard, _ := r.GetShardForAccount(req.FromAccountNumber) toShard, _ := r.GetShardForAccount(req.ToAccountNumber) if fromShard == toShard { // Same shard - normal transaction return r.singleShardTransfer(ctx, fromShard, req) } // Different shards - use Saga pattern return r.crossShardSagaTransfer(ctx, fromShard, toShard, req) } // Saga pattern for cross-shard transfers func (r *ShardRouter) crossShardSagaTransfer(ctx context.Context, fromShard, toShard *pgxpool.Pool, req TransferRequest) (*TransferResult, error) { txnRef := uuid.New().String() // Step 1: Hold funds in source account holdID, err := r.holdFunds(ctx, fromShard, req.FromAccountNumber, req.Amount, txnRef) if err != nil { return nil, err } // Step 2: Credit destination account err = r.creditAccount(ctx, toShard, req.ToAccountNumber, req.Amount, txnRef) if err != nil { // Compensate: Release hold r.releaseHold(ctx, fromShard, holdID) return nil, err } // Step 3: Confirm debit (release hold and deduct) err = r.confirmDebit(ctx, fromShard, holdID, req.FromAccountNumber, req.Amount, txnRef) if err != nil { // Compensate: Reverse credit r.reverseCredit(ctx, toShard, req.ToAccountNumber, req.Amount, txnRef) r.releaseHold(ctx, fromShard, holdID) return nil, err } return &TransferResult{ TransactionRef: txnRef, ProcessedAt: time.Now(), }, nil }

Caching Strategy

go// cache_strategy.go // Multi-level caching for banking operations type BankingCache struct { local *sync.Map // L1: In-process cache (hot data) redis *redis.ClusterClient // L2: Distributed cache } // GetBalance with multi-level caching func (c *BankingCache) GetBalance(ctx context.Context, accountID int64) (*BalanceInfo, error) { key := fmt.Sprintf("balance:%d", accountID) // L1: Check local cache (microseconds) if cached, ok := c.local.Load(key); ok { entry := cached.(*CacheEntry) if time.Since(entry.CachedAt) < 100*time.Millisecond { return entry.Data.(*BalanceInfo), nil } } // L2: Check Redis (milliseconds) data, err := c.redis.Get(ctx, key).Bytes() if err == nil { var balance BalanceInfo json.Unmarshal(data, &balance) // Populate L1 c.local.Store(key, &CacheEntry{ Data: &balance, CachedAt: time.Now(), }) return &balance, nil } // Cache miss: Query database balance, err := c.queryBalanceFromDB(ctx, accountID) if err != nil { return nil, err } // Populate both caches data, _ = json.Marshal(balance) c.redis.Set(ctx, key, data, 5*time.Minute) c.local.Store(key, &CacheEntry{ Data: balance, CachedAt: time.Now(), }) return balance, nil } // InvalidateBalance removes balance from all cache levels func (c *BankingCache) InvalidateBalance(ctx context.Context, accountID int64) { key := fmt.Sprintf("balance:%d", accountID) c.local.Delete(key) c.redis.Del(ctx, key) // Publish invalidation to other nodes c.redis.Publish(ctx, "cache_invalidation", key) }

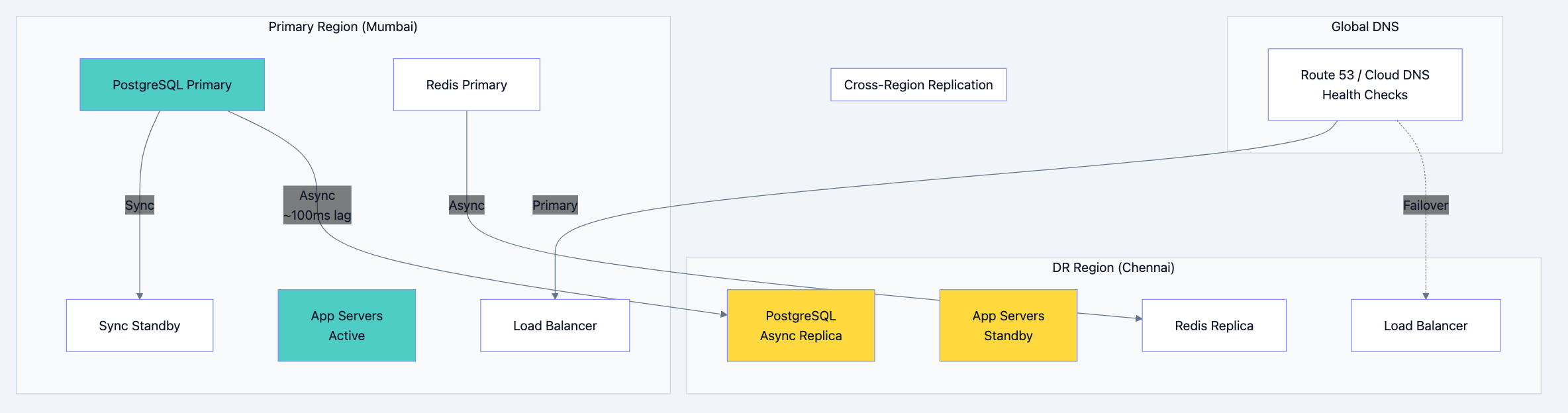

Part 6: High Availability & Disaster Recovery

Multi-Region Architecture

Banking database diagram 8

PostgreSQL High Availability

yaml# patroni.yml # Patroni manages PostgreSQL HA with automatic failover scope: banking-cluster namespace: /banking/ name: pg-node-1 restapi: listen: 0.0.0.0:8008 connect_address: pg-node-1:8008 etcd3: hosts: - etcd-1:2379 - etcd-2:2379 - etcd-3:2379 bootstrap: dcs: ttl: 30 loop_wait: 10 retry_timeout: 10 maximum_lag_on_failover: 1048576 # 1MB - don't promote if more lag synchronous_mode: true # Sync replication for zero data loss synchronous_mode_strict: true postgresql: use_pg_rewind: true parameters: max_connections: 200 shared_buffers: 8GB effective_cache_size: 24GB maintenance_work_mem: 2GB checkpoint_completion_target: 0.9 wal_buffers: 64MB default_statistics_target: 100 random_page_cost: 1.1 effective_io_concurrency: 200 work_mem: 20MB min_wal_size: 2GB max_wal_size: 8GB max_worker_processes: 8 max_parallel_workers_per_gather: 4 max_parallel_workers: 8 max_parallel_maintenance_workers: 4 # Replication wal_level: replica hot_standby: "on" max_wal_senders: 10 max_replication_slots: 10 hot_standby_feedback: "on" # Logging log_checkpoints: "on" log_connections: "on" log_disconnections: "on" log_lock_waits: "on" log_temp_files: 0 log_autovacuum_min_duration: 0 log_statement: ddl postgresql: listen: 0.0.0.0:5432 connect_address: pg-node-1:5432 data_dir: /var/lib/postgresql/data authentication: replication: username: replicator password: secret superuser: username: postgres password: secret rewind: username: rewind password: secret

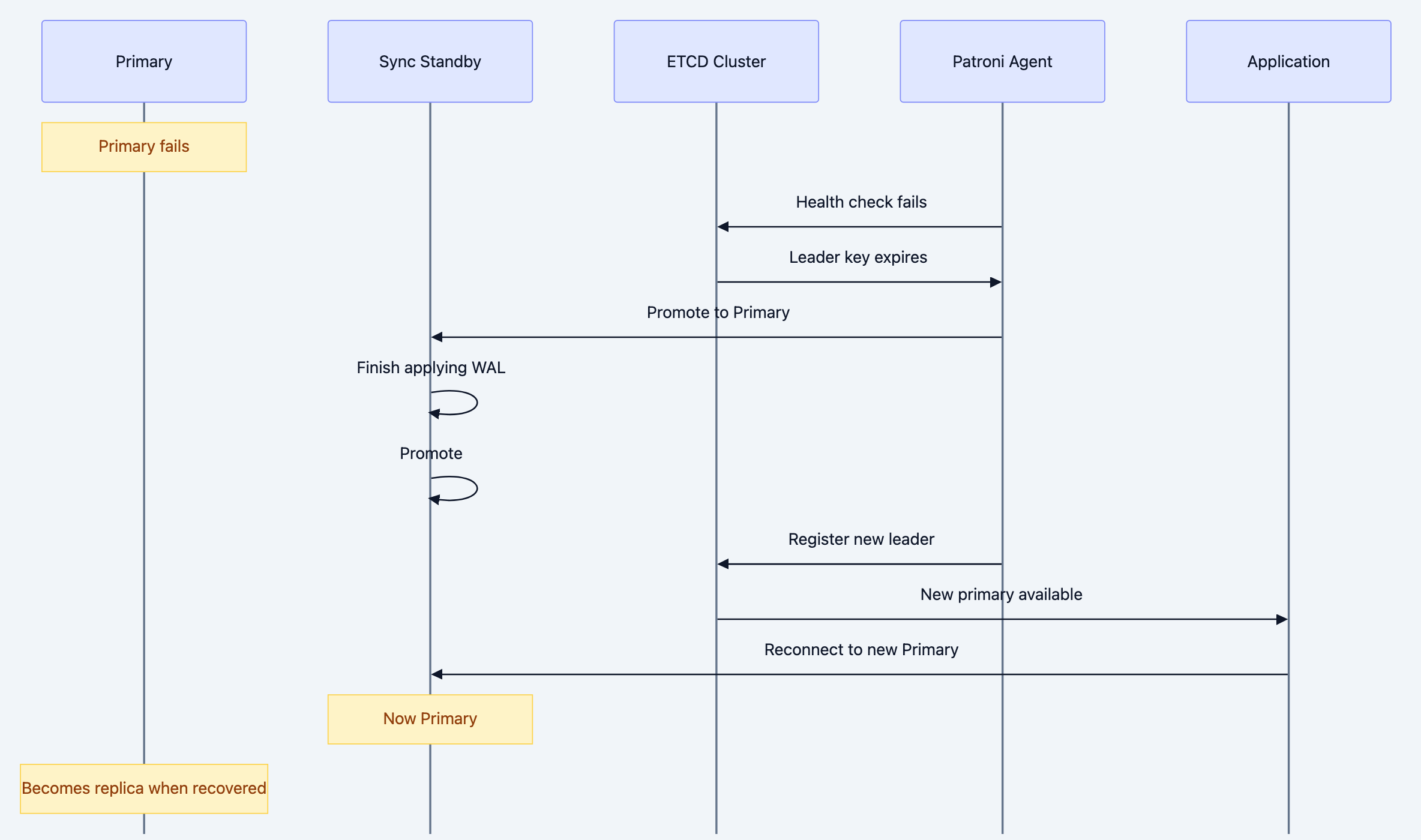

Automated Failover Process

Banking database diagram 9

Backup Strategy

bash#!/bin/bash # backup_strategy.sh # Multi-tier backup approach for banking data # ============================================ # TIER 1: Continuous WAL Archiving (RPO: ~0) # ============================================ # postgresql.conf # archive_mode = on # archive_command = 'pgbackrest --stanza=banking archive-push %p' # ============================================ # TIER 2: Daily Full Backups # ============================================ backup_full() { DATE=$(date +%Y%m%d) # Use pgBackRest for efficient backups pgbackrest --stanza=banking \ --type=full \ --compress-type=zst \ --compress-level=6 \ backup # Upload to S3 Glacier for long-term retention aws s3 cp /var/lib/pgbackrest/backup/banking/$DATE \ s3://bank-backups/banking/full/$DATE/ \ --storage-class GLACIER } # ============================================ # TIER 3: Hourly Incremental Backups # ============================================ backup_incremental() { pgbackrest --stanza=banking \ --type=incr \ backup } # ============================================ # TIER 4: Cross-Region Backup Replication # ============================================ replicate_to_dr() { # Sync to DR region aws s3 sync s3://bank-backups/banking/ \ s3://bank-backups-dr/banking/ \ --source-region ap-south-1 \ --region ap-southeast-1 } # ============================================ # Point-in-Time Recovery # ============================================ restore_to_point() { TARGET_TIME=$1 # e.g., "2024-01-15 14:30:00" # Stop PostgreSQL systemctl stop postgresql # Restore to specific point in time pgbackrest --stanza=banking \ --type=time \ --target="$TARGET_TIME" \ --target-action=promote \ restore # Start PostgreSQL systemctl start postgresql }

Recovery Time Objectives

| Scenario | RTO | RPO | Approach |

|---|---|---|---|

| Server crash | < 30 seconds | 0 | Automatic failover to sync standby |

| Data center failure | < 5 minutes | < 1 second | Failover to DR region |

| Data corruption | < 4 hours | Point-in-time | Restore from backup |

| Regional disaster | < 4 hours | < 5 minutes | DR activation |

| Complete rebuild | < 24 hours | Last backup | Full restore |

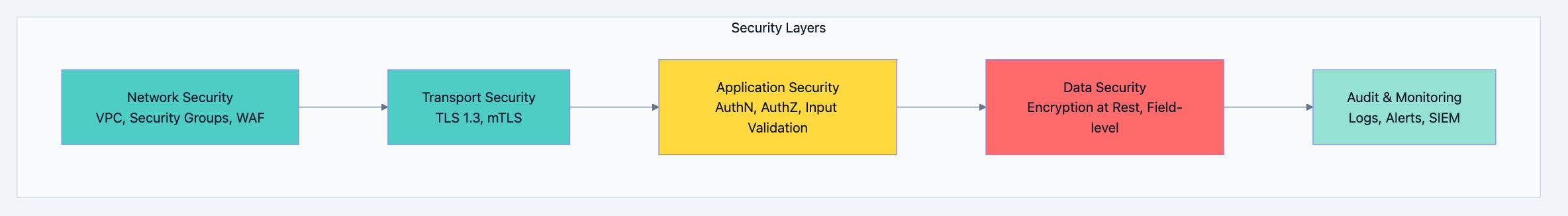

Part 7: Security & Compliance

Data Security Architecture

Banking database diagram 10

Encryption Implementation

go// encryption.go // Field-level encryption for sensitive data import ( "crypto/aes" "crypto/cipher" "crypto/rand" "encoding/base64" "io" ) type FieldEncryptor struct { key []byte // 256-bit key from HSM } // Encrypt sensitive field (PAN, Aadhaar, etc.) func (e *FieldEncryptor) Encrypt(plaintext string) (string, error) { block, err := aes.NewCipher(e.key) if err != nil { return "", err } gcm, err := cipher.NewGCM(block) if err != nil { return "", err } nonce := make([]byte, gcm.NonceSize()) if _, err := io.ReadFull(rand.Reader, nonce); err != nil { return "", err } ciphertext := gcm.Seal(nonce, nonce, []byte(plaintext), nil) return base64.StdEncoding.EncodeToString(ciphertext), nil } // Decrypt sensitive field func (e *FieldEncryptor) Decrypt(encrypted string) (string, error) { ciphertext, err := base64.StdEncoding.DecodeString(encrypted) if err != nil { return "", err } block, err := aes.NewCipher(e.key) if err != nil { return "", err } gcm, err := cipher.NewGCM(block) if err != nil { return "", err } nonceSize := gcm.NonceSize() nonce, ciphertext := ciphertext[:nonceSize], ciphertext[nonceSize:] plaintext, err := gcm.Open(nil, nonce, ciphertext, nil) if err != nil { return "", err } return string(plaintext), nil } // Database column encryption using PostgreSQL pgcrypto const createEncryptedColumn = ` -- Create encrypted column ALTER TABLE customers ADD COLUMN pan_encrypted BYTEA; -- Encrypt existing data UPDATE customers SET pan_encrypted = pgp_sym_encrypt(pan_number, $1) WHERE pan_number IS NOT NULL; -- Drop plaintext column ALTER TABLE customers DROP COLUMN pan_number; `

Audit Trail Implementation

sql-- audit_trigger.sql -- Automatic audit logging for all data changes CREATE OR REPLACE FUNCTION audit_trigger_function() RETURNS TRIGGER AS $$ DECLARE audit_row audit_logs; changed_fields TEXT[]; BEGIN audit_row.table_name = TG_TABLE_NAME; audit_row.user_id = current_setting('app.current_user_id', true)::BIGINT; audit_row.user_type = current_setting('app.current_user_type', true); audit_row.ip_address = current_setting('app.client_ip', true)::INET; audit_row.session_id = current_setting('app.session_id', true); audit_row.created_at = CURRENT_TIMESTAMP; IF TG_OP = 'INSERT' THEN audit_row.operation = 'I'; audit_row.record_id = NEW.id; audit_row.new_values = to_jsonb(NEW); audit_row.old_values = NULL; ELSIF TG_OP = 'UPDATE' THEN audit_row.operation = 'U'; audit_row.record_id = NEW.id; audit_row.old_values = to_jsonb(OLD); audit_row.new_values = to_jsonb(NEW); -- Track which fields changed SELECT array_agg(key) INTO changed_fields FROM jsonb_each(to_jsonb(NEW)) WHERE to_jsonb(NEW) -> key != to_jsonb(OLD) -> key; audit_row.changed_fields = changed_fields; ELSIF TG_OP = 'DELETE' THEN audit_row.operation = 'D'; audit_row.record_id = OLD.id; audit_row.old_values = to_jsonb(OLD); audit_row.new_values = NULL; END IF; INSERT INTO audit_logs VALUES (audit_row.*); RETURN NULL; END; $$ LANGUAGE plpgsql; -- Apply to critical tables CREATE TRIGGER audit_customers AFTER INSERT OR UPDATE OR DELETE ON customers FOR EACH ROW EXECUTE FUNCTION audit_trigger_function(); CREATE TRIGGER audit_accounts AFTER INSERT OR UPDATE OR DELETE ON accounts FOR EACH ROW EXECUTE FUNCTION audit_trigger_function(); CREATE TRIGGER audit_transactions AFTER INSERT ON transactions FOR EACH ROW EXECUTE FUNCTION audit_trigger_function();

Access Control Matrix

sql-- roles_and_permissions.sql -- Role-based access control for banking operations -- Create roles CREATE ROLE banking_readonly; CREATE ROLE banking_teller; CREATE ROLE banking_officer; CREATE ROLE banking_manager; CREATE ROLE banking_admin; -- Read-only role (customer service) GRANT SELECT ON customers, accounts, transactions TO banking_readonly; REVOKE ALL ON audit_logs FROM banking_readonly; -- Teller role (branch operations) GRANT banking_readonly TO banking_teller; GRANT INSERT ON transactions TO banking_teller; GRANT UPDATE (balance, available_balance, hold_amount) ON accounts TO banking_teller; -- Limit: Can only process transactions up to 50,000 -- Officer role (approvals) GRANT banking_teller TO banking_officer; GRANT UPDATE ON transactions TO banking_officer; -- Can approve transactions up to 500,000 -- Manager role (branch manager) GRANT banking_officer TO banking_manager; GRANT INSERT, UPDATE ON customers TO banking_manager; GRANT INSERT, UPDATE ON accounts TO banking_manager; -- Can approve any transaction -- Admin role (IT admin) GRANT ALL ON ALL TABLES IN SCHEMA public TO banking_admin; -- But restricted by application-level controls -- Row-level security for branch isolation ALTER TABLE customers ENABLE ROW LEVEL SECURITY; CREATE POLICY branch_isolation ON customers USING ( branch_id = current_setting('app.user_branch_id')::INT OR current_setting('app.user_role') = 'admin' );

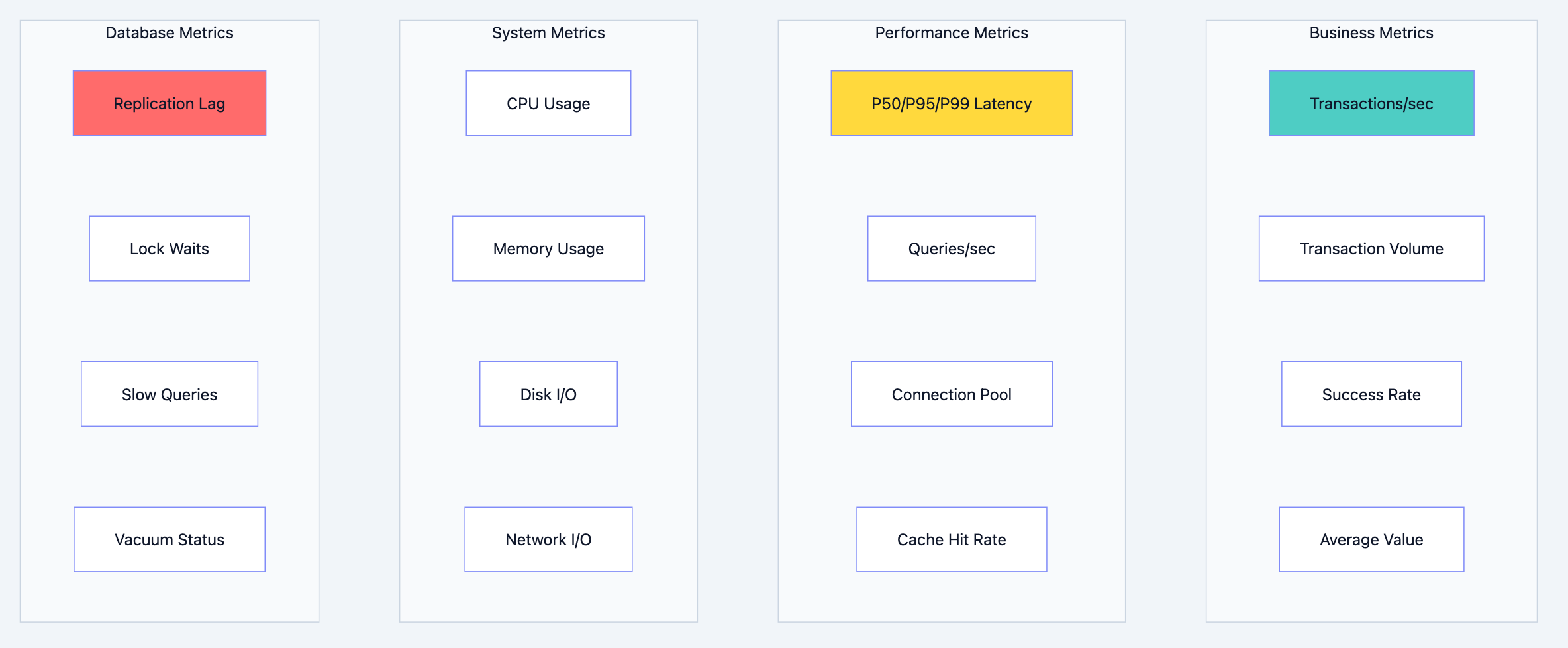

Part 8: Monitoring & Observability

Key Metrics Dashboard

Banking database diagram 11

Monitoring Implementation

go// metrics.go // Prometheus metrics for banking operations import ( "github.com/prometheus/client_golang/prometheus" "github.com/prometheus/client_golang/prometheus/promauto" ) var ( // Transaction metrics transactionTotal = promauto.NewCounterVec( prometheus.CounterOpts{ Name: "banking_transactions_total", Help: "Total number of transactions processed", }, []string{"type", "channel", "status"}, ) transactionDuration = promauto.NewHistogramVec( prometheus.HistogramOpts{ Name: "banking_transaction_duration_seconds", Help: "Transaction processing duration", Buckets: []float64{0.01, 0.025, 0.05, 0.1, 0.25, 0.5, 1, 2.5, 5}, }, []string{"type"}, ) transactionAmount = promauto.NewHistogramVec( prometheus.HistogramOpts{ Name: "banking_transaction_amount", Help: "Transaction amount distribution", Buckets: []float64{100, 500, 1000, 5000, 10000, 50000, 100000, 500000, 1000000}, }, []string{"type", "direction"}, ) // Account metrics activeAccounts = promauto.NewGauge( prometheus.GaugeOpts{ Name: "banking_active_accounts", Help: "Number of active accounts", }, ) // Database metrics dbPoolSize = promauto.NewGaugeVec( prometheus.GaugeOpts{ Name: "banking_db_pool_connections", Help: "Database connection pool status", }, []string{"state"}, // idle, in_use, waiting ) replicationLag = promauto.NewGauge( prometheus.GaugeOpts{ Name: "banking_db_replication_lag_bytes", Help: "Replication lag in bytes", }, ) // Cache metrics cacheHits = promauto.NewCounterVec( prometheus.CounterOpts{ Name: "banking_cache_hits_total", Help: "Cache hits", }, []string{"cache_type"}, ) cacheMisses = promauto.NewCounterVec( prometheus.CounterOpts{ Name: "banking_cache_misses_total", Help: "Cache misses", }, []string{"cache_type"}, ) ) // RecordTransaction records metrics for a transaction func RecordTransaction(txnType, channel, status string, duration time.Duration, amount float64, direction string) { transactionTotal.WithLabelValues(txnType, channel, status).Inc() transactionDuration.WithLabelValues(txnType).Observe(duration.Seconds()) transactionAmount.WithLabelValues(txnType, direction).Observe(amount) }

Alert Rules

yaml# alerts.yml # Prometheus alerting rules for banking system groups: - name: banking_critical rules: # Transaction failures - alert: HighTransactionFailureRate expr: | sum(rate(banking_transactions_total{status="failed"}[5m])) / sum(rate(banking_transactions_total[5m])) > 0.01 for: 2m labels: severity: critical annotations: summary: "Transaction failure rate above 1%" description: "{{ $value | humanizePercentage }} of transactions failing" # Latency - alert: HighTransactionLatency expr: | histogram_quantile(0.99, rate(banking_transaction_duration_seconds_bucket[5m])) > 2 for: 5m labels: severity: critical annotations: summary: "P99 transaction latency above 2 seconds" # Replication lag - alert: HighReplicationLag expr: banking_db_replication_lag_bytes > 10485760 # 10MB for: 1m labels: severity: critical annotations: summary: "Database replication lag above 10MB" # Connection pool exhaustion - alert: ConnectionPoolExhaustion expr: | banking_db_pool_connections{state="waiting"} > 10 for: 1m labels: severity: warning annotations: summary: "Database connection pool has waiting requests" - name: banking_business rules: # Unusual transaction volume - alert: UnusualTransactionVolume expr: | abs(sum(rate(banking_transactions_total[1h])) - sum(rate(banking_transactions_total[1h] offset 1d))) / sum(rate(banking_transactions_total[1h] offset 1d)) > 0.5 for: 30m labels: severity: warning annotations: summary: "Transaction volume differs >50% from same time yesterday" # Large transaction spike - alert: LargeTransactionSpike expr: | sum(rate(banking_transactions_total{amount_bucket="+Inf"}[5m])) > 2 * sum(rate(banking_transactions_total{amount_bucket="+Inf"}[5m] offset 1h)) for: 10m labels: severity: info annotations: summary: "Large transaction volume spike detected"

Part 9: Performance Optimization

Query Optimization

sql-- slow_query_analysis.sql -- Find and optimize slow queries -- Enable slow query logging ALTER SYSTEM SET log_min_duration_statement = 100; -- Log queries > 100ms ALTER SYSTEM SET auto_explain.log_min_duration = 100; ALTER SYSTEM SET auto_explain.log_analyze = true; SELECT pg_reload_conf(); -- Find worst performing queries SELECT substring(query, 1, 100) as query_preview, calls, total_exec_time / 1000 as total_seconds, mean_exec_time as avg_ms, stddev_exec_time as stddev_ms, rows, shared_blks_hit + shared_blks_read as total_blocks, 100.0 * shared_blks_hit / NULLIF(shared_blks_hit + shared_blks_read, 0) as cache_hit_pct FROM pg_stat_statements ORDER BY total_exec_time DESC LIMIT 20; -- Find queries with poor cache hit ratio SELECT substring(query, 1, 100), 100.0 * shared_blks_hit / NULLIF(shared_blks_hit + shared_blks_read, 0) as cache_hit_pct, calls FROM pg_stat_statements WHERE calls > 1000 AND shared_blks_hit + shared_blks_read > 0 ORDER BY cache_hit_pct LIMIT 20; -- Identify missing indexes SELECT schemaname, relname as table_name, seq_scan, seq_tup_read, idx_scan, idx_tup_fetch, n_live_tup, round(100.0 * idx_scan / NULLIF(seq_scan + idx_scan, 0), 2) as idx_scan_pct FROM pg_stat_user_tables WHERE seq_scan > 100 ORDER BY seq_tup_read DESC LIMIT 20; -- Unused indexes (candidates for removal) SELECT schemaname, relname as table_name, indexrelname as index_name, idx_scan, pg_size_pretty(pg_relation_size(indexrelid)) as index_size FROM pg_stat_user_indexes WHERE idx_scan = 0 AND indexrelname NOT LIKE '%_pkey' ORDER BY pg_relation_size(indexrelid) DESC;

Index Strategy for Banking Tables

sql-- banking_indexes.sql -- Optimized index strategy for banking workload -- ============================================ -- ACCOUNTS TABLE INDEXES -- ============================================ -- Primary lookup by account number CREATE UNIQUE INDEX CONCURRENTLY idx_accounts_number_lookup ON accounts(account_number) WHERE status != 'CLOSED'; -- Customer's accounts list CREATE INDEX CONCURRENTLY idx_accounts_customer_status ON accounts(customer_id, status) INCLUDE (account_number, account_type_id, balance) WHERE status = 'ACTIVE'; -- Branch reporting CREATE INDEX CONCURRENTLY idx_accounts_branch_type ON accounts(branch_id, account_type_id) WHERE status = 'ACTIVE'; -- ============================================ -- TRANSACTIONS TABLE INDEXES -- ============================================ -- Customer statement query (most common) -- Query: Get transactions for account, ordered by date, limit 50 CREATE INDEX CONCURRENTLY idx_txn_statement ON transactions(account_id, created_at DESC) INCLUDE (transaction_ref, amount, direction, balance_after, description, status); -- Reference number lookup (for support queries) CREATE INDEX CONCURRENTLY idx_txn_reference ON transactions(reference_number) WHERE reference_number IS NOT NULL; -- Pending transactions (for processing queue) CREATE INDEX CONCURRENTLY idx_txn_pending ON transactions(status, created_at) WHERE status IN ('PENDING', 'PROCESSING'); -- Daily totals for limit checking CREATE INDEX CONCURRENTLY idx_txn_daily_totals ON transactions(account_id, created_at::date, direction) WHERE status = 'COMPLETED'; -- ============================================ -- PARTIAL INDEXES FOR HOT DATA -- ============================================ -- Recent transactions (last 30 days) - frequently accessed CREATE INDEX CONCURRENTLY idx_txn_recent ON transactions(account_id, created_at DESC) WHERE created_at > CURRENT_DATE - INTERVAL '30 days'; -- Active accounts only CREATE INDEX CONCURRENTLY idx_accounts_active ON accounts(account_id) INCLUDE (balance, available_balance) WHERE status = 'ACTIVE'; -- ============================================ -- BRIN INDEXES FOR TIME-SERIES DATA -- ============================================ -- Efficient for time-range queries on partitioned data CREATE INDEX CONCURRENTLY idx_txn_brin_created ON transactions USING BRIN (created_at);

Connection Pool Optimization

go// connection_optimization.go // Fine-tuned connection pool for banking workload func OptimizedPoolConfig() *pgxpool.Config { config, _ := pgxpool.ParseConfig(os.Getenv("DATABASE_URL")) // Pool sizing based on workload analysis // Formula: connections = (core_count * 2) + effective_spindle_count // For SSDs: ~2-4x core count config.MaxConns = 50 // For 16-core server with SSD config.MinConns = 10 // Keep minimum ready // Connection lifecycle config.MaxConnLifetime = 30 * time.Minute // Recycle before stale config.MaxConnIdleTime = 5 * time.Minute // Release unused quickly config.HealthCheckPeriod = 30 * time.Second // Detect failures // Query execution config.ConnConfig.DefaultQueryExecMode = pgx.QueryExecModeCacheStatement // Timeouts config.ConnConfig.ConnectTimeout = 5 * time.Second // After connect hook - set session parameters config.AfterConnect = func(ctx context.Context, conn *pgx.Conn) error { // Set work_mem for complex queries _, err := conn.Exec(ctx, "SET work_mem = '256MB'") if err != nil { return err } // Set statement timeout to prevent runaway queries _, err = conn.Exec(ctx, "SET statement_timeout = '30s'") return err } return config }

Part 10: Testing Strategy

Transaction Testing

go// transaction_test.go // Comprehensive tests for banking transactions func TestTransferBasic(t *testing.T) { ctx := context.Background() service := setupTestService(t) // Create test accounts fromAccount := createTestAccount(t, 10000.00) toAccount := createTestAccount(t, 5000.00) // Execute transfer result, err := service.Transfer(ctx, TransferRequest{ FromAccountID: fromAccount.AccountID, ToAccountID: toAccount.AccountID, Amount: 1000.00, IdempotencyKey: uuid.New().String(), }) require.NoError(t, err) assert.Equal(t, 9000.00, result.FromBalance) assert.Equal(t, 6000.00, result.ToBalance) // Verify database state verifyAccountBalance(t, fromAccount.AccountID, 9000.00) verifyAccountBalance(t, toAccount.AccountID, 6000.00) // Verify transaction records verifyTransactionPair(t, result.TransactionRef, 1000.00) // Verify ledger entries balance verifyLedgerBalance(t, result.TransactionRef) } func TestTransferInsufficientBalance(t *testing.T) { ctx := context.Background() service := setupTestService(t) fromAccount := createTestAccount(t, 500.00) toAccount := createTestAccount(t, 5000.00) _, err := service.Transfer(ctx, TransferRequest{ FromAccountID: fromAccount.AccountID, ToAccountID: toAccount.AccountID, Amount: 1000.00, IdempotencyKey: uuid.New().String(), }) assert.ErrorIs(t, err, ErrInsufficientBalance) // Verify no changes verifyAccountBalance(t, fromAccount.AccountID, 500.00) verifyAccountBalance(t, toAccount.AccountID, 5000.00) } func TestTransferIdempotency(t *testing.T) { ctx := context.Background() service := setupTestService(t) fromAccount := createTestAccount(t, 10000.00) toAccount := createTestAccount(t, 5000.00) idempotencyKey := uuid.New().String() // First request result1, err := service.Transfer(ctx, TransferRequest{ FromAccountID: fromAccount.AccountID, ToAccountID: toAccount.AccountID, Amount: 1000.00, IdempotencyKey: idempotencyKey, }) require.NoError(t, err) // Duplicate request with same idempotency key result2, err := service.Transfer(ctx, TransferRequest{ FromAccountID: fromAccount.AccountID, ToAccountID: toAccount.AccountID, Amount: 1000.00, IdempotencyKey: idempotencyKey, }) require.NoError(t, err) // Should return same result, not process twice assert.Equal(t, result1.TransactionRef, result2.TransactionRef) // Balance should only be debited once verifyAccountBalance(t, fromAccount.AccountID, 9000.00) } func TestTransferConcurrentDeadlockPrevention(t *testing.T) { ctx := context.Background() service := setupTestService(t) accountA := createTestAccount(t, 10000.00) accountB := createTestAccount(t, 10000.00) var wg sync.WaitGroup wg.Add(2) var err1, err2 error // Concurrent transfers in opposite directions go func() { defer wg.Done() _, err1 = service.Transfer(ctx, TransferRequest{ FromAccountID: accountA.AccountID, ToAccountID: accountB.AccountID, Amount: 100.00, IdempotencyKey: uuid.New().String(), }) }() go func() { defer wg.Done() _, err2 = service.Transfer(ctx, TransferRequest{ FromAccountID: accountB.AccountID, ToAccountID: accountA.AccountID, Amount: 100.00, IdempotencyKey: uuid.New().String(), }) }() wg.Wait() // Both should succeed (no deadlock) require.NoError(t, err1) require.NoError(t, err2) // Balances should be consistent balanceA := getAccountBalance(t, accountA.AccountID) balanceB := getAccountBalance(t, accountB.AccountID) assert.Equal(t, 20000.00, balanceA+balanceB) // Total unchanged } func TestTransferUnderLoad(t *testing.T) { if testing.Short() { t.Skip("Skipping load test in short mode") } ctx := context.Background() service := setupTestService(t) // Create 100 accounts accounts := make([]*Account, 100) for i := 0; i < 100; i++ { accounts[i] = createTestAccount(t, 10000.00) } // Run 1000 concurrent transfers var wg sync.WaitGroup errors := make(chan error, 1000) for i := 0; i < 1000; i++ { wg.Add(1) go func(i int) { defer wg.Done() from := accounts[rand.Intn(100)] to := accounts[rand.Intn(100)] if from.AccountID == to.AccountID { return } _, err := service.Transfer(ctx, TransferRequest{ FromAccountID: from.AccountID, ToAccountID: to.AccountID, Amount: 1.00, IdempotencyKey: uuid.New().String(), }) if err != nil && !errors.Is(err, ErrInsufficientBalance) { errors <- err } }(i) } wg.Wait() close(errors) // Check for unexpected errors for err := range errors { t.Errorf("Unexpected error: %v", err) } // Verify total balance unchanged totalBalance := 0.0 for _, acc := range accounts { totalBalance += getAccountBalance(t, acc.AccountID) } assert.Equal(t, 1000000.00, totalBalance) // 100 accounts * 10000 }

Summary: The Banking Database Checklist

Design Principles

- ACID is non-negotiable - Every transaction must be atomic, consistent, isolated, durable

- Double-entry bookkeeping - Every money movement has two sides

- Immutable audit trail - Every change is logged, nothing is truly deleted

- Defense in depth - Multiple layers of security and validation

Technology Stack

| Layer | Technology | Why |

|---|---|---|

| Primary DB | PostgreSQL / Oracle | ACID, mature, proven |

| Caching | Redis Cluster | Sub-ms reads, atomic operations |

| Messaging | Kafka | Durable, ordered, replayable events |

| Analytics | ClickHouse | Fast aggregations on historical data |

| Search | Elasticsearch | Customer name search, fuzzy matching |

Scaling Strategy

- Read replicas first - Most banking is read-heavy

- Connection pooling - PgBouncer or application-level

- Caching aggressively - Balance lookups, customer data

- Partitioning - Transactions by date

- Sharding - Only when other options exhausted

High Availability

- RPO: Near zero - Synchronous replication

- RTO: < 30 seconds - Automatic failover

- Multi-region DR - Async replication to DR site

- Daily backups - Point-in-time recovery capability

Security

- Encryption at rest - AES-256 for all data

- Encryption in transit - TLS 1.3 everywhere

- Field-level encryption - PAN, Aadhaar, etc.

- Row-level security - Branch isolation

- Audit everything - Complete trail for regulators

Monitoring

- Transaction success rate - Alert if < 99.9%

- P99 latency - Alert if > 2 seconds

- Replication lag - Alert if > 1 second

- Connection pool - Alert if waiting > 0

This is how you design a database that handles millions of transactions, never loses money, and lets people sleep at night.