Why Cloud Internal Networks Are Lightning Fast: The Secret Behind AWS and GCP

The Speed You Never Notice

You deploy your application to AWS. Your database runs on one server, your API on another, and your cache on a third. They talk to each other constantly, exchanging millions of messages per day. Yet everything feels instant. You never think about it.

Then one day, you try to connect those same services across different cloud providers, or worse, across the regular internet. Suddenly, everything slows down. Requests that took 1 millisecond now take 50. What changed?

The answer lies in something cloud providers rarely talk about but spend billions building: their internal networks. These private highways between servers are fundamentally different from the internet you use every day. Understanding why they are so fast reveals how modern applications can do things that were impossible a decade ago.

The Internet Speed Limit Problem

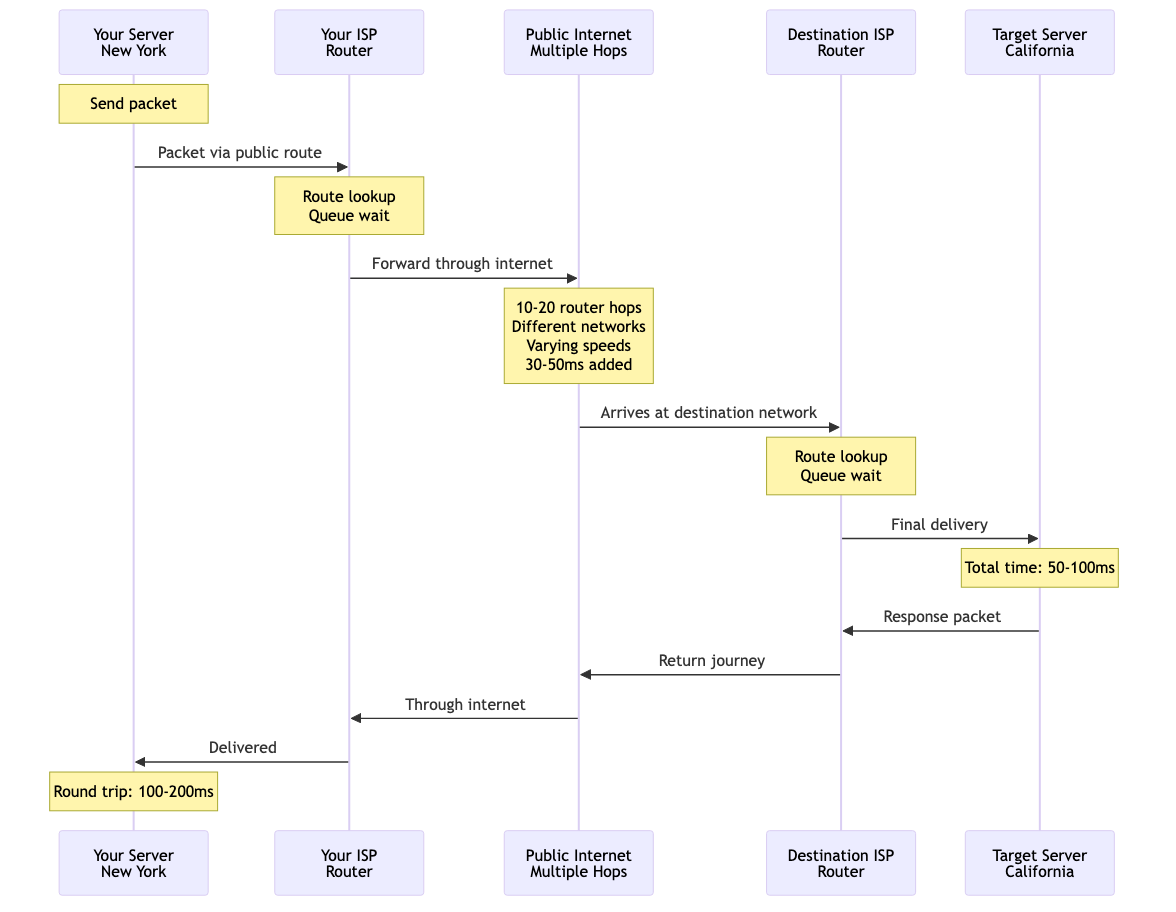

When you send data across the regular internet, your packet goes on a journey. It hops through your ISP's router, then to a regional hub, maybe across a submarine cable, through several more networks, and finally reaches its destination. Each hop adds delay. Each router makes decisions. Each network has its own speed limits.

More importantly, the internet was designed to connect everyone, everywhere. That brings enormous flexibility, but also compromise. Every packet must pass through routers built by different companies, running different software and following general-purpose rules meant to work in any situation.

This is like shipping a package through the regular postal service. It works great for reaching anywhere in the world, but there are many stops, many handlers, and many rules to follow.

The Cloud Private Highway

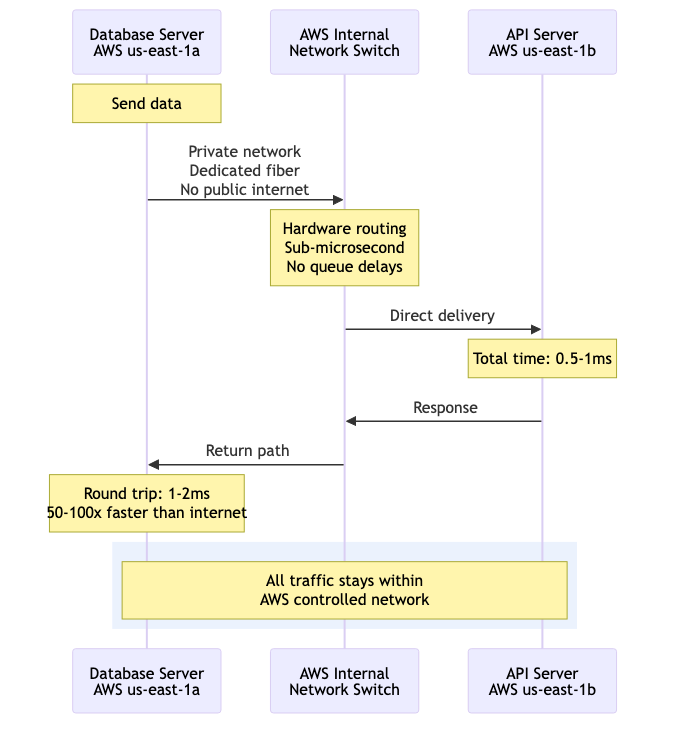

Inside AWS or GCP, things work differently. When your database server talks to your API server, both living in the same region, that traffic never touches the public internet. Instead, it travels on a private network that cloud providers own and control completely.

Think of it like Amazon's internal delivery network for their warehouses. They don't use public roads or postal services to move products between buildings in the same warehouse complex. They have their own conveyor belts, their own trucks, their own optimized system. It is dramatically faster because they control every piece.

Cloud providers have built enormous private networks connecting all their data centers. These networks use fiber optic cables they own, routers they configure exactly how they want, and protocols optimized for their specific use case.

The Secret Protocol Advantage

Here is where it gets interesting. On the public internet, everyone uses standard protocols like TCP/IP. These protocols are brilliant because they work everywhere, but they carry overhead for handling worst-case scenarios: they assume packets might get lost, networks might be congested, and routes might change unpredictably.

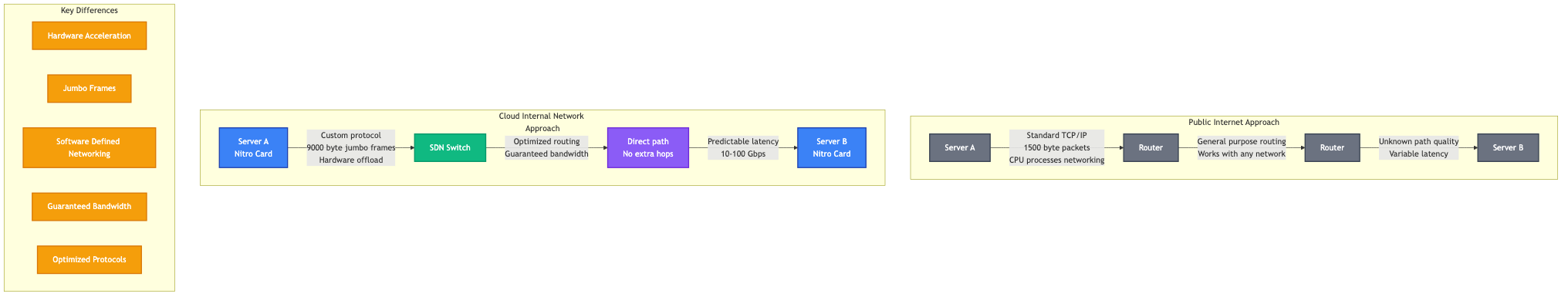

Inside cloud networks, providers can do better. While they still use IP for addressing, they layer on custom protocols and optimizations:

AWS uses something called the Nitro System, which offloads virtualization work, including networking, to dedicated hardware. Virtual network processing that would otherwise consume the host's CPU cycles is handled by specialized Nitro cards at line rate, leaving the processor free for your workloads.

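GCP also runs custom protocols and software inside its network to reduce overhead. It relies heavily on Software Defined Networking (SDN), in which a central control plane programs how packets are forwarded. That lets Google reroute traffic and rebalance capacity quickly across the whole network in response to traffic patterns, instead of reconfiguring each device by hand.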

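They also use techniques like jumbo frames (larger packets, so less header and per-packet processing overhead for the same amount of data) and more direct paths between servers (fewer unnecessary hops). On the public internet, you cannot assume every network along the path supports these features, so traffic generally sticks to standard 1,500-byte packets. Inside a cloud provider's network, the provider controls both ends and can enable them, for example between instances in the same VPC, although traffic that leaves that boundary, such as traffic to the internet, is usually limited to standard-size packets again.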
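
To see why bigger frames help, here is a back-of-the-envelope sketch of how much of each Ethernet frame carries application data. It assumes IPv4 and TCP headers without options (40 bytes total) and counts 38 bytes of per-frame Ethernet cost on the wire (14-byte header, 4-byte frame check sequence, 8-byte preamble, 12-byte inter-frame gap). The 9001-byte MTU is the jumbo size AWS documents for traffic within a VPC; other providers use different values, and real stacks often add TCP options that shrink the payload slightly.

```python
import math

# Assumed per-packet costs: IPv4 + TCP headers without options, plus Ethernet framing.
IP_TCP_HEADERS = 20 + 20               # bytes inside the MTU that are not payload
ETHERNET_OVERHEAD = 14 + 4 + 8 + 12    # header + FCS + preamble + inter-frame gap


def payload_per_packet(mtu: int) -> int:
    """Application bytes carried by one full-sized TCP segment."""
    return mtu - IP_TCP_HEADERS


def wire_efficiency(mtu: int) -> float:
    """Fraction of bytes on the wire that are application payload."""
    return payload_per_packet(mtu) / (mtu + ETHERNET_OVERHEAD)


def packets_needed(num_bytes: int, mtu: int) -> int:
    """Full-sized data packets required to move num_bytes (ACKs not counted)."""
    return math.ceil(num_bytes / payload_per_packet(mtu))


if __name__ == "__main__":
    one_mb = 1_000_000
    for mtu in (1500, 9001):
        print(f"MTU {mtu}: efficiency {wire_efficiency(mtu):.1%}, "
              f"{packets_needed(one_mb, mtu)} packets per MB")
```

Under these assumptions, efficiency only rises from about 94.9% to about 99.1%. The bigger win is the packet count: 685 versus 112 packets per megabyte, roughly six times less per-packet work for both endpoints.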

The Latency Numbers That Matter

Let me give you concrete numbers. On the public internet, a round trip between two servers in different cities might take 50 to 100 milliseconds. Between two servers in the same Availability Zone of AWS's us-east-1 region, that same round trip typically takes well under a millisecond, often just hundreds of microseconds. Even between Availability Zones in the same region, it usually stays around a millisecond.

That is not a small difference. It is a 50 to 100 times speedup. This makes entirely new architectures possible.

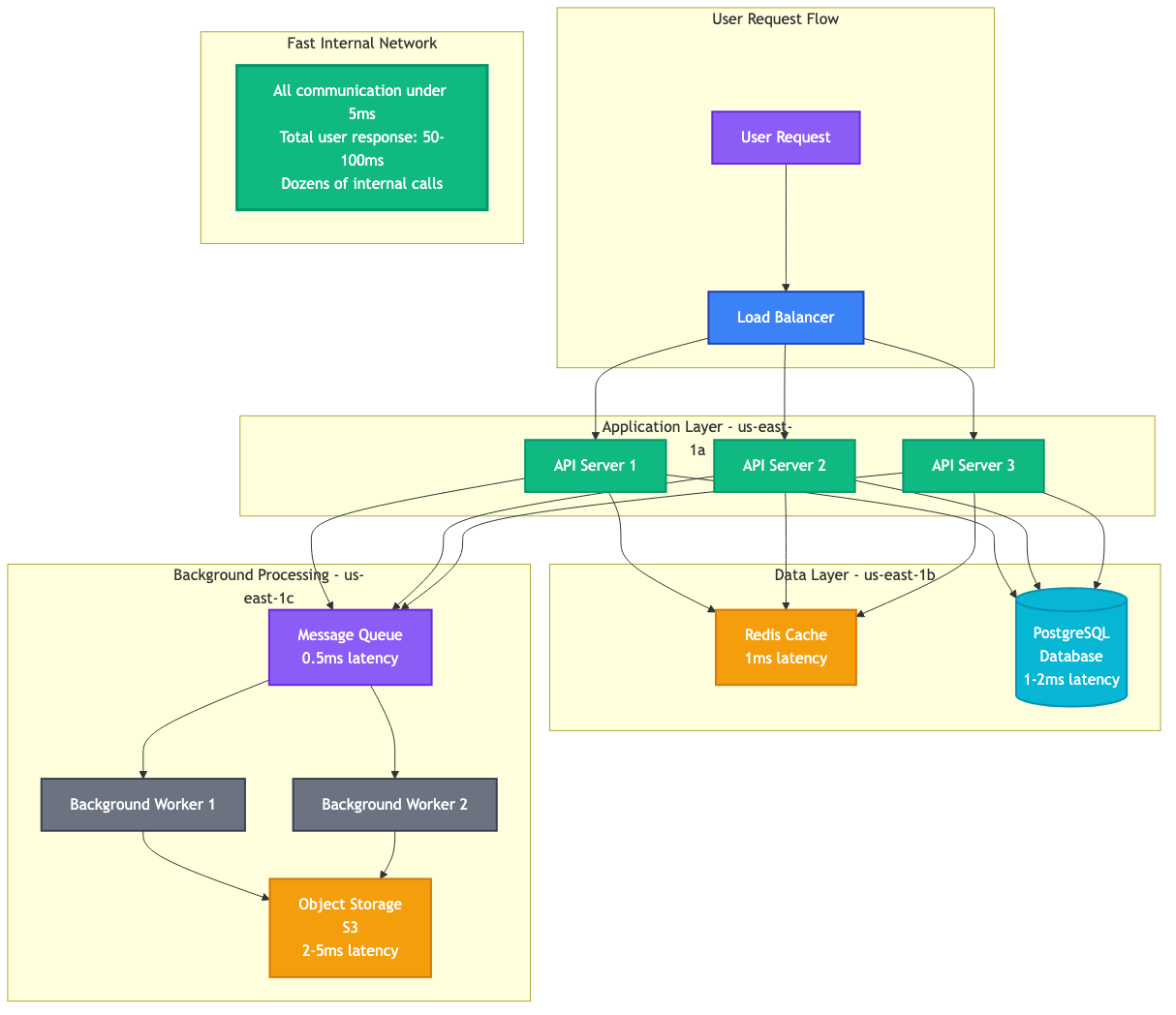

Consider a microservices application where dozens of services call each other to handle a single user request. Suppose one request triggers 50 service calls, one after another. If each call takes 50 ms over the public internet, your user waits 2.5 seconds for a response. Inside a cloud network where each call takes 1 ms, the same request finishes in about 50 milliseconds. One feels slow. The other feels instant.
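
The arithmetic behind that comparison fits in a few lines. This sketch assumes 50 calls (standing in for "dozens"), a fixed round-trip time per call, and zero processing time inside each service. It also shows, for contrast, the idealized case where independent calls are issued in parallel.

```python
def sequential_latency_ms(num_calls: int, rtt_ms: float) -> float:
    """Each call waits for the previous one, so the round trips add up."""
    return num_calls * rtt_ms


def parallel_latency_ms(num_calls: int, rtt_ms: float) -> float:
    """Independent calls sent at once finish after about one round trip
    (idealized: ignores queueing and slow outliers)."""
    return rtt_ms if num_calls > 0 else 0.0


if __name__ == "__main__":
    calls = 50  # hypothetical number of service calls behind one user request
    for label, rtt in (("public internet", 50.0), ("same cloud region", 1.0)):
        print(f"{label}: {sequential_latency_ms(calls, rtt):.0f} ms sequential, "
              f"{parallel_latency_ms(calls, rtt):.0f} ms parallel")
```

Chained calls multiply the per-hop round trip, which is why a 50x difference in network latency shows up as a 50x difference in what the user experiences.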

How This Powers Distributed Systems

This speed advantage is why distributed systems took off in the cloud era. Before fast internal networks, putting your database on one server and your application on another meant paying a latency penalty. Developers avoided it when possible.

Now you can split a system into many specialized pieces with far less concern about network speed. Your application can store data in a managed database service on one set of servers, cache frequently accessed data in Redis on another, process background jobs on dedicated workers, serve static files from object storage, and coordinate everything with a message queue. Each piece runs on different hardware optimized for its specific job, and the fast internal network ties it all together.

This is the pattern behind large services such as Netflix, Spotify, and Slack, whose backends run in the cloud as many cooperating services. They split complex systems into manageable pieces and rely on the provider's network to keep everything coordinated.

Beyond Just Speed: Bandwidth and Reliability

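Speed is only part of the story. Cloud internal networks also offer massive bandwidth. Depending on instance type and size, a single server can get anywhere from a few Gbps to 100 Gbps or more within a region, although a single connection is often capped well below the instance's total. Some specialized instances, such as large GPU instances built for machine learning, advertise aggregate network bandwidth in the terabits per second.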

Compare that to your home internet connection at maybe 100 Mbps, or even a business connection at 1 Gbps. Cloud internal networks are in a different league entirely.
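
Those numbers are easier to feel as wall-clock time. The sketch below computes the ideal time to move 1 TB over each link. It assumes the full line rate is usable and ignores protocol overhead and per-connection limits, so real transfers will be slower. The 25 Gbps tier is an illustrative cloud value, not a specific instance type.

```python
def transfer_time_seconds(num_bytes: float, link_bps: float) -> float:
    """Ideal transfer time: bits to send divided by link rate (no overhead)."""
    return num_bytes * 8 / link_bps


if __name__ == "__main__":
    one_tb = 1e12  # decimal terabyte, in bytes
    links = {
        "home, 100 Mbps": 100e6,
        "business, 1 Gbps": 1e9,
        "cloud instance, 25 Gbps": 25e9,   # illustrative value
        "cloud instance, 100 Gbps": 100e9,
    }
    for name, bps in links.items():
        print(f"{name}: {transfer_time_seconds(one_tb, bps):,.0f} s")
```

Under those assumptions, 1 TB takes about 22 hours at 100 Mbps, just over 2 hours at 1 Gbps, a little over 5 minutes at 25 Gbps, and 80 seconds at 100 Gbps.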

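They also offer more predictable performance. On the public internet, your speed varies with congestion, routing changes, and other users' traffic. Inside a cloud network, performance is far more consistent because the provider controls the whole path and provisions capacity for it. It is not perfectly constant, though: smaller instance types, for example, may only sustain their advertised bandwidth in bursts.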
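
Consistency is something you can check rather than take on faith. One rough approach is to time many TCP connection handshakes to a service and compare the median (p50) with the tail (p99). On a quiet, well-provisioned network the two should stay close. This sketch uses only Python's standard library. Handshake time is just a proxy for application round-trip time, and the address in the example comment is a hypothetical placeholder for one of your own services.

```python
import socket
import statistics
import time


def connect_times_ms(host: str, port: int, samples: int = 100,
                     timeout: float = 2.0) -> list[float]:
    """Time `samples` TCP handshakes to host:port, in milliseconds."""
    results = []
    for _ in range(samples):
        start = time.perf_counter()
        # create_connection returns once the TCP handshake has completed.
        with socket.create_connection((host, port), timeout=timeout):
            elapsed = time.perf_counter() - start  # measured before the socket closes
        results.append(elapsed * 1000)
    return results


def summarize(times_ms: list[float]) -> dict[str, float]:
    """Median (p50) and 99th percentile (p99) of the measured times."""
    # quantiles(n=100) returns 99 cut points; index 98 is the 99th percentile.
    cuts = statistics.quantiles(times_ms, n=100, method="inclusive")
    return {"p50": statistics.median(times_ms), "p99": cuts[98]}


# Example against a hypothetical database address inside your VPC:
# print(summarize(connect_times_ms("10.0.0.10", 5432, samples=200)))
```

A p99 many times larger than the p50 is a hint that something along the path, or on the instance itself, is adding variable delay.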

The Multi Region Reality Check

This magic has limits. Move across regions, and latency climbs back toward what you would see on the internet, because distance dominates. Traffic from us-east-1 to eu-west-1 typically travels over the cloud provider's own backbone rather than the public internet, which helps with reliability and consistency, but it still has to cross the Atlantic.

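Cloud providers offer dedicated connections between regions, but physics still applies. Light in optical fiber travels at roughly two-thirds of its speed in a vacuum, about 200 kilometers per millisecond, and that caps how fast data can move. Virginia and Ireland are roughly 5,500 km apart along the Earth's surface, so a packet cannot make that trip in less than about 27 milliseconds one way, or about 55 milliseconds round trip, no matter how good your network is.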
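
That floor follows from a short calculation. The sketch below uses the haversine formula for great-circle distance and assumes light in fiber covers about 200 km per millisecond. The coordinates are approximate points for Ashburn, Virginia and Dublin, Ireland, chosen to stand in for the two regions; they are not exact data center locations.

```python
import math

EARTH_RADIUS_KM = 6371.0
FIBER_KM_PER_MS = 200.0  # light in glass: roughly 2/3 of c, about 200,000 km/s


def great_circle_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Haversine distance between two points given in decimal degrees."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dp = p2 - p1
    dl = math.radians(lon2 - lon1)
    a = math.sin(dp / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dl / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def min_rtt_ms(distance_km: float) -> float:
    """Lower bound on round-trip time over a perfectly straight fiber path."""
    return 2 * distance_km / FIBER_KM_PER_MS


if __name__ == "__main__":
    # Approximate coordinates: Ashburn, Virginia and Dublin, Ireland.
    d = great_circle_km(39.04, -77.49, 53.35, -6.26)
    print(f"{d:,.0f} km, one-way floor {d / FIBER_KM_PER_MS:.1f} ms, "
          f"round-trip floor {min_rtt_ms(d):.1f} ms")
```

Real cables do not follow the great circle, and every router and repeater adds a little time, so measured round trips between these regions come in above this floor.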

This is why application architecture matters. Keep frequently communicating services in the same region. Use caching and async processing for cross region communication. Understand the latency characteristics of your deployment.
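
As one illustration of the caching advice, here is a minimal read-through cache with a time-to-live, placed in front of a slow cross-region call. The `fetch` function and the 30-second TTL used in the note below are hypothetical stand-ins. A real deployment would more likely use a shared cache, such as Redis in the local region, and would have to decide how stale a cached value may safely be.

```python
import time
from typing import Callable, Dict, Hashable, Tuple


class TTLCache:
    """Read-through cache that keeps values for `ttl` seconds before refetching."""

    def __init__(self, fetch: Callable[[Hashable], object], ttl: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._fetch = fetch    # the slow call, e.g. to a service in another region
        self._ttl = ttl
        self._clock = clock    # injectable so expiry can be tested without sleeping
        self._entries: Dict[Hashable, Tuple[float, object]] = {}

    def get(self, key: Hashable) -> object:
        now = self._clock()
        entry = self._entries.get(key)
        if entry is not None and now - entry[0] < self._ttl:
            return entry[1]                  # fresh local copy: no cross-region trip
        value = self._fetch(key)             # miss or expired: pay the round trip
        self._entries[key] = (now, value)
        return value
```

With `TTLCache(fetch, ttl=30.0)`, repeated `get` calls for the same key within 30 seconds are answered locally, and only the first call after expiry pays the cross-region latency again.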

What This Means for You

If you build applications in the cloud, this internal network speed is a massive advantage you get largely for free. You do not pay a premium for sub-millisecond latency between your services, although data transfer between Availability Zones or regions is usually billed. You do not need to manage the network infrastructure. It just works.

But you do need to design for it. Putting your application and database in the same region is not just a best practice; it is the difference between a responsive application and a slow one. Keeping related services close matters enormously.

The dirty secret of cloud computing is not the servers or the storage. It is the network. Cloud providers have spent billions building private networks that are faster, more reliable, and higher in bandwidth than anything you could build yourself. This network infrastructure is what makes modern distributed systems practical.

Next time your microservices application responds in milliseconds despite coordinating dozens of components, remember it is not magic. It is physics, engineering, and billions of dollars in fiber optic cables working exactly as designed.