gRPC: High-Performance RPC Framework

Why This Matters

Imagine you're building a microservices architecture with 50+ services talking to each other. Using REST APIs with JSON, you face:

- Latency: JSON parsing overhead on every request

- Contract drift: No enforced schema, breaking changes slip through

- Boilerplate: Hand-writing client libraries for every language

- Bandwidth: Verbose JSON payloads consuming network resources

Now imagine a world where:

- Services serialize and parse messages several times faster thanks to a compact binary format

- Your API contract is code-generated in 10+ languages automatically

- Type safety is enforced at compile time, catching bugs before production

- Bi-directional streaming is built-in for real-time features

This is gRPC, Google's open-source, high-performance RPC framework. It grew out of Stubby, the internal RPC system that Google has described as handling on the order of 10 billion requests per second across its microservices. gRPC is used by Netflix, Square, Cisco, and thousands of other companies to build production-grade distributed systems.

By the end of this deep-dive, you'll understand:

- How Protocol Buffers achieve 5-10x faster serialization than JSON

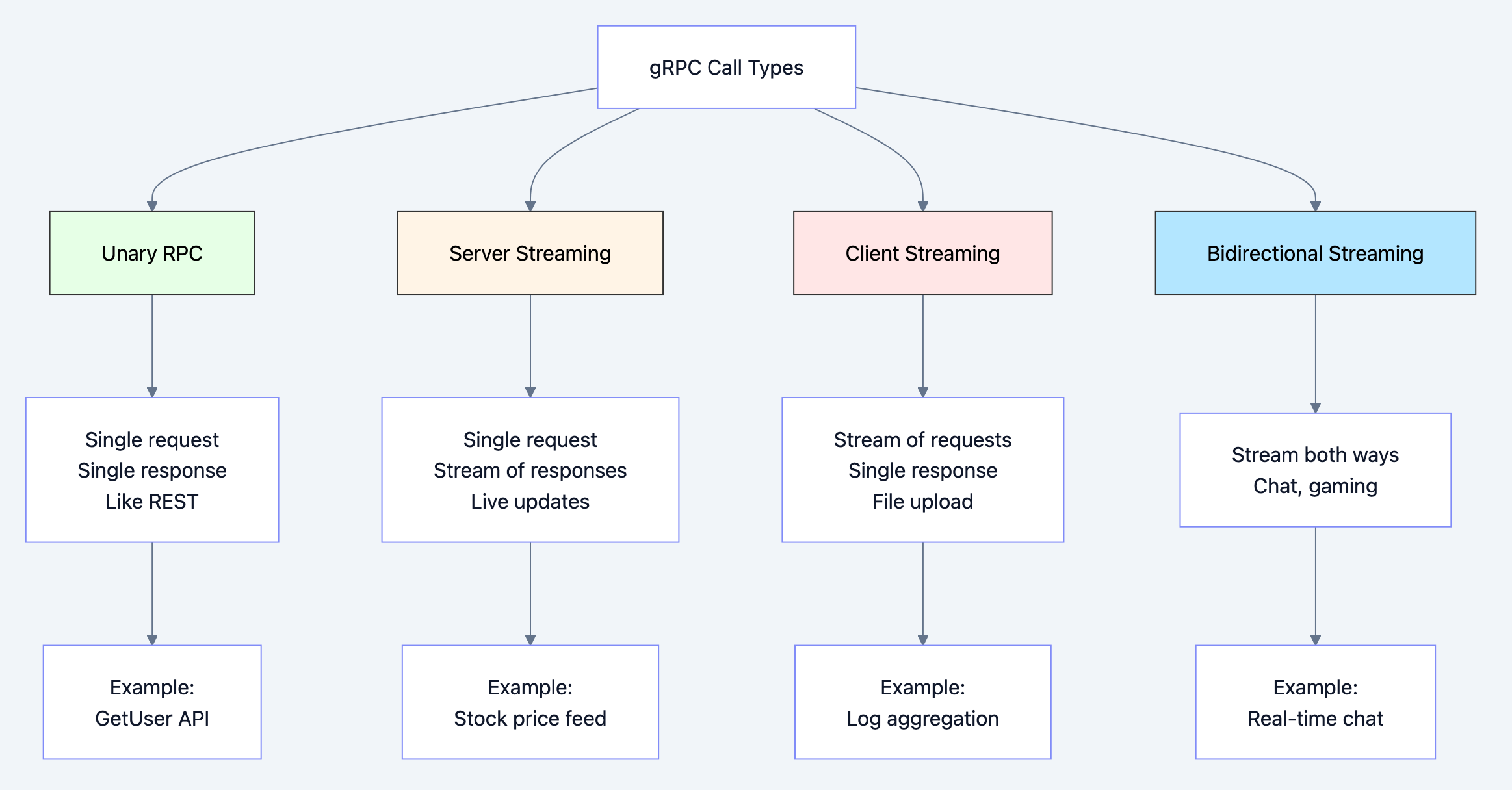

- The four types of gRPC calls (unary, server streaming, client streaming, bidirectional)

- How gRPC leverages HTTP/2 multiplexing for performance

- When to use gRPC vs REST vs GraphQL

Real-world impact: Netflix replaced their REST APIs with gRPC and reduced latency by 50% while increasing throughput by 100%.

What gRPC Solves

Problems with Traditional REST APIs

1. Schema Drift and Breaking Changes

With REST/JSON, you have no enforced contract:

```json
// Version 1: Client expects this
{"user_id": 123, "name": "Alice"}

// Version 2: Server changes field name (BREAKS CLIENT!)
{"userId": 123, "name": "Alice"}
```

No compile-time check catches this. It fails in production.

2. JSON Parsing Overhead

Every REST request involves:

- Serializing objects to JSON strings (expensive)

- Network transmission (verbose, large payloads)

- Parsing JSON strings back to objects (CPU-intensive)

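```go
// JSON: 75 bytes of text that must be parsed on every request
{"id":12345,"email":"user@example.com","created_at":"2026-01-27T10:30:00Z"}

// Protobuf: about 27 bytes in binary form (roughly 64% smaller),
// assuming created_at is stored as an int64 Unix timestamp.
// Decoding fills a generated struct directly; no text parsing is needed.
```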

3. Manual Client Library Development

For a REST API, you manually write clients for each language:

- Go client

- Python client

- Java client

- JavaScript client

All duplicating the same logic, prone to inconsistencies.

4. No Built-in Streaming

REST is request-response only. For real-time features, you need:

- Server-Sent Events (SSE) - one-way only

- WebSockets - complex protocol, not HTTP/2-native

- Polling - inefficient and high latency

Real-World Scenarios Where gRPC Excels

- Microservices Communication: Low-latency service-to-service calls

- Mobile Clients: Efficient battery usage with binary payloads

- Real-Time Systems: Stock trading, gaming, IoT telemetry

- Polyglot Environments: Auto-generated clients for 10+ languages

- High-Throughput APIs: Processing millions of requests per second

How gRPC Works

gRPC is built on three key technologies:

1. Protocol Buffers (Protobuf): The Language

Protocol Buffers is Google's language-neutral, platform-neutral serialization format. You define your API in a `.proto` file:

```protobuf
syntax = "proto3";

package users;

// Service definition
service UserService {
  rpc GetUser(GetUserRequest) returns (User);
  rpc ListUsers(ListUsersRequest) returns (stream User);
}

// Message definitions
message User {
  int64 id = 1;
  string email = 2;
  string name = 3;
  int64 created_at = 4;
}

message GetUserRequest {
  int64 user_id = 1;
}

message ListUsersRequest {
  int32 page_size = 1;
  string page_token = 2;
}
```

From this single file, you generate client and server code in Go, Java, Python, C++, Ruby, JavaScript, etc.

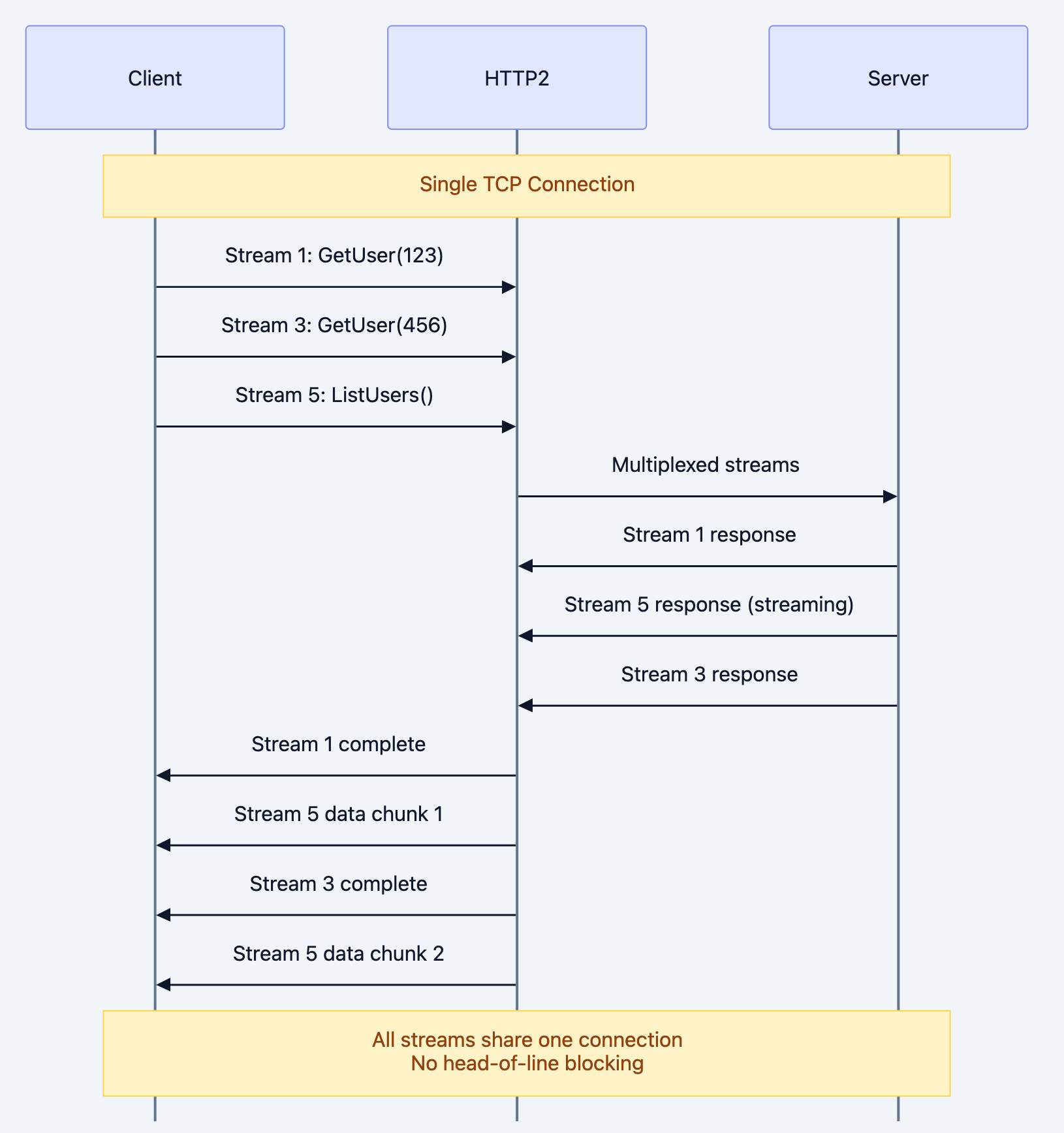

2. HTTP/2: The Transport

gRPC runs over HTTP/2, giving you:

- Multiplexing: Multiple RPC calls over one TCP connection

- Header compression: HPACK reduces overhead

- Binary framing: Efficient parsing

- Flow control: Prevents overwhelming receivers

3. Four Types of RPC Calls

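[Diagram 1: the four types of gRPC calls]

- Unary: Single request, single response

- Server streaming: Single request, stream of responses

- Client streaming: Stream of requests, single response

- Bidirectional streaming: Both sides stream simultaneously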

The Remote Function Call

Think of gRPC as calling a function on a remote machine as if it were local:

Traditional REST:

```go
// Manual HTTP client code
resp, err := http.Get("https://api.example.com/users/123")
if err != nil {
	return err
}
defer resp.Body.Close()

var user User
err = json.NewDecoder(resp.Body).Decode(&user)
```

gRPC:

```go
// Looks like a local function call!
user, err := client.GetUser(ctx, &pb.GetUserRequest{UserId: 123})
```

Under the hood:

- Request is serialized to binary (Protobuf)

- Sent over HTTP/2 connection

- Server deserializes, calls actual function

- Response is serialized back

- Client receives and deserializes automatically

The beauty: serialization, networking, and error propagation are all handled for you. You still have to check the returned error, but you never touch bytes or sockets.
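The same request/response flow can be tried with nothing but Go's standard library. The sketch below uses `net/rpc` (which encodes with gob, not Protobuf, and does not use HTTP/2) over an in-memory pipe, so it is only an analogy for how gRPC feels to the caller. The types `GetUserRequest`, `User`, `UserService`, and the helper `newClient` are hypothetical names for this example.

```go
package main

import (
	"errors"
	"fmt"
	"net"
	"net/rpc"
)

// Request and response types (stand-ins for generated message types).
type GetUserRequest struct{ UserID int64 }

type User struct {
	ID   int64
	Name string
}

// UserService is the server-side implementation.
type UserService struct{ users map[int64]User }

// GetUser has the method shape net/rpc requires: (args, *reply) error.
func (s *UserService) GetUser(req GetUserRequest, reply *User) error {
	u, ok := s.users[req.UserID]
	if !ok {
		return errors.New("user not found")
	}
	*reply = u
	return nil
}

// newClient registers the service and connects a client to it over an
// in-memory pipe, so no real network is involved.
func newClient() (*rpc.Client, error) {
	server := rpc.NewServer()
	svc := &UserService{users: map[int64]User{123: {ID: 123, Name: "Alice"}}}
	if err := server.Register(svc); err != nil {
		return nil, err
	}
	clientConn, serverConn := net.Pipe()
	go server.ServeConn(serverConn) // server: decode, call method, encode reply
	return rpc.NewClient(clientConn), nil
}

func main() {
	client, err := newClient()
	if err != nil {
		fmt.Println("setup failed:", err)
		return
	}
	defer client.Close()

	// The call site reads like a local function call.
	var user User
	err = client.Call("UserService.GetUser", GetUserRequest{UserID: 123}, &user)
	fmt.Println(user.Name, err)
}
```

gRPC replaces the string method name and untyped arguments with generated, type-checked stubs, which is where the compile-time safety comes from.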

Deep Technical Dive

1. Code Example: Defining a gRPC Service

Let's build a simple user service. First, define the API in `user.proto`:

```protobuf
syntax = "proto3";

package user;

option go_package = "github.com/example/userservice/pb";

// User service definition
service UserService {
  // Unary RPC: Get single user
  rpc GetUser(GetUserRequest) returns (GetUserResponse);

  // Server streaming: List users with pagination
  rpc ListUsers(ListUsersRequest) returns (stream User);

  // Client streaming: Batch create users
  rpc BatchCreateUsers(stream CreateUserRequest) returns (BatchCreateResponse);

  // Bidirectional streaming: Real-time user updates
  rpc StreamUserUpdates(stream UserUpdateRequest) returns (stream UserUpdateResponse);
}

// Message types
message User {
  int64 id = 1;
  string email = 2;
  string name = 3;
  bool is_active = 4;
  int64 created_at = 5;
}

message GetUserRequest {
  int64 user_id = 1;
}

message GetUserResponse {
  User user = 1;
  string error = 2;
}

message ListUsersRequest {
  int32 page_size = 1;
  string page_token = 2;
}

message CreateUserRequest {
  string email = 1;
  string name = 2;
}

message BatchCreateResponse {
  int32 created_count = 1;
  repeated int64 user_ids = 2;
}

message UserUpdateRequest {
  int64 user_id = 1;
  string field = 2;
  string value = 3;
}

message UserUpdateResponse {
  bool success = 1;
  User updated_user = 2;
}
```

Generate Go code:

```bash
protoc --go_out=. --go-grpc_out=. user.proto
```

This creates:

- `user.pb.go` - Message types

- `user_grpc.pb.go` - Service interface and client

2. Code Example: Implementing the gRPC Server

Now implement the server in Go:

```go
package main

import (
	"context"
	"io"
	"log"
	"net"
	"sync"
	"time"

	pb "github.com/example/userservice/pb"
	"google.golang.org/grpc"
	"google.golang.org/grpc/codes"
	"google.golang.org/grpc/status"
	"google.golang.org/protobuf/proto"
)

// UserServiceServer implements the gRPC service
type UserServiceServer struct {
	pb.UnimplementedUserServiceServer
	users  map[int64]*pb.User
	mu     sync.RWMutex
	nextID int64
}

func NewUserServiceServer() *UserServiceServer {
	return &UserServiceServer{
		users:  make(map[int64]*pb.User),
		nextID: 1,
	}
}

// Unary RPC: Get single user
func (s *UserServiceServer) GetUser(ctx context.Context, req *pb.GetUserRequest) (*pb.GetUserResponse, error) {
	s.mu.RLock()
	defer s.mu.RUnlock()

	user, exists := s.users[req.UserId]
	if !exists {
		return nil, status.Errorf(codes.NotFound, "user %d not found", req.UserId)
	}
	// Return a copy so later updates cannot race with response marshaling
	return &pb.GetUserResponse{User: proto.Clone(user).(*pb.User)}, nil
}

// Server streaming: Stream up to page_size users to the client
func (s *UserServiceServer) ListUsers(req *pb.ListUsersRequest, stream pb.UserService_ListUsersServer) error {
	pageSize := int(req.PageSize)
	if pageSize <= 0 {
		pageSize = 10 // Default page size
	}

	// Copy one page under the lock so we never sleep or block on the network
	// while holding it. (page_token is ignored in this simplified example.)
	s.mu.RLock()
	page := make([]*pb.User, 0, pageSize)
	for _, user := range s.users {
		if len(page) == pageSize {
			break
		}
		page = append(page, proto.Clone(user).(*pb.User))
	}
	s.mu.RUnlock()

	for _, user := range page {
		// Send user to client stream
		if err := stream.Send(user); err != nil {
			return err
		}
		time.Sleep(100 * time.Millisecond) // Simulate streaming delay
	}
	return nil
}

// Client streaming: Receive multiple users from client
func (s *UserServiceServer) BatchCreateUsers(stream pb.UserService_BatchCreateUsersServer) error {
	var createdIDs []int64

	// Receive stream of users from client
	for {
		req, err := stream.Recv()
		if err == io.EOF {
			// Client finished sending
			return stream.SendAndClose(&pb.BatchCreateResponse{
				CreatedCount: int32(len(createdIDs)),
				UserIds:      createdIDs,
			})
		}
		if err != nil {
			return err
		}

		// Create user
		s.mu.Lock()
		userID := s.nextID
		s.nextID++
		s.users[userID] = &pb.User{
			Id:        userID,
			Email:     req.Email,
			Name:      req.Name,
			IsActive:  true,
			CreatedAt: time.Now().Unix(),
		}
		s.mu.Unlock()

		createdIDs = append(createdIDs, userID)
		log.Printf("Created user: %d - %s", userID, req.Email)
	}
}

// Bidirectional streaming: Real-time updates
func (s *UserServiceServer) StreamUserUpdates(stream pb.UserService_StreamUserUpdatesServer) error {
	for {
		// Receive update from client
		req, err := stream.Recv()
		if err == io.EOF {
			return nil
		}
		if err != nil {
			return err
		}

		// Apply update under the lock and snapshot the result
		resp := &pb.UserUpdateResponse{}
		s.mu.Lock()
		if user, exists := s.users[req.UserId]; exists {
			// Update field (simplified example)
			switch req.Field {
			case "name":
				user.Name = req.Value
			case "email":
				user.Email = req.Value
			}
			resp.Success = true
			resp.UpdatedUser = proto.Clone(user).(*pb.User)
		}
		s.mu.Unlock()

		// Send confirmation (or failure) back
		if err := stream.Send(resp); err != nil {
			return err
		}
		if resp.Success {
			log.Printf("Updated user %d: %s = %s", req.UserId, req.Field, req.Value)
		}
	}
}

func main() {
	// Create TCP listener
	listener, err := net.Listen("tcp", ":50051")
	if err != nil {
		log.Fatalf("Failed to listen: %v", err)
	}

	// Create gRPC server
	grpcServer := grpc.NewServer(
		grpc.MaxRecvMsgSize(10*1024*1024), // 10MB max message
		grpc.MaxSendMsgSize(10*1024*1024),
	)

	// Register service
	userService := NewUserServiceServer()
	pb.RegisterUserServiceServer(grpcServer, userService)

	log.Println("gRPC server listening on :50051")
	if err := grpcServer.Serve(listener); err != nil {
		log.Fatalf("Failed to serve: %v", err)
	}
}
```

3. Code Example: gRPC Client with All Four Call Types

```go
package main

import (
	"context"
	"io"
	"log"
	"time"

	pb "github.com/example/userservice/pb"
	"google.golang.org/grpc"
	"google.golang.org/grpc/credentials/insecure"
)

func main() {
	// Create the client connection. grpc.NewClient (grpc-go 1.63+) connects
	// lazily on the first RPC and replaces the deprecated grpc.Dial,
	// grpc.WithBlock, and grpc.WithTimeout; use grpc.Dial on older versions.
	conn, err := grpc.NewClient("localhost:50051",
		grpc.WithTransportCredentials(insecure.NewCredentials()), // plaintext: local development only
	)
	if err != nil {
		log.Fatalf("Failed to create client: %v", err)
	}
	defer conn.Close()

	client := pb.NewUserServiceClient(conn)

	// One deadline for the whole demo
	ctx, cancel := context.WithTimeout(context.Background(), 30*time.Second)
	defer cancel()

	// 1. UNARY RPC: Single request, single response
	log.Println("--- Unary RPC Example ---")
	userResp, err := client.GetUser(ctx, &pb.GetUserRequest{UserId: 1})
	if err != nil {
		log.Printf("GetUser failed: %v", err)
	} else {
		log.Printf("Got user: %v", userResp.User)
	}

	// 2. SERVER STREAMING: Single request, stream of responses
	log.Println("--- Server Streaming Example ---")
	stream, err := client.ListUsers(ctx, &pb.ListUsersRequest{PageSize: 5})
	if err != nil {
		log.Fatalf("ListUsers failed: %v", err)
	}
	for {
		user, err := stream.Recv()
		if err == io.EOF {
			break // Stream ended
		}
		if err != nil {
			log.Fatalf("Stream error: %v", err)
		}
		log.Printf("Received user: %d - %s", user.Id, user.Email)
	}

	// 3. CLIENT STREAMING: Stream of requests, single response
	log.Println("--- Client Streaming Example ---")
	batchStream, err := client.BatchCreateUsers(ctx)
	if err != nil {
		log.Fatalf("BatchCreateUsers failed: %v", err)
	}

	// Send multiple users
	users := []struct{ email, name string }{
		{"alice@example.com", "Alice"},
		{"bob@example.com", "Bob"},
		{"charlie@example.com", "Charlie"},
	}
	for _, u := range users {
		if err := batchStream.Send(&pb.CreateUserRequest{Email: u.email, Name: u.name}); err != nil {
			log.Fatalf("Send failed: %v", err)
		}
		log.Printf("Sent user: %s", u.email)
	}

	// Close stream and get response
	batchResp, err := batchStream.CloseAndRecv()
	if err != nil {
		log.Fatalf("CloseAndRecv failed: %v", err)
	}
	log.Printf("Created %d users: %v", batchResp.CreatedCount, batchResp.UserIds)

	// 4. BIDIRECTIONAL STREAMING: Stream both ways
	log.Println("--- Bidirectional Streaming Example ---")
	biStream, err := client.StreamUserUpdates(ctx)
	if err != nil {
		log.Fatalf("StreamUserUpdates failed: %v", err)
	}

	// Goroutine to receive responses; done is closed when the stream ends
	done := make(chan struct{})
	go func() {
		defer close(done)
		for {
			resp, err := biStream.Recv()
			if err == io.EOF {
				return
			}
			if err != nil {
				log.Printf("Receive error: %v", err)
				return
			}
			log.Printf("Update confirmed: %v", resp.Success)
			if resp.UpdatedUser != nil {
				log.Printf("Updated user: %v", resp.UpdatedUser)
			}
		}
	}()

	// Send updates
	updates := []struct {
		userID       int64
		field, value string
	}{
		{1, "name", "Alice Updated"},
		{2, "email", "bob.new@example.com"},
	}
	for _, u := range updates {
		if err := biStream.Send(&pb.UserUpdateRequest{UserId: u.userID, Field: u.field, Value: u.value}); err != nil {
			log.Fatalf("Send failed: %v", err)
		}
		time.Sleep(500 * time.Millisecond)
	}

	if err := biStream.CloseSend(); err != nil {
		log.Printf("CloseSend failed: %v", err)
	}
	<-done // Wait until the server has sent its last response

	log.Println("Done!")
}
```

4. Protocol Buffers: Binary Serialization

How Protobuf Encodes Data:

```protobuf
message User {
  int64 id = 1;     // Field number 1
  string email = 2; // Field number 2
  string name = 3;  // Field number 3
}
```

Binary encoding (wire format):

```
Field 1 (id):    [field_number << 3 | wire_type][value]
Field 2 (email): [field_number << 3 | wire_type][length][bytes]
Field 3 (name):  [field_number << 3 | wire_type][length][bytes]
```

Comparison: JSON vs Protobuf

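```json
// JSON (minified): 61 bytes
{"id":12345,"email":"alice@example.com","name":"Alice Smith"}
```

```
// Protobuf: 35 bytes (about 43% smaller)
08 B9 60                                                  // field 1 (id): varint 12345
12 11 61 6C 69 63 65 40 65 78 61 6D 70 6C 65 2E 63 6F 6D  // field 2 (email): length 17 + bytes
1A 0B 41 6C 69 63 65 20 53 6D 69 74 68                    // field 3 (name): length 11 + bytes
```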

Performance Benefits:

- Serialization: commonly cited as 5-10x faster than JSON (varies by language, library, and message shape)

- Deserialization: commonly cited as 3-5x faster

- Payload size: typically 30-70% smaller

- Type safety: Compile-time validation

5. gRPC Over HTTP/2

gRPC leverages HTTP/2 features:

[Sequence diagram: client and server exchanging multiple RPCs over a single TCP connection]

HTTP/2 Benefits for gRPC:

- Multiplexing: Multiple RPCs over one connection

- Header compression: HPACK reduces metadata overhead

- Flow control: Prevents buffer overflow

- Server push: Not used by gRPC, but available

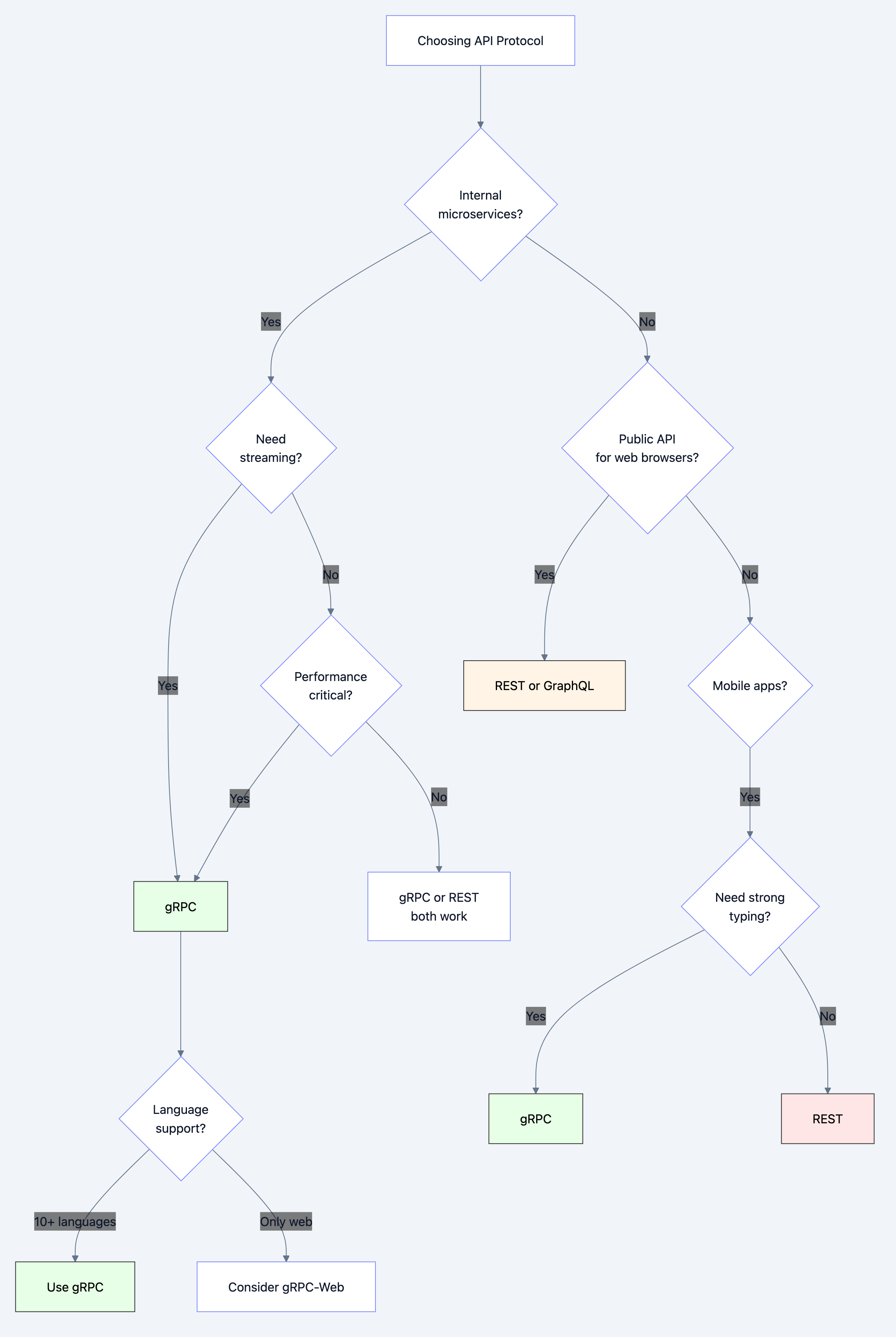

When to Use: Decision Framework

Diagram 3

Use gRPC When:

- Building microservices architecture

- Need high-performance service-to-service communication

- Want type-safe APIs with code generation

- Need streaming (server, client, or bidirectional)

- Working in polyglot environment (Go, Java, Python, etc.)

- Mobile clients (efficient battery usage)

Use REST When:

- Public APIs for third-party developers

- Browser-based clients (though gRPC-Web exists)

- Simple CRUD operations

- Need human-readable API (debugging, curl commands)

- Wide ecosystem compatibility required

Use GraphQL When:

- Flexible querying needed (mobile apps with varying data needs)

- Frontend-driven API evolution

- Need to aggregate data from multiple services

- Public API with complex data relationships

Common Pitfalls and How to Avoid Them

Pitfall 1: Breaking Proto File Changes

The Mistake:

```protobuf
// Before
message User {
  int64 id = 1;
  string email = 2;
}

// After - BREAKING CHANGE!
message User {
  int64 id = 1;
  string username = 2; // Field 2 now carries a username, same number!
}
```

Why It's Dangerous:

- Old clients will parse `username` as `email`, because only the field number travels on the wire

- No compile error, silent data corruption
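The failure mode is easy to reproduce with a tiny wire-format reader. The sketch below is not the real Protobuf library: `decodeStrings` is a hypothetical helper that handles only varint and length-delimited fields, and `oldSchema` stands in for an old client's generated code.

```go
package main

import (
	"encoding/binary"
	"errors"
	"fmt"
)

// decodeStrings walks Protobuf wire-format bytes and returns every
// length-delimited field keyed by field number. Varint fields are skipped.
func decodeStrings(b []byte) (map[uint64]string, error) {
	out := make(map[uint64]string)
	for len(b) > 0 {
		key, n := binary.Uvarint(b)
		if n <= 0 {
			return nil, errors.New("bad field key")
		}
		b = b[n:]
		field, wireType := key>>3, key&7

		switch wireType {
		case 0: // varint: read and discard
			_, n := binary.Uvarint(b)
			if n <= 0 {
				return nil, errors.New("bad varint")
			}
			b = b[n:]
		case 2: // length-delimited: length prefix, then raw bytes
			l, n := binary.Uvarint(b)
			if n <= 0 || uint64(len(b)-n) < l {
				return nil, errors.New("bad length")
			}
			out[field] = string(b[n : n+int(l)])
			b = b[n+int(l):]
		default:
			return nil, fmt.Errorf("unsupported wire type %d", wireType)
		}
	}
	return out, nil
}

func main() {
	// The new server writes id=1 and puts the username "alice_91" in field 2.
	msg := []byte{0x08, 0x01, 0x12, 0x08}
	msg = append(msg, "alice_91"...)

	fields, err := decodeStrings(msg)
	if err != nil {
		fmt.Println("decode failed:", err)
		return
	}

	// The old client's schema still says field 2 is "email".
	oldSchema := map[uint64]string{2: "email"}
	fmt.Printf("old client sees %s = %q\n", oldSchema[2], fields[2])
	// prints: old client sees email = "alice_91"
}
```

Nothing in the bytes tells the old client that the meaning of field 2 changed, which is why the corruption is silent.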

The Fix:

Follow Protobuf's evolution rules:

```protobuf
// Correct way to add field
message User {
  int64 id = 1;
  string email = 2;
  string username = 3; // New field, new number
}

// Or deprecate old field
message User {
  int64 id = 1;
  string email = 2 [deprecated = true];
  string username = 3;
}
```

Rules:

- Never change field numbers

- Never change field types

- Use `reserved` for deleted fields:

```protobuf
message User {
  reserved 4, 5; // Don't reuse these numbers
  reserved "old_field_name";
}
```

Pitfall 2: No Error Handling

The Mistake:

```go
resp, _ := client.GetUser(ctx, req) // Ignoring errors!
fmt.Println(resp.User.Email)        // Panics: resp is nil if an error occurred
```

The Fix:

Always check errors and status codes:

```go
resp, err := client.GetUser(ctx, req)
if err != nil {
	// Check gRPC status code
	if st, ok := status.FromError(err); ok {
		switch st.Code() {
		case codes.NotFound:
			log.Println("User not found")
		case codes.Unavailable:
			log.Println("Service unavailable, retry")
		case codes.DeadlineExceeded:
			log.Println("Request timeout")
		default:
			log.Printf("Error: %v", st.Message())
		}
	} else {
		log.Printf("Non-gRPC error: %v", err)
	}
	return
}
fmt.Println(resp.User.Email)
```

Pitfall 3: No Timeouts/Deadlines

The Mistake:

```go
// Request waits forever if server hangs
ctx := context.Background()
client.GetUser(ctx, req)
```

The Fix:

Always use context with timeout:

```go
ctx, cancel := context.WithTimeout(context.Background(), 5*time.Second)
defer cancel()

resp, err := client.GetUser(ctx, req)
if err != nil {
	// gRPC reports an expired deadline as codes.DeadlineExceeded
	if status.Code(err) == codes.DeadlineExceeded {
		log.Println("Request timed out after 5 seconds")
	}
	return
}
log.Printf("Got user: %v", resp.User)
```

Pitfall 4: Not Handling Stream Errors

The Mistake:

```go
stream, _ := client.ListUsers(ctx, req)
for {
	user, _ := stream.Recv() // Ignoring EOF and errors
	fmt.Println(user)
}
```

The Fix:

Properly handle EOF and errors:

```go
stream, err := client.ListUsers(ctx, req)
if err != nil {
	log.Fatal(err)
}
for {
	user, err := stream.Recv()
	if err == io.EOF {
		break // Stream ended normally
	}
	if err != nil {
		log.Fatalf("Stream error: %v", err)
	}
	fmt.Println(user)
}
```

Pitfall 5: Large Message Sizes

The Problem:

The default maximum receive message size is 4MB. Larger messages fail:

```
error: received message larger than max (5242880 vs 4194304)
```

The Fix:

Configure max message size:

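```go
// Server
grpcServer := grpc.NewServer(
	grpc.MaxRecvMsgSize(10*1024*1024), // 10MB
	grpc.MaxSendMsgSize(10*1024*1024),
)

// Client (address and creds are placeholders for your target and credentials)
conn, err := grpc.NewClient(address,
	grpc.WithTransportCredentials(creds),
	grpc.WithDefaultCallOptions(
		grpc.MaxCallRecvMsgSize(10*1024*1024),
		grpc.MaxCallSendMsgSize(10*1024*1024),
	),
)
if err != nil {
	log.Fatalf("Failed to create client: %v", err)
}
```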

Better Solution: Use streaming for large data instead of increasing limits.

Performance Implications

gRPC vs REST Performance

Benchmarks (approximate; actual numbers depend heavily on payload shape, language, and network):

| Metric | gRPC | REST/JSON |
|---|---|---|
| Serialization | 5-10x faster | Baseline |
| Payload size | 30-70% smaller | Baseline |
| Latency (LAN) | 0.5-1ms | 2-5ms |
| Throughput | 2-5x higher | Baseline |
| Memory usage | 50% lower | Baseline |

Illustrative example:

- REST: 1000 req/sec at 50ms latency

- gRPC: 5000 req/sec at 10ms latency

Optimization Strategies

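1. Connection Reuse:

```go
// Reuse one connection instead of creating one per request.
// address and options are placeholders for your target and dial options.
var clientConn *grpc.ClientConn

func init() {
	conn, err := grpc.NewClient(address, options...)
	if err != nil {
		log.Fatalf("Failed to create client: %v", err)
	}
	clientConn = conn
}

func makeRequest() {
	client := pb.NewUserServiceClient(clientConn)
	// Use client...
}
```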

2. Streaming for Large Datasets:

```protobuf
// Instead of returning huge list
rpc GetAllUsers(Empty) returns (UserList); // BAD: 10MB response

// Use server streaming
rpc GetAllUsers(Empty) returns (stream User); // GOOD: chunks
```

3. Compression:

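```go
import "google.golang.org/grpc/encoding/gzip" // importing registers the gzip compressor

// Enable gzip compression (address and creds are placeholders)
conn, err := grpc.NewClient(address,
	grpc.WithTransportCredentials(creds),
	grpc.WithDefaultCallOptions(grpc.UseCompressor(gzip.Name)),
)
```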

4. Multiplexing:

HTTP/2 multiplexing is automatic, but ensure you're not creating new connections unnecessarily.

5. Load Balancing:

```go
// Client-side load balancing (creds is a placeholder for your credentials)
conn, err := grpc.NewClient(
	"dns:///my-service:50051", // DNS-based discovery
	grpc.WithTransportCredentials(creds),
	grpc.WithDefaultServiceConfig(`{"loadBalancingPolicy":"round_robin"}`),
)
```
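The `round_robin` policy simply rotates through the resolved backend addresses. The sketch below illustrates that selection rule with the standard library only. It is not grpc-go's balancer implementation, `roundRobin` and `pick` are hypothetical names, and it ignores connection health, which the real policy takes into account.

```go
package main

import (
	"fmt"
	"sync/atomic"
)

// roundRobin hands out backends in rotation and is safe for concurrent use.
// backends must be non-empty.
type roundRobin struct {
	backends []string
	next     atomic.Uint64
}

// pick returns the next backend, wrapping around at the end of the list.
func (r *roundRobin) pick() string {
	n := r.next.Add(1) - 1 // index of this call, starting at 0
	return r.backends[n%uint64(len(r.backends))]
}

func main() {
	lb := &roundRobin{backends: []string{"10.0.0.1:50051", "10.0.0.2:50051", "10.0.0.3:50051"}}
	for i := 0; i < 4; i++ {
		fmt.Println(lb.pick())
	}
}
```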

Testing and Debugging

Testing gRPC Services

Unit Testing with Mock:

```go
import (
	"context"
	"testing"

	pb "github.com/example/userservice/pb"
	"github.com/stretchr/testify/assert"
	"github.com/stretchr/testify/mock"
	"google.golang.org/grpc"
)

// Mock client
type MockUserServiceClient struct {
	mock.Mock
}

func (m *MockUserServiceClient) GetUser(ctx context.Context, req *pb.GetUserRequest, opts ...grpc.CallOption) (*pb.GetUserResponse, error) {
	args := m.Called(ctx, req)
	return args.Get(0).(*pb.GetUserResponse), args.Error(1)
}

func TestGetUser(t *testing.T) {
	mockClient := new(MockUserServiceClient)
	expectedUser := &pb.User{Id: 1, Email: "test@example.com"}

	mockClient.On("GetUser", mock.Anything, mock.Anything).
		Return(&pb.GetUserResponse{User: expectedUser}, nil)

	// Test your code using mockClient
	resp, err := mockClient.GetUser(context.Background(), &pb.GetUserRequest{UserId: 1})

	assert.NoError(t, err)
	assert.Equal(t, expectedUser.Email, resp.User.Email)
}
```

Debugging with grpcurl

grpcurl is like curl for gRPC. The commands below assume the server has gRPC reflection enabled; otherwise pass the schema to grpcurl with `-proto`:

```bash
# List services
grpcurl -plaintext localhost:50051 list

# Describe service
grpcurl -plaintext localhost:50051 describe user.UserService

# Make request
grpcurl -plaintext -d '{"user_id": 1}' \
  localhost:50051 user.UserService/GetUser

# Server streaming
grpcurl -plaintext -d '{"page_size": 5}' \
  localhost:50051 user.UserService/ListUsers
```

Interceptors for Logging/Monitoring

```go
// Unary interceptor for logging
func loggingInterceptor(ctx context.Context, req interface{}, info *grpc.UnaryServerInfo, handler grpc.UnaryHandler) (interface{}, error) {
	start := time.Now()
	resp, err := handler(ctx, req)
	duration := time.Since(start)

	log.Printf("Method: %s, Duration: %v, Error: %v", info.FullMethod, duration, err)
	return resp, err
}

// Register interceptor
grpcServer := grpc.NewServer(
	grpc.UnaryInterceptor(loggingInterceptor),
)
```

Real-World Use Cases

Use Case 1: Netflix - Content Recommendation Engine

Netflix replaced REST APIs with gRPC for internal microservices:

Before (REST):

- 50ms average latency

- 10,000 req/sec per service

- JSON parsing overhead on every request

After (gRPC):

- 25ms average latency (50% reduction)

- 20,000 req/sec per service (100% increase)

- Binary serialization, no parsing overhead

Implementation:

```protobuf
service RecommendationService {
  rpc GetRecommendations(UserPreferences) returns (stream Movie);
}
```

Use Case 2: Square - Payment Processing

Square uses gRPC for real-time payment processing:

Requirements:

- Sub-50ms latency for authorization

- Strong typing (money must be exact)

- Streaming for transaction monitoring

Implementation:

```protobuf
service PaymentService {
  rpc AuthorizePayment(PaymentRequest) returns (PaymentResponse);
  rpc StreamTransactions(AccountRequest) returns (stream Transaction);
}

message Money {
  int64 amount = 1;    // Cents, not float!
  string currency = 2;
}
```

Use Case 3: Uber - Ride Matching

Uber uses bidirectional streaming for real-time ride matching:

```protobuf
service RideMatchingService {
  rpc StreamRideRequests(stream DriverLocation) returns (stream RideRequest);
}
```

Flow:

- Driver app streams location updates

- Server streams nearby ride requests

- Both directions happen simultaneously

- Low latency critical for user experience

Interview Questions: What You Should Know

Junior Level

Q: What is gRPC and how is it different from REST?

A: gRPC is a high-performance RPC framework using Protocol Buffers and HTTP/2. Unlike a typical REST API (text-based JSON, often over HTTP/1.1), gRPC uses binary serialization (faster, smaller), enforces a schema through generated code, and supports streaming natively.

Q: What are Protocol Buffers?

A: Protocol Buffers (Protobuf) is a language-neutral binary serialization format. You define data structures in .proto files, then generate code for multiple languages. It's faster and smaller than JSON.

Q: What are the four types of gRPC calls?

A:

- Unary: Single request, single response (like REST)

- Server streaming: Single request, stream of responses

- Client streaming: Stream of requests, single response

- Bidirectional streaming: Stream both ways simultaneously

Mid Level

Q: How does gRPC achieve better performance than REST?

A:

- Binary serialization (Protobuf) is often 5-10x faster than JSON parsing

- HTTP/2 multiplexing avoids per-request connection setup

- Smaller payloads (30-70% smaller than JSON)

- No runtime reflection for serialization

- Connection reuse and header compression

Q: Explain how Protobuf field numbers work and why they matter.

A: Field numbers are used in binary encoding to identify fields. They must never change because old clients depend on them. Rules:

- Use numbers 1-15 for frequent fields (the field key fits in 1 byte)

- Never reuse deleted field numbers

- Use `reserved` to prevent accidental reuse
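The 1-15 rule follows directly from the key encoding. The key is `(field_number << 3) | wire_type`, written as a varint, and a one-byte varint holds only 7 bits. The sketch below checks the boundaries with the standard library. `tagSize` is a hypothetical helper name.

```go
package main

import (
	"encoding/binary"
	"fmt"
)

// tagSize returns how many bytes the field key takes on the wire. The three
// wire-type bits never change the varint length, so they are left as zero.
func tagSize(field uint64) int {
	var buf [binary.MaxVarintLen64]byte
	return binary.PutUvarint(buf[:], field<<3)
}

func main() {
	for _, field := range []uint64{1, 15, 16, 2047, 2048} {
		fmt.Printf("field %4d -> %d-byte key\n", field, tagSize(field))
	}
}
```

Fields 16 through 2047 cost one extra byte per occurrence, which adds up for fields that appear in every message or in large repeated lists.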

Q: What happens if you change a Protobuf field type?

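A: In most cases it breaks wire compatibility. Old clients will misinterpret the data, causing silent corruption or errors. A few changes between closely related types (for example int32 and int64) are wire-compatible, but the safe rule is to add new fields instead of changing existing ones.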

Senior Level

Q: Design a gRPC-based microservices architecture with load balancing and service discovery.

A:

- Service Discovery: Use Kubernetes DNS or Consul

- Load Balancing: Client-side, using the service config `{"loadBalancingPolicy":"round_robin"}` (the older `grpc.WithBalancerName` option is deprecated)

- Health Checks: Implement the `grpc.health.v1.Health` service

- Circuit Breaking: Use interceptors with timeout/retry logic

- Observability: Interceptors for logging, metrics (Prometheus), tracing (Jaeger)

- Security: mTLS for service-to-service auth

- Deployment: Use service mesh (Istio) for advanced features

Q: How would you handle backward compatibility when evolving a gRPC API?

A:

- Add, don't modify: Always add new fields/methods, never change existing ones

- Use `oneof` carefully: Adding fields to `oneof` can break clients

- Versioning: Include version in package name (`user.v1`, `user.v2`)

- Deprecation: Mark old fields as `deprecated = true`

- Testing: Maintain integration tests with old client versions

- Documentation: Clearly document breaking changes

Q: What are the trade-offs of using gRPC for public APIs?

A:

Cons:

- Browser support requires grpc-web (proxy needed)

- Not human-readable (can't use curl easily)

- Steeper learning curve for API consumers

- Tooling less mature than REST

Pros:

- Much faster performance

- Strong typing and code generation

- Built-in streaming

- Better for mobile clients (battery efficiency)

Recommendation: Use REST for public APIs, gRPC for internal microservices.

Key Takeaways

- gRPC is often several times faster than REST/JSON due to binary serialization and HTTP/2

- Protocol Buffers provide type safety and code generation for 10+ languages

- Four call types: Unary, server streaming, client streaming, bidirectional

- HTTP/2 multiplexing allows multiple RPCs over one connection

- Never change Protobuf field numbers or types - only add new fields

- Always use timeouts and error handling - context.WithTimeout is essential

- Streaming is powerful for real-time features and large datasets

- Best for internal microservices - REST still better for public APIs

- Load balancing and service discovery are critical for production

- Use interceptors for cross-cutting concerns (logging, auth, metrics)

Further Learning

- Practice: Build a chat application with bidirectional streaming

- Read: gRPC official documentation and best practices

- Experiment: Compare REST vs gRPC performance with benchmarks

- Advanced: Study gRPC internals (HTTP/2 framing, flow control)

- Production: Learn Istio or Linkerd for service mesh patterns

gRPC isn't just a faster REST—it's a fundamentally different approach to building distributed systems. Master it, and you'll build services that scale to billions of requests.