Goroutine Leaks: Finding and Fixing the Silent Memory Killer

The Memory That Keeps Growing

Your Go service starts with 50MB of memory. A week later, it's using 2GB. No obvious allocations. Heap profiles look small. Yet memory grows steadily. Eventually, Kubernetes kills your pod. OOMKilled.

The culprit? Goroutine leaks. Thousands of goroutines stuck forever, each holding a stack of a few kilobytes plus everything that stack references. They never complete. They never get garbage collected. They accumulate silently until your service dies.

What Is a Goroutine Leak

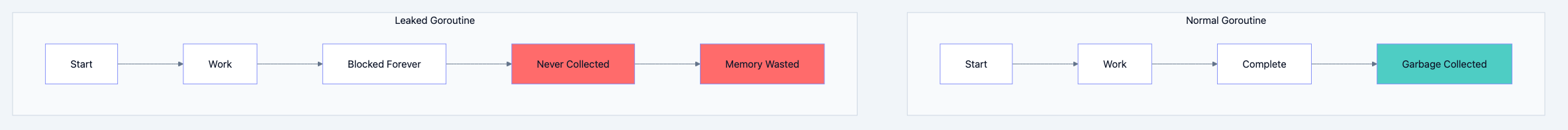

A goroutine leak occurs when a goroutine is created but never terminates. The goroutine sits there, waiting forever for something that will never happen.

Go blog diagram 1

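Unlike a classic memory leak, where the program holds on to memory it no longer needs, a goroutine leak is worse because the garbage collector cannot help. The runtime still tracks the blocked goroutine, so its stack and everything reachable from it stay alive. It just never finishes.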

The Abandoned Worker Analogy

Think of it like this: You hire workers to complete tasks. Each worker waits for instructions. But you forget about some workers. They stand in the corner waiting forever. They still get paid (memory). They still count as employees (goroutines). But they do nothing useful. Eventually, you can't afford new workers because all your budget goes to idle ones.

Common Causes of Goroutine Leaks

Cause 1: Blocked Channel Send

```go
// Filename: leak_channel_send.go
package main

import (
	"fmt"
	"runtime"
	"time"
)

func leakyChannelSend() {
	ch := make(chan int) // Unbuffered

	go func() {
		ch <- 42 // Blocks forever - no receiver!
		fmt.Println("This never prints")
	}()

	// We never receive from ch
	// Goroutine is stuck forever
}

func main() {
	for i := 0; i < 10; i++ {
		leakyChannelSend()
	}

	time.Sleep(time.Second)
	fmt.Println("Goroutines:", runtime.NumGoroutine())
	// Prints 11 (1 main + 10 leaked)
}
```

Expected Output:

```
Goroutines: 11
```

Cause 2: Blocked Channel Receive

```go
// Filename: leak_channel_receive.go
package main

import (
	"fmt"
	"runtime"
	"time"
)

func leakyChannelReceive() {
	ch := make(chan int)

	go func() {
		<-ch // Blocks forever - no sender, channel never closed!
		fmt.Println("This never prints")
	}()

	// We never send to ch or close it
}

func main() {
	for i := 0; i < 10; i++ {
		leakyChannelReceive()
	}

	time.Sleep(time.Second)
	fmt.Println("Goroutines:", runtime.NumGoroutine())
}
```
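The blocked receive above is released as soon as the channel's owner closes it, because a receive on a closed channel returns immediately with the zero value and `ok == false`. A minimal sketch of that behavior follows; `receiverExits` is an illustrative name, not part of the original example.

```go
package main

import "fmt"

// receiverExits starts a goroutine blocked on a receive, closes the channel,
// and returns what the receive observed once it unblocked.
func receiverExits() (value int, ok bool) {
	ch := make(chan int)
	exited := make(chan struct{})

	go func() {
		defer close(exited)
		value, ok = <-ch // unblocks as soon as ch is closed
	}()

	close(ch) // no value will ever be sent, so tell the receiver
	<-exited  // wait until the goroutine has really finished
	return value, ok
}

func main() {
	v, ok := receiverExits()
	fmt.Println("value:", v, "ok:", ok) // value: 0 ok: false
}
```

Only the sending side should close a channel; closing from the receiver risks a panic if a send happens later.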

Cause 3: Forgotten Goroutines in Loop

```go
// Each request spawns a goroutine that might never complete.
// callExternalAPI and processResult stand in for application code.
func handleRequest(w http.ResponseWriter, r *http.Request) {
	go func() {
		// If this external call hangs and never returns,
		// the goroutine leaks
		result := callExternalAPI(r.Context())
		processResult(result) // Never reached if API hangs
	}()

	// Response sent, but goroutine might still run
	w.WriteHeader(http.StatusAccepted)
}
```

Cause 4: Missing Context Cancellation

```go
// Filename: leak_no_context.go
func fetchForever(url string) <-chan []byte {
	ch := make(chan []byte)

	go func() {
		for {
			// No way to stop this!
			data := fetchData(url)
			ch <- data
			time.Sleep(time.Second)
		}
	}()

	return ch
}

// If the caller stops reading from the channel, the goroutine
// blocks on the send forever
```

Detecting Goroutine Leaks

Method 1: Runtime Checks

```go
// Filename: detect_leaks.go
package main

import (
	"fmt"
	"runtime"
	"time"
)

func main() {
	initial := runtime.NumGoroutine()
	fmt.Println("Initial goroutines:", initial)

	// Do work that might leak
	doSomeWork()

	// Force GC and wait for goroutines to settle
	runtime.GC()
	time.Sleep(100 * time.Millisecond)

	final := runtime.NumGoroutine()
	fmt.Println("Final goroutines:", final)

	if final > initial {
		fmt.Printf("WARNING: %d goroutines may be leaked\n", final-initial)
	}
}

func doSomeWork() {
	// Your code here
}
```
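A single fixed sleep can report goroutines that are merely still winding down. One way to reduce such false positives is to poll until the count returns to the baseline or a deadline passes. The sketch below assumes a hypothetical helper named `leakedAfter`; it is not part of any library.

```go
package main

import (
	"fmt"
	"runtime"
	"time"
)

// leakedAfter runs work, then polls until the goroutine count drops back to
// the baseline or the timeout expires. It returns how many extra goroutines
// remain (never negative).
func leakedAfter(work func(), timeout time.Duration) int {
	baseline := runtime.NumGoroutine()
	work()

	deadline := time.Now().Add(timeout)
	for {
		extra := runtime.NumGoroutine() - baseline
		if extra <= 0 {
			return 0
		}
		if time.Now().After(deadline) {
			return extra
		}
		time.Sleep(10 * time.Millisecond)
	}
}

func main() {
	clean := func() {
		done := make(chan struct{})
		go func() { close(done) }()
		<-done
	}
	leaky := func() {
		ch := make(chan int)
		go func() { ch <- 1 }() // no receiver: blocks forever
	}

	fmt.Println("clean:", leakedAfter(clean, time.Second))
	fmt.Println("leaky:", leakedAfter(leaky, 200*time.Millisecond))
}
```

The count is process-wide, so this check is only meaningful when nothing else is starting goroutines at the same time.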

Method 2: pprof Goroutine Profile

```go
// Filename: pprof_server.go
package main

import (
	"net/http"
	_ "net/http/pprof" // Import for side effects
)

func main() {
	// Start pprof server
	go func() {
		http.ListenAndServe("localhost:6060", nil)
	}()

	// Your application here
	select {}
}
```
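With that server running, the goroutine profile is served at `http://localhost:6060/debug/pprof/goroutine`; adding `?debug=2` returns a full stack trace per goroutine, which shows where each one is blocked. The same profile can also be written from inside the process with `runtime/pprof`. A minimal sketch, where `goroutineDump` is an illustrative helper name:

```go
package main

import (
	"bytes"
	"fmt"
	"os"
	"runtime/pprof"
	"time"
)

// goroutineDump returns the goroutine profile as text. With debug=1,
// goroutines that share an identical stack are grouped with a count.
func goroutineDump() (string, error) {
	var buf bytes.Buffer
	if err := pprof.Lookup("goroutine").WriteTo(&buf, 1); err != nil {
		return "", err
	}
	return buf.String(), nil
}

func main() {
	// Leak a few goroutines on purpose so they show up in the dump.
	for i := 0; i < 3; i++ {
		ch := make(chan int)
		go func() { ch <- 1 }() // no receiver
	}
	time.Sleep(50 * time.Millisecond)

	dump, err := goroutineDump()
	if err != nil {
		fmt.Fprintln(os.Stderr, "dump failed:", err)
		os.Exit(1)
	}
	fmt.Print(dump)
}
```

In a leaking service, the grouped view tends to show one stack with a large and growing count, which points straight at the blocked send or receive.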

Method 3: goleak Package (Testing)

```go
// Filename: leak_test.go
package main

import (
	"testing"

	"go.uber.org/goleak"
)

func TestMain(m *testing.M) {
	goleak.VerifyTestMain(m)
}

func TestNoLeak(t *testing.T) {
	defer goleak.VerifyNone(t)

	// Test code here
	// goleak will fail if goroutines leak
}
```

Fixing Goroutine Leaks

Fix 1: Use Buffered Channels

```go
// LEAKY: Unbuffered channel, goroutine blocks if no receiver
func leaky(result int) {
	ch := make(chan int)
	go func() {
		ch <- result // Blocks forever
	}()
}

// FIXED: Buffered channel, send succeeds even without receiver
func fixed(result int) {
	ch := make(chan int, 1)
	go func() {
		ch <- result // Succeeds immediately
	}()
}
```
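A buffer of one covers exactly one send. When several goroutines race to deliver a result and the caller only wants the first, the buffer needs one slot per sender. The following is a sketch under that assumption; `firstResult` is a hypothetical helper, and it expects at least one source.

```go
package main

import (
	"fmt"
	"runtime"
	"time"
)

// firstResult queries every source concurrently and returns the first answer.
// The buffer has one slot per sender, so the slower goroutines can still
// complete their send and exit after the caller has stopped listening.
func firstResult(sources []func() string) string {
	results := make(chan string, len(sources))
	for _, src := range sources {
		go func(f func() string) { results <- f() }(src)
	}
	return <-results
}

func main() {
	fast := func() string { return "fast" }
	slow := func() string { time.Sleep(50 * time.Millisecond); return "slow" }

	before := runtime.NumGoroutine()
	fmt.Println("winner:", firstResult([]func() string{slow, fast, slow}))

	time.Sleep(100 * time.Millisecond) // let the slow senders finish
	fmt.Println("extra goroutines:", runtime.NumGoroutine()-before)
}
```

Buffering only helps when the number of sends is bounded and known up front. For an open-ended producer, cancellation is the safer tool.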

Fix 2: Use Context for Cancellation

```go
// Filename: fix_context.go
package main

import (
	"context"
	"fmt"
	"time"
)

func fetchWithContext(ctx context.Context, url string) <-chan string {
	ch := make(chan string)

	go func() {
		defer close(ch)
		for {
			select {
			case <-ctx.Done():
				fmt.Println("Fetch cancelled, exiting gracefully")
				return
			default:
				// Simulate fetch
				time.Sleep(100 * time.Millisecond)
				select {
				case ch <- "data from " + url:
				case <-ctx.Done():
					return
				}
			}
		}
	}()

	return ch
}

func main() {
	ctx, cancel := context.WithTimeout(context.Background(), 500*time.Millisecond)
	defer cancel()

	ch := fetchWithContext(ctx, "http://example.com")
	for data := range ch {
		fmt.Println("Received:", data)
	}

	fmt.Println("Done - no leak!")
}
```

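Typical Output (one possible run):

```
Received: data from http://example.com
Received: data from http://example.com
Received: data from http://example.com
Received: data from http://example.com
Received: data from http://example.com
Fetch cancelled, exiting gracefully
Done - no leak!
```

The exact output depends on timing. The 500ms deadline expires around the end of the fifth sleep, so the fifth send races with cancellation. A run may instead stop after four Received lines, and in that case the inner select returns without printing the cancellation message. Either way the goroutine exits and the channel is closed.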

Fix 3: Done Channel Pattern

```go
// Filename: fix_done_channel.go
package main

import (
	"fmt"
	"time"
)

func worker(done <-chan struct{}, jobs <-chan int) {
	for {
		select {
		case <-done:
			fmt.Println("Worker shutting down")
			return
		case job := <-jobs:
			fmt.Println("Processing job:", job)
			time.Sleep(100 * time.Millisecond)
		}
	}
}

func main() {
	done := make(chan struct{})
	jobs := make(chan int)

	go worker(done, jobs)

	// Send some jobs
	jobs <- 1
	jobs <- 2
	jobs <- 3

	// Signal shutdown
	close(done)
	time.Sleep(200 * time.Millisecond)

	fmt.Println("Main exiting")
}
```

Expected Output:

```
Processing job: 1
Processing job: 2
Processing job: 3
Worker shutting down
Main exiting
```
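The `time.Sleep` before exiting in the example above only guesses how long the worker needs to shut down. Pairing the done channel with a `sync.WaitGroup` turns that guess into a guarantee. A minimal sketch, with `runJobs` as an illustrative name:

```go
package main

import (
	"fmt"
	"sync"
)

// runJobs starts one worker, feeds it the jobs, signals shutdown, and waits
// for the worker to exit before returning the number of jobs it handled.
func runJobs(jobs []int) int {
	done := make(chan struct{})
	in := make(chan int)
	var wg sync.WaitGroup
	handled := 0

	wg.Add(1)
	go func() {
		defer wg.Done()
		for {
			select {
			case <-done:
				return
			case <-in:
				handled++
			}
		}
	}()

	for _, j := range jobs {
		in <- j // unbuffered: returns once the worker has taken the job
	}
	close(done)
	wg.Wait() // returns only after the worker goroutine has exited
	return handled
}

func main() {
	fmt.Println("jobs handled:", runJobs([]int{1, 2, 3})) // jobs handled: 3
}
```

Because `wg.Wait` establishes a happens-before relationship with the worker's exit, reading `handled` afterwards is free of data races.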

Fix 4: Timeout Pattern

```go
// Filename: fix_timeout.go
package main

import (
	"fmt"
	"time"
)

func fetchWithTimeout(url string) (string, error) {
	// Buffered, so a send that arrives after the timeout does not block
	resultCh := make(chan string, 1)
	errCh := make(chan error, 1)

	go func() {
		// Simulate slow operation
		time.Sleep(2 * time.Second)
		resultCh <- "data"
	}()

	select {
	case result := <-resultCh:
		return result, nil
	case err := <-errCh:
		return "", err
	case <-time.After(1 * time.Second):
		return "", fmt.Errorf("timeout after 1 second")
	}
}

func main() {
	result, err := fetchWithTimeout("http://slow.example.com")
	if err != nil {
		fmt.Println("Error:", err)
		return
	}
	fmt.Println("Result:", result)
}
```

Expected Output:

```
Error: timeout after 1 second
```

Production Patterns
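In production code, the timeout from Fix 4 is usually paired with cancellation. There, the caller gives up after one second, but the background goroutine keeps working for the full two. Passing a context with a deadline into the operation lets the work itself stop early. The sketch below assumes a hypothetical `slowOperation` that honors its context, as an HTTP request built with that context would.

```go
package main

import (
	"context"
	"errors"
	"fmt"
	"time"
)

// slowOperation stands in for a real call that honors cancellation
// (hypothetical; it is not part of the original example).
func slowOperation(ctx context.Context) (string, error) {
	select {
	case <-time.After(2 * time.Second):
		return "data", nil
	case <-ctx.Done():
		return "", ctx.Err() // stop early instead of running to completion
	}
}

// fetchWithDeadline bounds the operation itself, not just the wait for it.
func fetchWithDeadline(timeout time.Duration) (string, error) {
	ctx, cancel := context.WithTimeout(context.Background(), timeout)
	defer cancel() // releases the context's timer as soon as we return
	return slowOperation(ctx)
}

func main() {
	start := time.Now()
	_, err := fetchWithDeadline(100 * time.Millisecond)
	fmt.Println("timed out:", errors.Is(err, context.DeadlineExceeded))
	fmt.Println("returned in under a second:", time.Since(start) < time.Second)
}
```

No extra goroutine is needed here, so there is nothing left running once the function returns.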

Pattern 1: Graceful Shutdown

```go
// Filename: graceful_shutdown.go
package main

import (
	"context"
	"fmt"
	"os"
	"os/signal"
	"sync"
	"syscall"
	"time"
)

type Server struct {
	wg     sync.WaitGroup
	ctx    context.Context
	cancel context.CancelFunc
}

func NewServer() *Server {
	ctx, cancel := context.WithCancel(context.Background())
	return &Server{ctx: ctx, cancel: cancel}
}

func (s *Server) StartWorker(id int) {
	s.wg.Add(1)
	go func() {
		defer s.wg.Done()
		for {
			select {
			case <-s.ctx.Done():
				fmt.Printf("Worker %d: shutting down\n", id)
				return
			default:
				fmt.Printf("Worker %d: working\n", id)
				time.Sleep(500 * time.Millisecond)
			}
		}
	}()
}

func (s *Server) Shutdown() {
	s.cancel()
	s.wg.Wait()
	fmt.Println("All workers stopped")
}

func main() {
	server := NewServer()

	// Start workers
	for i := 1; i <= 3; i++ {
		server.StartWorker(i)
	}

	// Wait for interrupt
	sigCh := make(chan os.Signal, 1)
	signal.Notify(sigCh, syscall.SIGINT, syscall.SIGTERM)
	<-sigCh

	fmt.Println("\nReceived shutdown signal")
	server.Shutdown()
}
```

Pattern 2: Worker Pool with Cleanup

```go
// Filename: worker_pool_cleanup.go
package main

import (
	"context"
	"fmt"
	"sync"
)

type Pool struct {
	workers int
	jobs    chan func()
	wg      sync.WaitGroup
	ctx     context.Context
	cancel  context.CancelFunc
}

func NewPool(workers int) *Pool {
	ctx, cancel := context.WithCancel(context.Background())
	p := &Pool{
		workers: workers,
		jobs:    make(chan func(), 100),
		ctx:     ctx,
		cancel:  cancel,
	}
	p.start()
	return p
}

func (p *Pool) start() {
	for i := 0; i < p.workers; i++ {
		p.wg.Add(1)
		go func() {
			defer p.wg.Done()
			for {
				select {
				case <-p.ctx.Done():
					return
				case job := <-p.jobs:
					job()
				}
			}
		}()
	}
}

// Submit queues a job; once the pool is stopped it may return without queuing.
func (p *Pool) Submit(job func()) {
	select {
	case p.jobs <- job:
	case <-p.ctx.Done():
	}
}

// Stop cancels the workers and waits for them to exit. The jobs channel is
// deliberately not closed: a Submit racing with the close would panic with
// "send on closed channel". Jobs still queued at this point may never run.
func (p *Pool) Stop() {
	p.cancel()
	p.wg.Wait()
}

func main() {
	pool := NewPool(3)

	for i := 0; i < 5; i++ {
		i := i
		pool.Submit(func() {
			fmt.Printf("Job %d executed\n", i)
		})
	}

	pool.Stop()
	fmt.Println("Pool stopped cleanly - no leaks")
}
```
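The pool above stops promptly, but because cancellation is signalled first, jobs still sitting in the queue can be abandoned. When every submitted job must run, an alternative is to make closing the channel the stop signal and let each worker `range` over it. This is a sketch of that variant, not the pool's own API; `runAll` is an illustrative name.

```go
package main

import (
	"fmt"
	"sync"
)

// runAll executes every job on a fixed number of workers and returns once all
// of them have finished. Closing the channel is the stop signal: range exits
// after the queue is empty, so no job is dropped and no worker is left behind.
func runAll(workers int, jobs []func()) {
	queue := make(chan func(), len(jobs))
	var wg sync.WaitGroup

	for i := 0; i < workers; i++ {
		wg.Add(1)
		go func() {
			defer wg.Done()
			for job := range queue {
				job()
			}
		}()
	}

	for _, job := range jobs {
		queue <- job
	}
	close(queue) // only the sender closes, and only after the last send
	wg.Wait()
}

func main() {
	var mu sync.Mutex
	executed := 0

	jobs := make([]func(), 5)
	for i := range jobs {
		jobs[i] = func() {
			mu.Lock()
			executed++
			mu.Unlock()
		}
	}

	runAll(3, jobs)
	fmt.Println("jobs executed:", executed) // jobs executed: 5
}
```

The trade-off is that shutdown takes as long as the remaining jobs do, so this variant suits batch work better than a long-lived service that must stop on a deadline.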

Detection Checklist

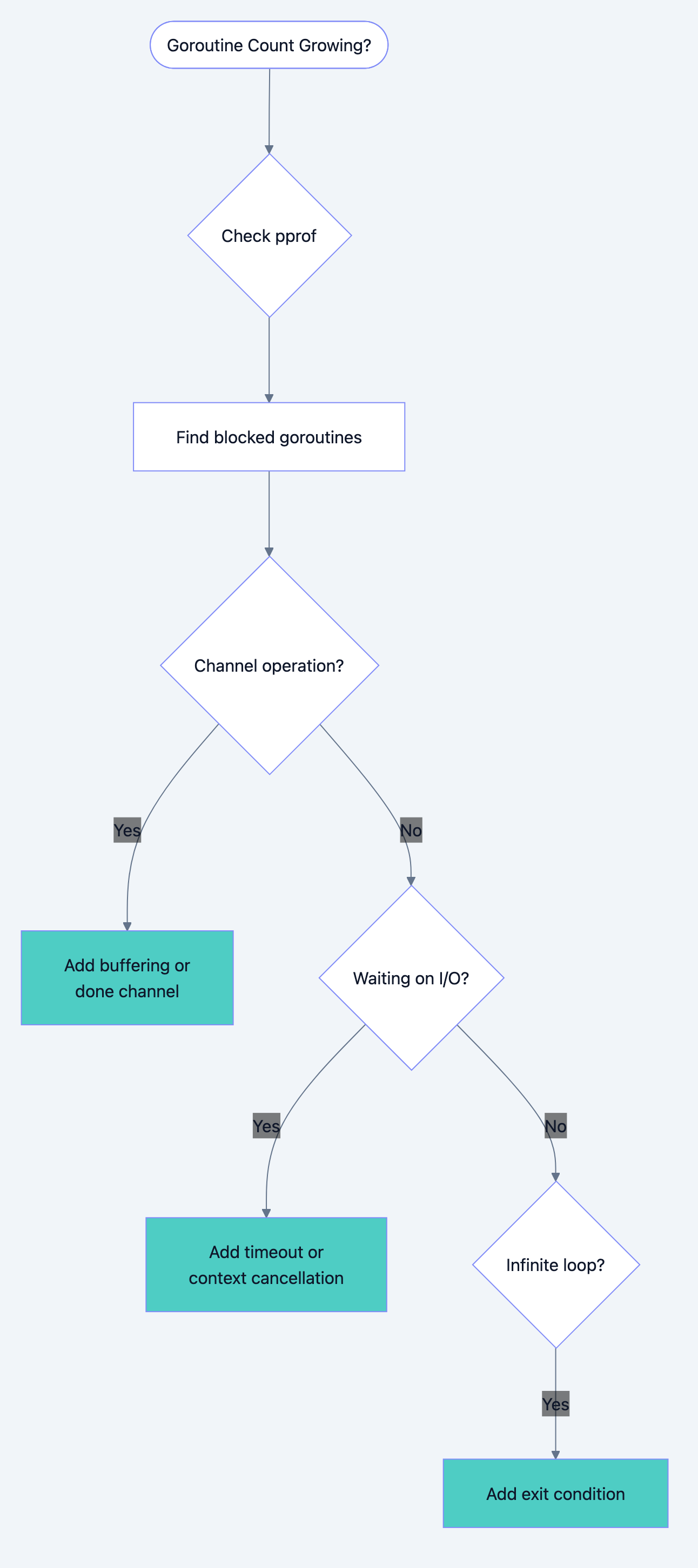

Go blog diagram 2

| Symptom | Likely Cause | Fix |
|---|---|---|
| Goroutine blocked on chan send | No receiver | Buffer channel or add receiver |
| Goroutine blocked on chan receive | No sender, not closed | Close channel when done |
| Goroutine in select {} | No exit case | Add context or done channel |
| Goroutine in time.Sleep loop | No stop signal | Add done channel |
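For the last row, a sleep loop can be given a stop signal by replacing `time.Sleep` with a ticker inside a `select`. A minimal sketch, assuming a hypothetical `poll` helper:

```go
package main

import (
	"fmt"
	"time"
)

// poll calls tick on every interval until done is closed.
func poll(done <-chan struct{}, interval time.Duration, tick func()) {
	ticker := time.NewTicker(interval)
	defer ticker.Stop() // release the ticker when the loop exits

	for {
		select {
		case <-done:
			return
		case <-ticker.C:
			tick()
		}
	}
}

func main() {
	done := make(chan struct{})
	finished := make(chan struct{})
	ticks := 0

	go func() {
		defer close(finished)
		poll(done, 10*time.Millisecond, func() { ticks++ })
	}()

	time.Sleep(55 * time.Millisecond)
	close(done)
	<-finished // the loop has exited, so reading ticks is safe
	fmt.Println("stopped after", ticks, "ticks")
}
```

Unlike a bare `time.Sleep`, the `select` reacts to the stop signal immediately instead of finishing the current sleep first.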

What You Learned

You now understand that:

- Goroutine leaks are silent: They don't trigger errors

- Common causes are channel operations: Unbuffered channels without receivers

- Context enables cancellation: Always propagate context

- pprof reveals leaks: Use goroutine profiles to detect

- goleak catches leaks in tests: Automated leak detection

- Cleanup requires patterns: Done channels, graceful shutdown

Your Next Steps

- Audit: Run pprof on your services and check goroutine counts

- Read Next: Learn about the context package for proper cancellation

- Test: Add goleak to your test suite

Goroutine leaks are sneaky. They don't crash your program immediately. They slowly consume resources until something breaks. Now you know how to find them, fix them, and prevent them. Your services will thank you.