Go Scheduler: The Engine Behind Your Goroutines

A Million Goroutines, Eight Cores

You launch a million goroutines on an 8-core machine. Each runs. None blocks the others excessively. The system stays responsive. How?

Operating systems struggle with a million threads. Context switching would eat much of the CPU time, and the memory reserved for thread stacks would balloon. Yet Go makes a million goroutines feel effortless.

The secret is Go's scheduler. It's a sophisticated piece of software that maps potentially millions of goroutines onto a handful of OS threads efficiently.

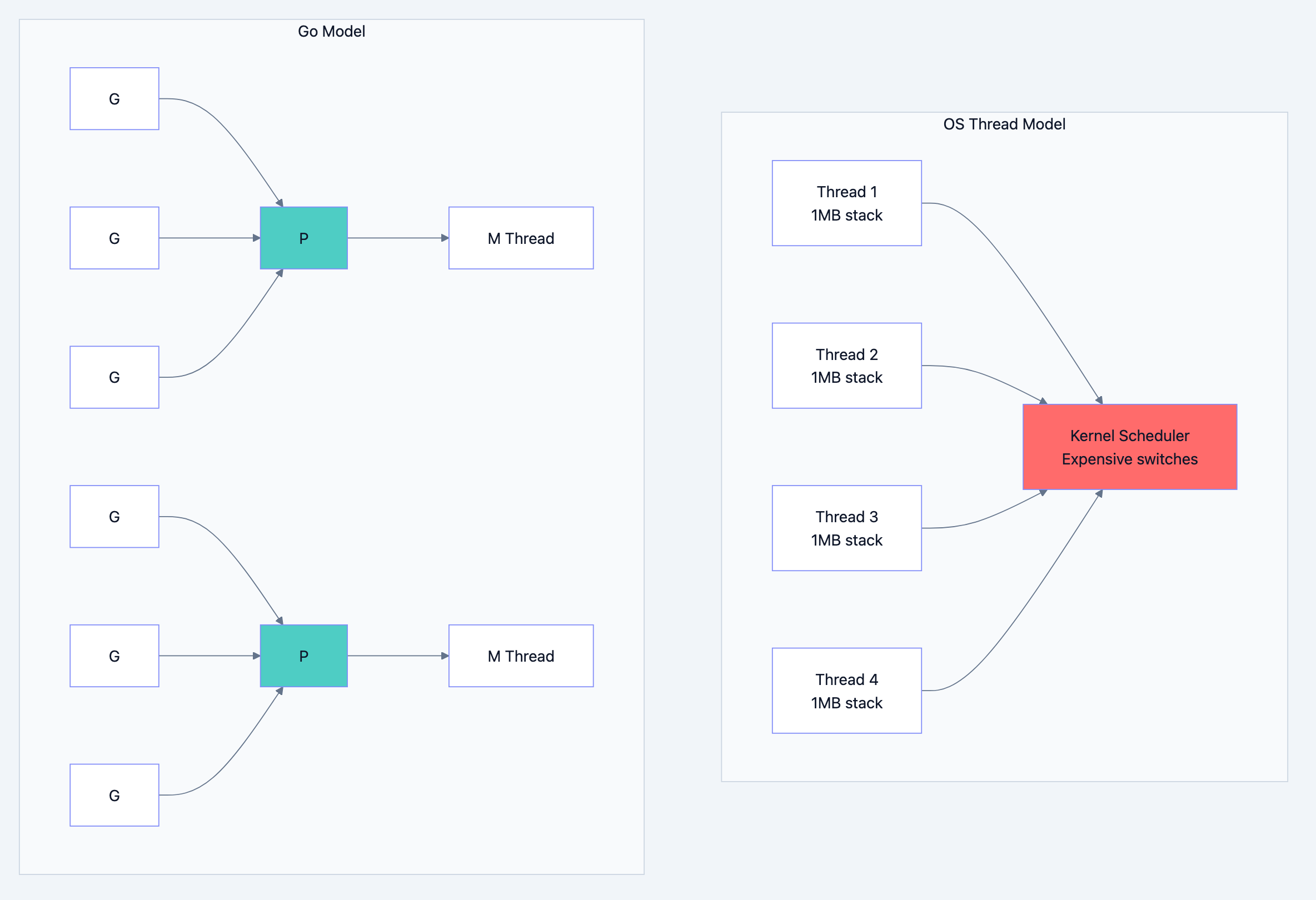

Why Not Just Use OS Threads

Operating system threads are expensive. Each thread needs:

- 1 MB or more of stack memory reserved up front (a goroutine starts with a stack of about 2 KB)

- Kernel involvement for creation

- Expensive context switches (trap into the kernel, save and restore the full register state)

- Kernel scheduling overhead

Go blog diagram 1

Go's scheduler runs in user space, so switching between goroutines avoids the kernel entirely and is typically much cheaper than switching between threads.

The Traffic Controller Analogy

Think of it like this: imagine an airport with thousands of planes (goroutines) but only a few runways (OS threads). The air traffic controller (scheduler) decides which planes take off when. Planes wait in queues. When one plane is waiting for fuel (I/O), another takes its slot. The controller balances load across runways and handles emergencies. Go's scheduler plays the same role for your goroutines.

The GMP Model Explained

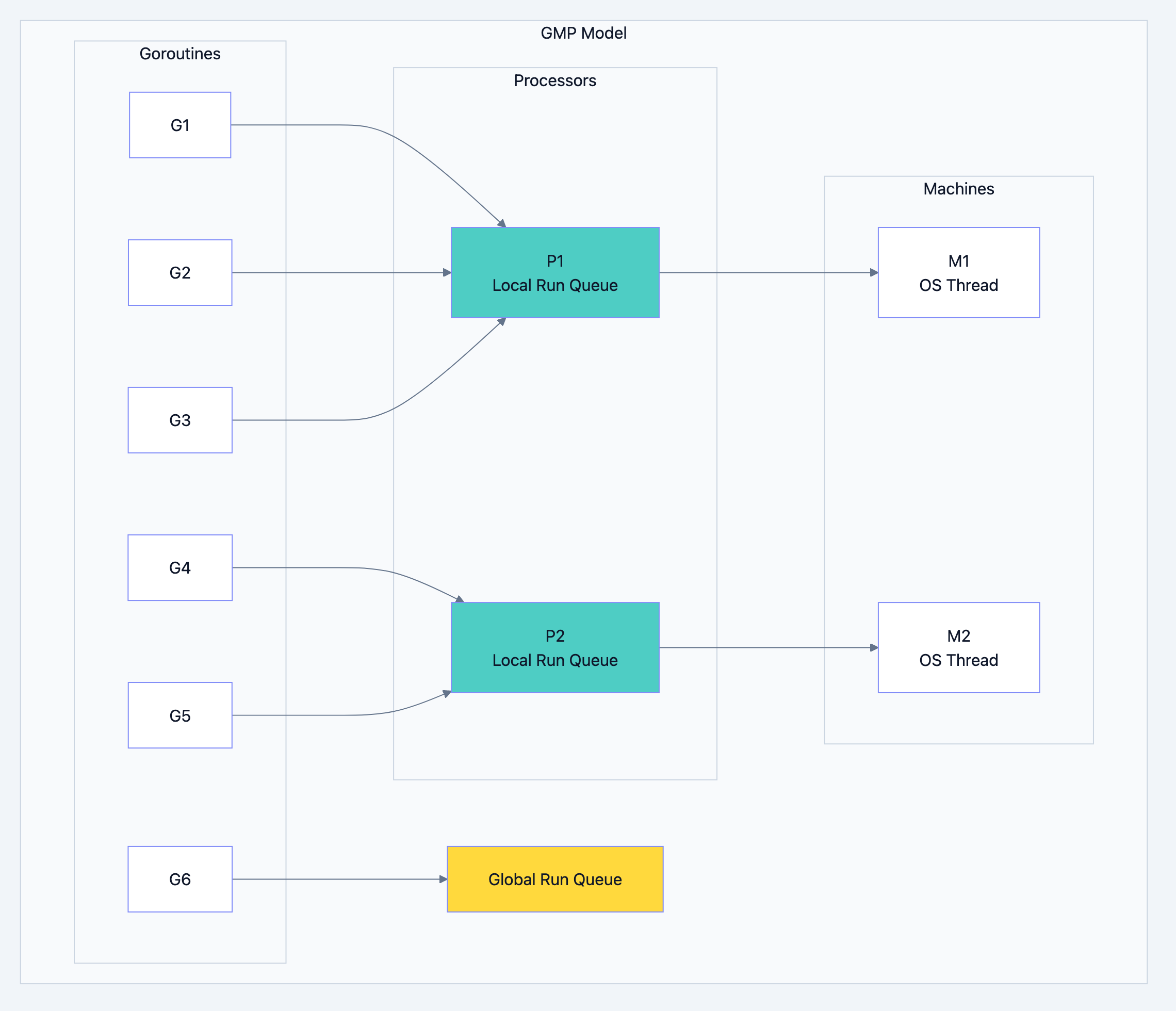

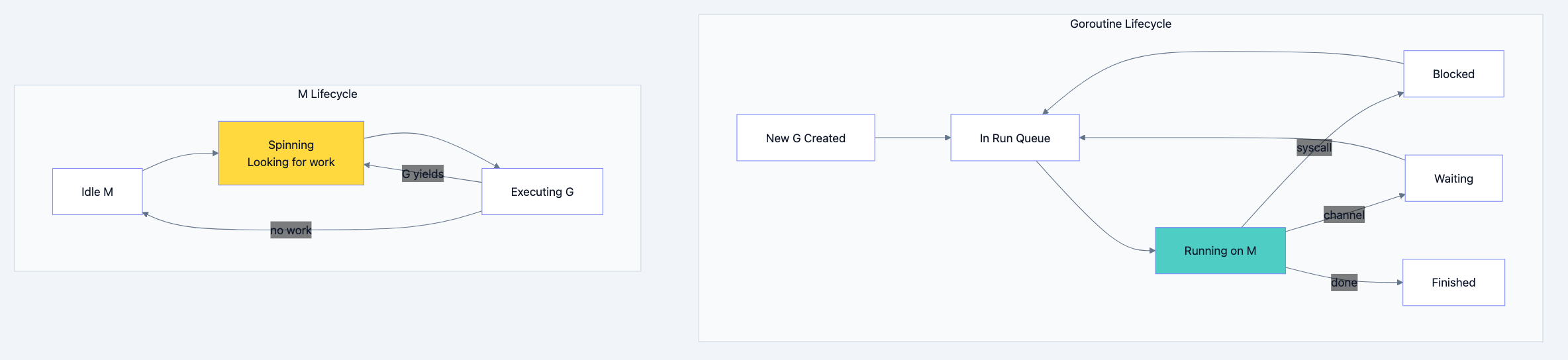

Go's scheduler uses three main entities:

G (Goroutine): The work to be done. Your code, its stack, instruction pointer.

M (Machine): An OS thread. Actually runs the code.

P (Processor): A logical processor. Holds a run queue of goroutines. Required for an M to execute G.

Go blog diagram 2

The Number of Ps

By default, GOMAXPROCS equals the number of logical CPUs available to the process. This is the maximum number of goroutines that can execute Go code truly in parallel at any moment.

```go
// Filename: gomaxprocs.go
package main

import (
	"fmt"
	"runtime"
)

func main() {
	// Default: number of CPU cores
	fmt.Println("GOMAXPROCS:", runtime.GOMAXPROCS(0))

	// You can change it (rarely needed)
	runtime.GOMAXPROCS(4)
	fmt.Println("After change:", runtime.GOMAXPROCS(0))
}
```

How Scheduling Works

Step 1: Goroutine Creation

When you write `go func()`, a new G is created and placed in the current P's local run queue.

```go
go func() {
	// New G created
	// Added to current P's local queue
}()
```

Step 2: Running a Goroutine

An M attached to P takes a G from P's local queue and executes it.

Step 3: Goroutine Yields

Goroutines yield control at specific points:

- Channel operations (send/receive)

- System calls

- Function calls (checking stack growth)

- Explicit runtime.Gosched()

- Blocking operations

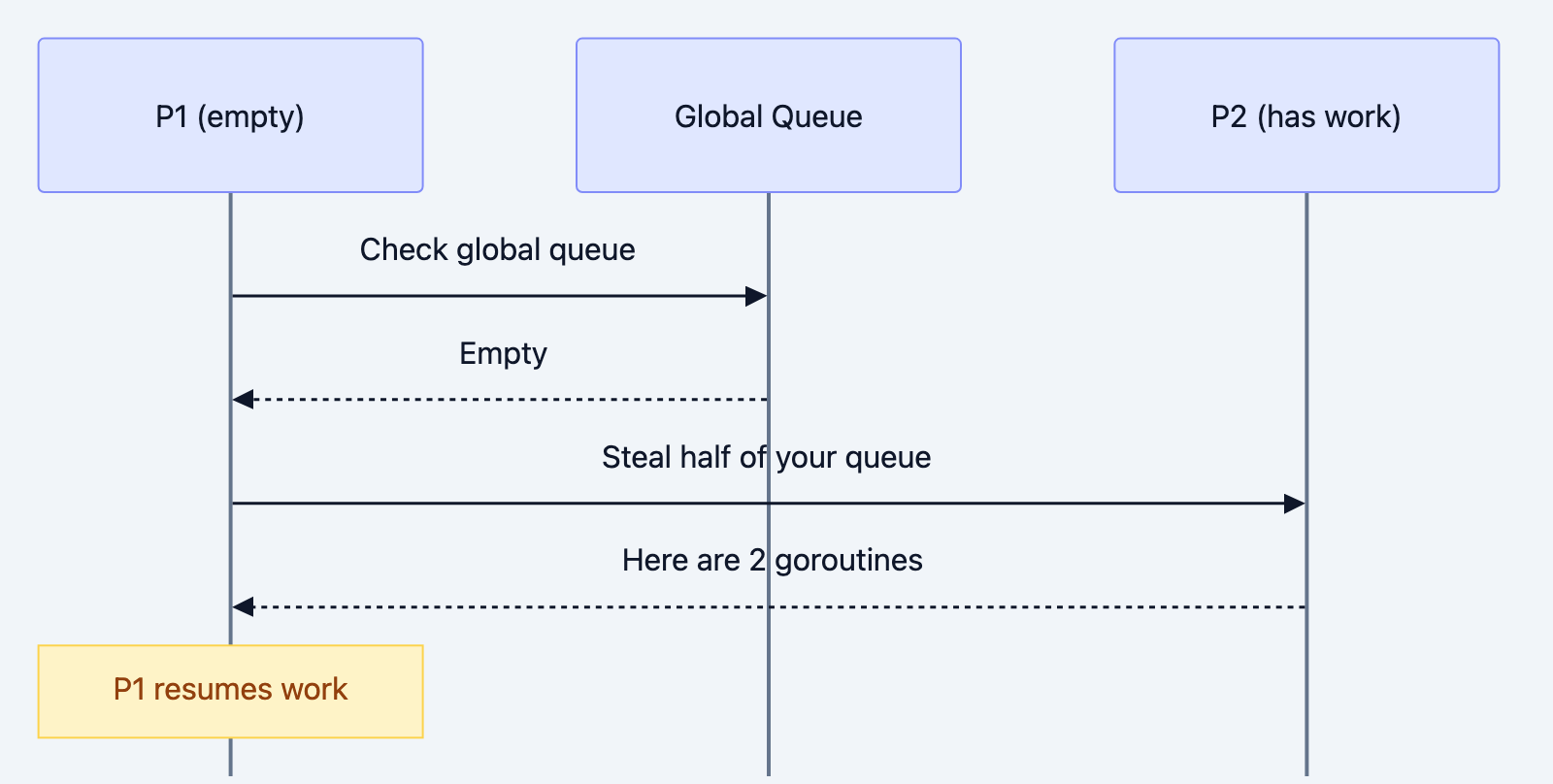

Step 4: Work Stealing

If a P's local queue is empty, it tries:

- Check global run queue

- Check network poller

- Steal from another P's queue
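The lookup order above can be sketched with a toy model. This is not the runtime's code: the types G, P, and Sched and the method next are invented for illustration, the network poller step is left out, and there is no locking. It only shows the idea of falling back from the local queue to the global queue and then taking half of another P's queue.

```go
package main

import "fmt"

// G stands in for a goroutine; here it is only an ID.
type G int

// P is a toy logical processor with a local run queue.
type P struct {
	local []G
}

// Sched is a toy scheduler: a global queue plus a set of Ps.
type Sched struct {
	global []G
	ps     []*P
}

// next returns the next G for p: the local queue first, then the global
// queue, then half of another P's local queue.
func (s *Sched) next(p *P) (G, bool) {
	if len(p.local) > 0 {
		g := p.local[0]
		p.local = p.local[1:]
		return g, true
	}
	if len(s.global) > 0 {
		g := s.global[0]
		s.global = s.global[1:]
		return g, true
	}
	for _, victim := range s.ps {
		if victim == p || len(victim.local) == 0 {
			continue
		}
		// Take half of the victim's queue, rounded up.
		n := (len(victim.local) + 1) / 2
		stolen := victim.local[:n]
		victim.local = victim.local[n:]
		// Run the first stolen G now and queue the rest locally.
		p.local = append(p.local, stolen[1:]...)
		return stolen[0], true
	}
	return 0, false
}

func main() {
	busy := &P{local: []G{1, 2, 3, 4}}
	idle := &P{}
	s := &Sched{ps: []*P{busy, idle}}

	g, _ := s.next(idle)
	fmt.Println(g, idle.local, busy.local) // prints: 1 [2] [3 4]
}
```

Stealing a batch rather than a single G means the idle P does not have to come back to the same victim immediately.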

Go blog diagram 3

Work Stealing in Action

```go
// Filename: work_stealing_demo.go
package main

import (
	"fmt"
	"runtime"
	"sync"
)

func main() {
	runtime.GOMAXPROCS(2) // 2 Ps

	var wg sync.WaitGroup

	// Create unbalanced load:
	// 100 goroutines started from one P
	for i := 0; i < 100; i++ {
		wg.Add(1)
		go func(id int) {
			defer wg.Done()
			// CPU-bound work. A sleeping goroutine is parked and does
			// not occupy a P, so it would not show stealing.
			sum := 0
			for j := 0; j < 1_000_000; j++ {
				sum += j * id
			}
			_ = sum
		}(i)
	}

	// Work stealing lets the second P pick up queued Gs,
	// even though all Gs started on one P
	wg.Wait()
	fmt.Println("All done")
}
```

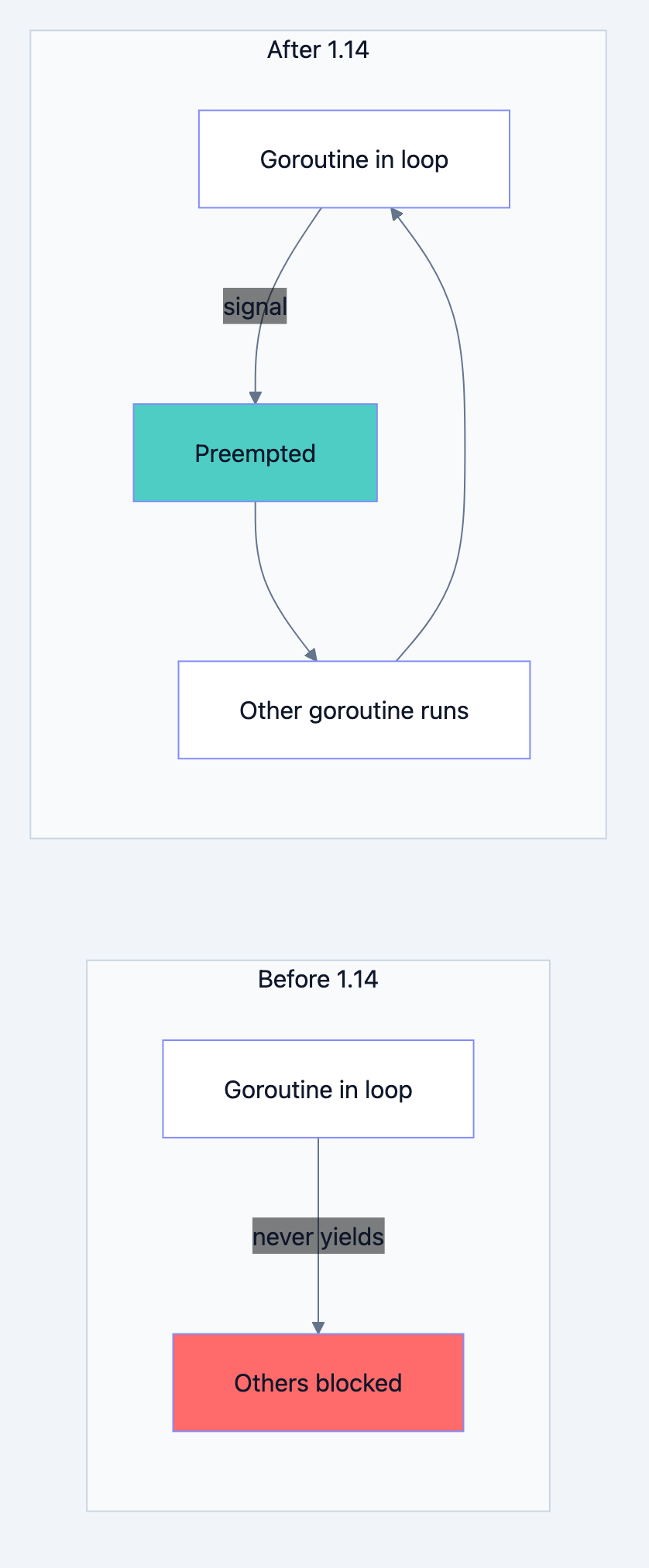

Preemption: No More Infinite Loops

Before Go 1.14, a tight loop could starve other goroutines:

```go
// Before Go 1.14: This would block other goroutines
go func() {
	for {
		// No function calls = no yield points
	}
}()
```

Go 1.14 introduced asynchronous preemption, implemented with signals on Unix-like systems. The scheduler can now interrupt a goroutine even in a tight loop that makes no function calls.

Go blog diagram 4

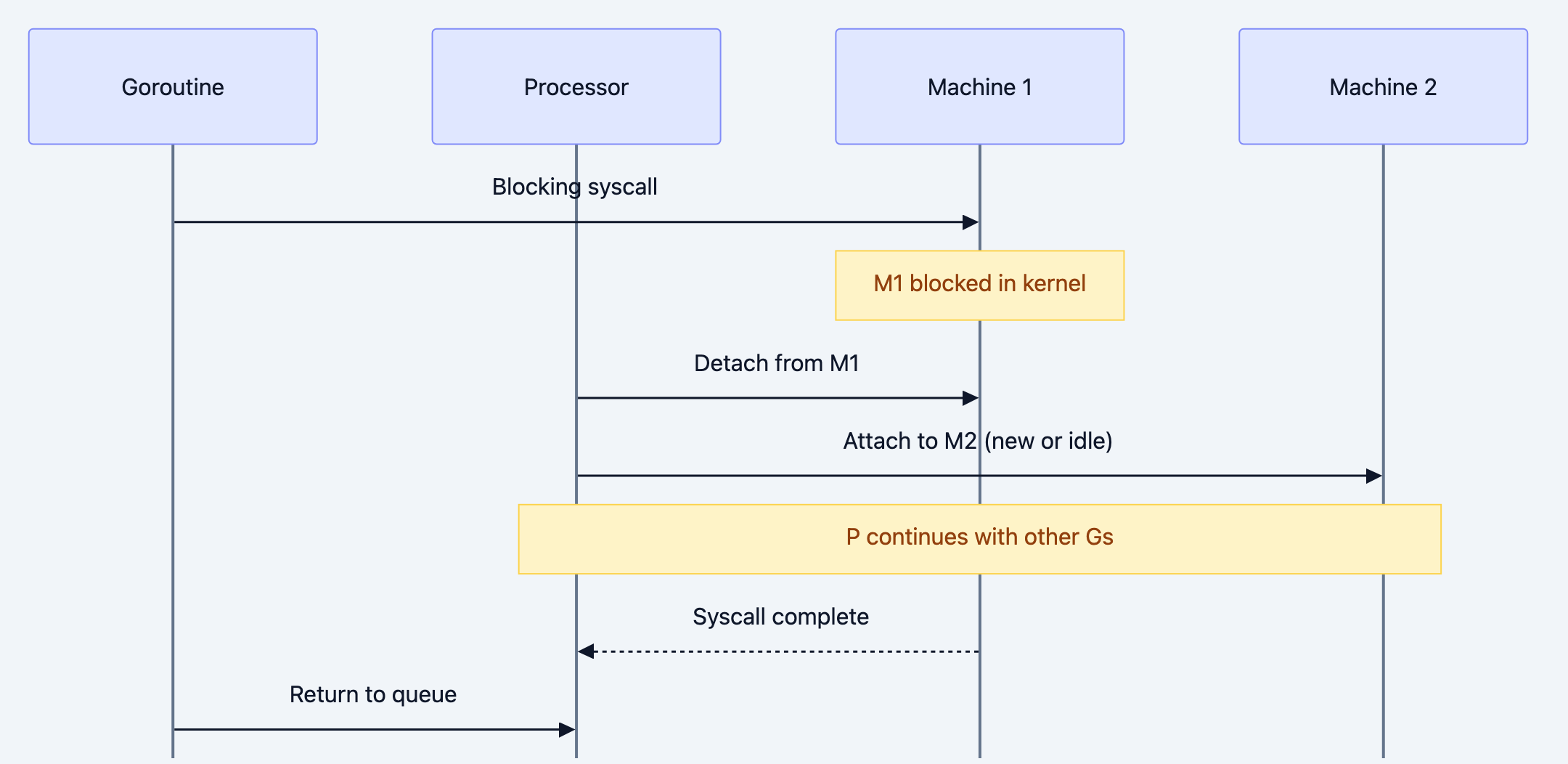

Scheduling During System Calls

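When a goroutine makes a blocking system call (file I/O, for example), the M executing it blocks in the kernel. But P shouldn't wait! The runtime detaches P from the blocked M and hands it to another M, either an idle one or a newly started thread, so the remaining goroutines in P's queue keep running.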

Go blog diagram 5

This is why blocking operations don't block the whole scheduler.

Network Poller Integration

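For network I/O, Go uses non-blocking sockets together with the operating system's readiness notification mechanism (epoll on Linux, kqueue on BSD and macOS). The network poller does not tie up a thread per connection: the scheduler and the runtime's background monitor thread check it for goroutines whose I/O is ready.

```go
// When you write:
conn.Read(buffer)

// Go's runtime:
// 1. Tries non-blocking read
// 2. If would block, registers with network poller
// 3. Goroutine is parked (not running, not in queue)
// 4. Network poller wakes goroutine when data arrives
```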

This means network I/O is highly efficient. Thousands of goroutines can wait on network with minimal overhead.
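To make the parking and waking concrete, here is a minimal sketch that opens several loopback TCP connections, leaves one goroutine blocked in Read per connection, and then writes a byte to each so the readers wake up. It assumes the loopback interface is usable; the helper name wakeParkedReaders is invented for this example.

```go
package main

import (
	"fmt"
	"net"
	"sync"
)

// wakeParkedReaders opens n loopback connections, lets n goroutines block
// in Read, then writes one byte per connection and returns how many
// readers woke up with data. (Hypothetical helper for this example.)
func wakeParkedReaders(n int) (int, error) {
	ln, err := net.Listen("tcp", "127.0.0.1:0")
	if err != nil {
		return 0, err
	}
	defer ln.Close()

	var wg sync.WaitGroup
	woken := make(chan struct{}, n)
	clients := make([]net.Conn, 0, n)

	for i := 0; i < n; i++ {
		client, err := net.Dial("tcp", ln.Addr().String())
		if err != nil {
			return 0, err
		}
		defer client.Close()
		server, err := ln.Accept()
		if err != nil {
			return 0, err
		}
		clients = append(clients, client)

		wg.Add(1)
		go func(c net.Conn) {
			defer wg.Done()
			defer c.Close()
			buf := make([]byte, 1)
			// Read blocks only this goroutine; it is parked until
			// the socket becomes readable.
			if _, err := c.Read(buf); err == nil {
				woken <- struct{}{}
			}
		}(server)
	}

	// Make every connection readable, which wakes the parked readers.
	for _, c := range clients {
		if _, err := c.Write([]byte{1}); err != nil {
			return 0, err
		}
	}
	wg.Wait()
	return len(woken), nil
}

func main() {
	n, err := wakeParkedReaders(100)
	fmt.Println(n, err)
}
```

While the readers wait, they appear in runtime.NumGoroutine but do not each hold an OS thread.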

Visualizing the Scheduler

Use the trace tool to see scheduling in action:

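```go
// Filename: trace_example.go
package main

import (
	"log"
	"os"
	"runtime/trace"
	"sync"
)

func main() {
	f, err := os.Create("trace.out")
	if err != nil {
		log.Fatal(err)
	}
	defer f.Close()

	if err := trace.Start(f); err != nil {
		log.Fatal(err)
	}
	defer trace.Stop()

	var wg sync.WaitGroup
	for i := 0; i < 10; i++ {
		wg.Add(1)
		go func(id int) {
			defer wg.Done()
			// Work here
		}(i)
	}
	wg.Wait()
}
```

Run the program to produce trace.out, then run go tool trace trace.out. This opens a browser showing goroutine scheduling, including: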

- When each G runs

- Which P/M it runs on

- When it blocks and why

- Work stealing events

Scheduler-Friendly Code

Use Buffered Channels Wisely

```go
// Can cause excessive scheduling
unbuffered := make(chan int)

// Reduces scheduling overhead
buffered := make(chan int, 100)
```

Batch Work

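```go
// WRONG: One goroutine per tiny task
for i := range items {
	go process(items[i]) // A million items means a million goroutines!
}

// RIGHT: Split the work into one batch per worker
workers := runtime.GOMAXPROCS(0)
batch := (len(items) + workers - 1) / workers // round up so no item is dropped

var wg sync.WaitGroup
for start := 0; start < len(items); start += batch {
	end := start + batch
	if end > len(items) {
		end = len(items)
	}
	wg.Add(1)
	go func(start, end int) {
		defer wg.Done()
		for i := start; i < end; i++ {
			process(items[i])
		}
	}(start, end)
}
wg.Wait()
```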
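When tasks take uneven amounts of time, a common alternative is a fixed pool of workers that pull items from a channel. The following is a minimal runnable sketch of that variant; sumSquares and its workload are placeholders chosen for this example, not part of the code above.

```go
package main

import (
	"fmt"
	"runtime"
	"sync"
)

// sumSquares adds up v*v for every item using a fixed number of worker
// goroutines that pull work from a shared channel.
func sumSquares(items []int, workers int) int {
	if workers < 1 {
		workers = 1
	}
	jobs := make(chan int, 64)         // small buffer to cut down on handoffs
	partial := make(chan int, workers) // one partial sum per worker

	var wg sync.WaitGroup
	for w := 0; w < workers; w++ {
		wg.Add(1)
		go func() {
			defer wg.Done()
			sum := 0
			for v := range jobs {
				sum += v * v
			}
			partial <- sum
		}()
	}

	for _, v := range items {
		jobs <- v
	}
	close(jobs) // lets the workers' range loops finish
	wg.Wait()
	close(partial)

	total := 0
	for s := range partial {
		total += s
	}
	return total
}

func main() {
	items := []int{1, 2, 3, 4, 5}
	fmt.Println(sumSquares(items, runtime.GOMAXPROCS(0))) // prints: 55
}
```

The number of goroutines stays equal to the worker count no matter how many items arrive, at the cost of one channel operation per item.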

Avoid Lock Contention

```go
// WRONG: All goroutines fight for one lock
var globalMu sync.Mutex
var globalCounter int

// RIGHT: Reduce contention with sharding
type ShardedCounter struct {
	shards [16]struct {
		mu    sync.Mutex
		count int
	}
}

func (c *ShardedCounter) Inc(id int) {
	shard := &c.shards[id%16]
	shard.mu.Lock()
	shard.count++
	shard.mu.Unlock()
}
```
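A sharded counter also needs a way to read the value back. The self-contained sketch below adds a Total method that sums the shards and drives the counter from several goroutines. The names counter, Total, and countConcurrently are assumptions made for this example, and it assumes non-negative ids.

```go
package main

import (
	"fmt"
	"sync"
)

const numShards = 16

// counter spreads increments over several independently locked shards.
type counter struct {
	shards [numShards]struct {
		mu    sync.Mutex
		count int
	}
}

// Inc adds one to the shard selected by id (id must be non-negative).
func (c *counter) Inc(id int) {
	s := &c.shards[id%numShards]
	s.mu.Lock()
	s.count++
	s.mu.Unlock()
}

// Total sums all shards, locking each one briefly.
func (c *counter) Total() int {
	total := 0
	for i := range c.shards {
		s := &c.shards[i]
		s.mu.Lock()
		total += s.count
		s.mu.Unlock()
	}
	return total
}

// countConcurrently increments one counter from several goroutines and
// returns the final total.
func countConcurrently(goroutines, perGoroutine int) int {
	var c counter
	var wg sync.WaitGroup
	for g := 0; g < goroutines; g++ {
		wg.Add(1)
		go func(id int) {
			defer wg.Done()
			for i := 0; i < perGoroutine; i++ {
				c.Inc(id)
			}
		}(g)
	}
	wg.Wait()
	return c.Total()
}

func main() {
	fmt.Println(countConcurrently(8, 1000)) // prints: 8000
}
```

Reads become more expensive than with a single counter, so sharding pays off mainly when increments far outnumber reads.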

Key Scheduler Metrics

```go
// Filename: scheduler_metrics.go
package main

import (
	"fmt"
	"runtime"
)

func main() {
	fmt.Println("NumCPU:", runtime.NumCPU())
	fmt.Println("GOMAXPROCS:", runtime.GOMAXPROCS(0))
	fmt.Println("NumGoroutine:", runtime.NumGoroutine())

	// More detailed stats
	var memStats runtime.MemStats
	runtime.ReadMemStats(&memStats)
	fmt.Println("Num GC:", memStats.NumGC)
}
```
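For scheduler-specific numbers, the standard runtime/metrics package is another option. The sketch below reads the goroutine count and the scheduling latency histogram; it assumes a Go version recent enough to export both metrics (roughly Go 1.17 or later) and checks each value's kind in case one is unavailable. The helper name schedSnapshot is made up for this example.

```go
package main

import (
	"fmt"
	"runtime/metrics"
)

// schedSnapshot returns the current goroutine count and the total number
// of samples recorded in the scheduling latency histogram.
// (Hypothetical helper, written for this example.)
func schedSnapshot() (goroutines, latencySamples uint64) {
	samples := []metrics.Sample{
		{Name: "/sched/goroutines:goroutines"},
		{Name: "/sched/latencies:seconds"},
	}
	metrics.Read(samples)

	// A metric the runtime does not support comes back as KindBad,
	// so check the kind before reading the value.
	if samples[0].Value.Kind() == metrics.KindUint64 {
		goroutines = samples[0].Value.Uint64()
	}
	if samples[1].Value.Kind() == metrics.KindFloat64Histogram {
		for _, c := range samples[1].Value.Float64Histogram().Counts {
			latencySamples += c
		}
	}
	return goroutines, latencySamples
}

func main() {
	g, n := schedSnapshot()
	fmt.Println("goroutines:", g)
	fmt.Println("scheduling latency samples:", n)
}
```

The latency histogram describes how long goroutines waited in a runnable state before running, which is often a more direct sign of scheduler pressure than the goroutine count alone.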

GODEBUG Scheduler Tracing

```bash
GODEBUG=schedtrace=1000 ./myprogram
```

Output every 1000ms:

```
SCHED 1000ms: gomaxprocs=8 idleprocs=6 threads=9 spinningthreads=1 idlethreads=2 runqueue=0 [1 0 0 0 0 0 0 0]
```

- gomaxprocs: Number of Ps

- idleprocs: Ps without work

- threads: Total Ms

- runqueue: Global queue size

- [1 0 0...]: Each P's local queue size
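If you want to chart these numbers rather than read them by eye, the line is simple to parse. The sketch below is one possible parser, assuming the key=value layout shown in the sample line; parseSchedLine is a name invented for this example, and the exact set of fields can differ between Go versions.

```go
package main

import (
	"fmt"
	"strconv"
	"strings"
)

// parseSchedLine extracts the key=value fields and the per-P local queue
// sizes from one schedtrace line. (Hypothetical helper for this example.)
func parseSchedLine(line string) (fields map[string]int, localQueues []int) {
	fields = make(map[string]int)

	// Split off the bracketed per-P queue sizes, if present.
	if open := strings.Index(line, "["); open >= 0 {
		if end := strings.Index(line[open:], "]"); end > 0 {
			for _, tok := range strings.Fields(line[open+1 : open+end]) {
				if n, err := strconv.Atoi(tok); err == nil {
					localQueues = append(localQueues, n)
				}
			}
		}
		line = line[:open]
	}

	// Everything else of the form key=value with an integer value.
	for _, tok := range strings.Fields(line) {
		key, val, ok := strings.Cut(tok, "=")
		if !ok {
			continue
		}
		if n, err := strconv.Atoi(val); err == nil {
			fields[key] = n
		}
	}
	return fields, localQueues
}

func main() {
	line := "SCHED 1000ms: gomaxprocs=8 idleprocs=6 threads=9 spinningthreads=1 idlethreads=2 runqueue=0 [1 0 0 0 0 0 0 0]"
	fields, queues := parseSchedLine(line)
	fmt.Println(fields["idleprocs"], queues) // prints: 6 [1 0 0 0 0 0 0 0]
}
```

A persistently non-zero runqueue together with idleprocs=0 suggests the program has more runnable work than Ps to run it.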

Scheduler Internals Summary

Go blog diagram 6

Common Misconceptions

Myth: More GOMAXPROCS = Faster

Not always. If your work is I/O-bound, more Ps won't help. If the work is CPU-bound, setting GOMAXPROCS above the number of cores adds context-switching overhead without adding parallelism.

Myth: Goroutines are free

They're cheap, not free. A million goroutines still consume memory (each starts with a stack of about 2 KB, so roughly 2 GB for stacks alone) and add scheduling overhead.
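You can get a rough feel for the memory cost by parking a batch of goroutines and comparing the StackInuse field of runtime.MemStats before and after. This is an approximate measurement with a made-up helper name (stackBytesPerGoroutine); the result depends on the Go version and on what each goroutine does.

```go
package main

import (
	"fmt"
	"runtime"
	"sync"
)

// stackBytesPerGoroutine parks n goroutines and returns the growth in
// stack memory in use divided by n. It is a rough estimate only.
// (Hypothetical helper, written for this example.)
func stackBytesPerGoroutine(n int) uint64 {
	if n <= 0 {
		return 0
	}
	var before, after runtime.MemStats
	runtime.GC()
	runtime.ReadMemStats(&before)

	release := make(chan struct{})
	var started, finished sync.WaitGroup
	for i := 0; i < n; i++ {
		started.Add(1)
		finished.Add(1)
		go func() {
			defer finished.Done()
			started.Done()
			<-release // stay parked until the measurement is taken
		}()
	}
	started.Wait()
	runtime.ReadMemStats(&after)

	close(release)
	finished.Wait()

	if after.StackInuse < before.StackInuse {
		return 0
	}
	return (after.StackInuse - before.StackInuse) / uint64(n)
}

func main() {
	fmt.Println("approx. stack bytes per goroutine:", stackBytesPerGoroutine(10000))
}
```

Goroutines that call deep into other functions grow their stacks, so real programs often use more per goroutine than this idle case shows.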

Myth: Order of execution is predictable

Scheduling is not deterministic. Never assume goroutine execution order.
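One way to stay independent of execution order is to have each goroutine write to its own slot and wait for all of them before reading. A minimal sketch, with a function name (squares) chosen for this example:

```go
package main

import (
	"fmt"
	"sync"
)

// squares computes i*i for i in [0, n) concurrently. Each goroutine writes
// only to its own index, so the result does not depend on the order in
// which the goroutines happen to run.
func squares(n int) []int {
	out := make([]int, n)
	var wg sync.WaitGroup
	for i := 0; i < n; i++ {
		wg.Add(1)
		go func(i int) {
			defer wg.Done()
			out[i] = i * i
		}(i)
	}
	wg.Wait() // all writes are complete before the slice is read
	return out
}

func main() {
	fmt.Println(squares(5)) // prints: [0 1 4 9 16]
}
```

If the goroutines instead appended to a shared slice or printed directly, the order of results could change from run to run.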

What You Learned

You now understand that:

- GMP model: Goroutines, Machines (threads), Processors

- Work stealing balances load: Idle Ps steal from busy ones

- Preemption prevents starvation: No goroutine can hog CPU

- System calls hand off P: Blocking doesn't block scheduling

- Network poller is efficient: Async I/O under the hood

- GOMAXPROCS controls parallelism: Usually defaults are fine

Your Next Steps

- Profile: Use go tool trace on your application

- Read Next: Learn about goroutine leaks and how to detect them

- Experiment: Try GODEBUG=schedtrace to see your scheduler in action

The Go scheduler is a masterpiece of systems programming. Understanding it helps you write faster, more efficient concurrent code. You don't need to control it, but knowing how it works makes you a better Go developer.