Go Concurrency Patterns: Battle-Tested Solutions for Real Problems

When Simple Goroutines Aren't Enough

You've learned goroutines and channels. You can spin up concurrent tasks. But real problems need more structure. How do you limit concurrent database connections? How do you process a million items without exhausting memory? How do you gracefully handle partial failures?

Concurrency patterns are proven solutions to these common problems. They're the recipes that experienced Go developers reach for daily.

The Assembly Line Vision

Think of it like this: A car factory doesn't have one worker building entire cars. It's an assembly line where specialists handle specific tasks. One team installs engines, another handles electronics, another does painting. Work flows through stages, each optimized for its task. Go concurrency patterns create similar assembly lines for data.

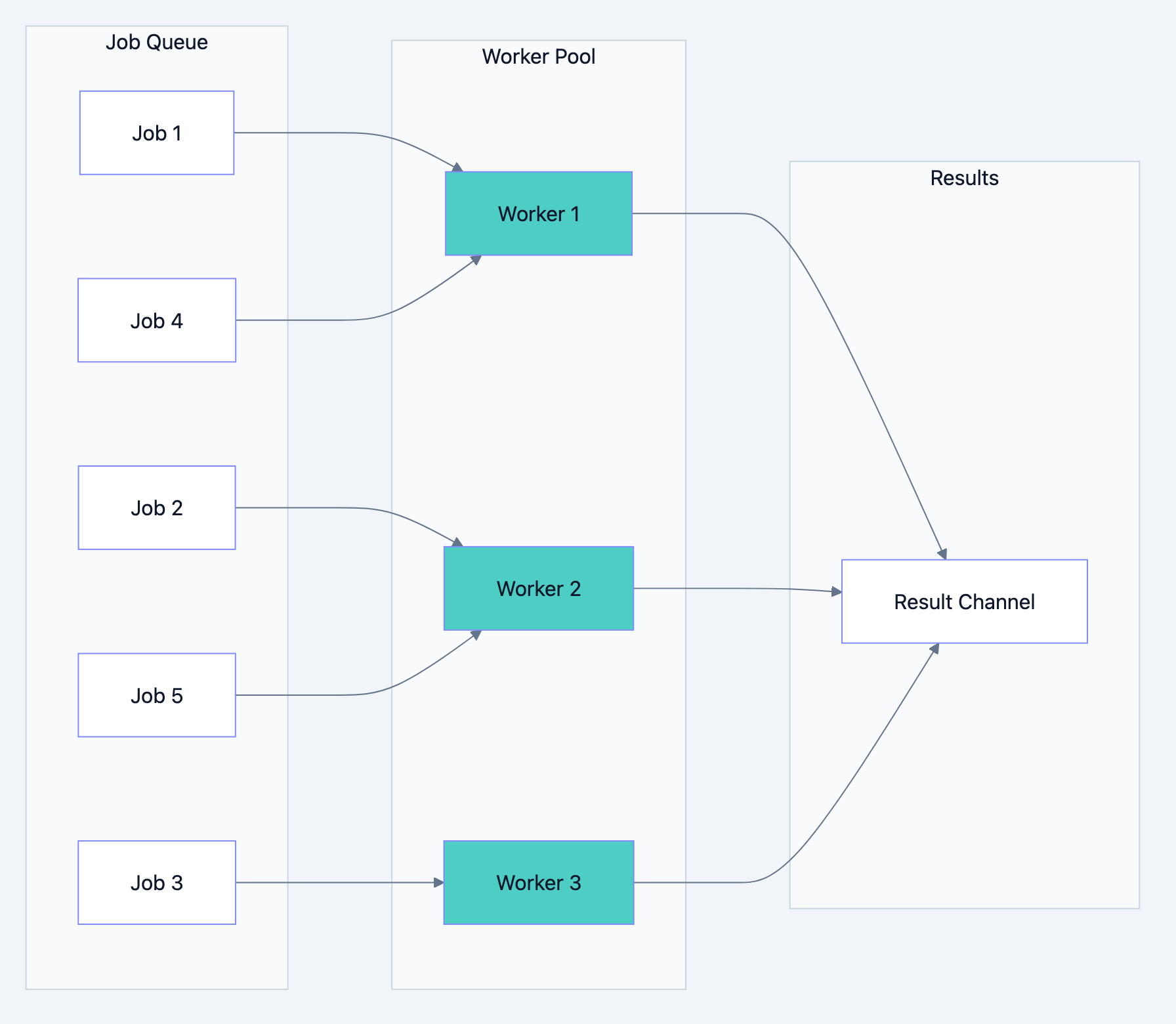

Pattern 1: Worker Pool

When you have many tasks but limited resources (database connections, API rate limits, memory), a worker pool controls concurrency.


```go
// Filename: worker_pool.go
package main

import (
	"fmt"
	"sync"
	"time"
)

// Job represents work to be done
type Job struct {
	ID   int
	Data string
}

// Result represents completed work
type Result struct {
	JobID  int
	Output string
}

// Worker processes jobs from the jobs channel
// Why: Fixed number of workers limits concurrent operations
func Worker(id int, jobs <-chan Job, results chan<- Result, wg *sync.WaitGroup) {
	defer wg.Done()
	for job := range jobs {
		// Simulate processing time
		time.Sleep(100 * time.Millisecond)
		results <- Result{
			JobID:  job.ID,
			Output: fmt.Sprintf("Worker %d processed: %s", id, job.Data),
		}
	}
}

func main() {
	const numWorkers = 3
	const numJobs = 10

	jobs := make(chan Job, numJobs)
	results := make(chan Result, numJobs)

	// Start worker pool
	var wg sync.WaitGroup
	for w := 1; w <= numWorkers; w++ {
		wg.Add(1)
		go Worker(w, jobs, results, &wg)
	}

	// Send jobs
	for j := 1; j <= numJobs; j++ {
		jobs <- Job{ID: j, Data: fmt.Sprintf("Task-%d", j)}
	}
	close(jobs)

	// Wait for workers and close results
	go func() {
		wg.Wait()
		close(results)
	}()

	// Collect results
	for result := range results {
		fmt.Println(result.Output)
	}
}
```

Expected Output (which worker takes which task, and the order of the lines, vary between runs):

```
Worker 1 processed: Task-1
Worker 2 processed: Task-2
Worker 3 processed: Task-3
Worker 1 processed: Task-4
...
```
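Workers finish in no fixed order, so a pool like this one does not return results in the order the jobs were sent. When callers need input order, one option is to hand out slice indexes instead of values and let each worker write to its own slot. The following is a minimal sketch under that assumption; `ProcessAll` and its `strings.ToUpper` "work" are hypothetical stand-ins, and `numWorkers` is assumed to be at least 1.

```go
package main

import (
	"fmt"
	"strings"
	"sync"
)

// ProcessAll is a hypothetical helper: it runs numWorkers goroutines over
// inputs and returns the outputs in the same order as the inputs.
// Assumption: numWorkers >= 1 (with zero workers the send below would block).
func ProcessAll(inputs []string, numWorkers int) []string {
	outputs := make([]string, len(inputs))
	indexes := make(chan int)

	var wg sync.WaitGroup
	for w := 0; w < numWorkers; w++ {
		wg.Add(1)
		go func() {
			defer wg.Done()
			for i := range indexes {
				// Each index is received by exactly one worker, so no two
				// goroutines ever write to the same slice element.
				outputs[i] = strings.ToUpper(inputs[i])
			}
		}()
	}

	for i := range inputs {
		indexes <- i
	}
	close(indexes)

	// After Wait returns, every write to outputs is visible to the caller.
	wg.Wait()
	return outputs
}

func main() {
	fmt.Println(ProcessAll([]string{"a", "b", "c", "d"}, 2)) // prints [A B C D]
}
```

The trade-off is that the whole result set is held in memory at once, which is fine for bounded batches but not for unbounded streams.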

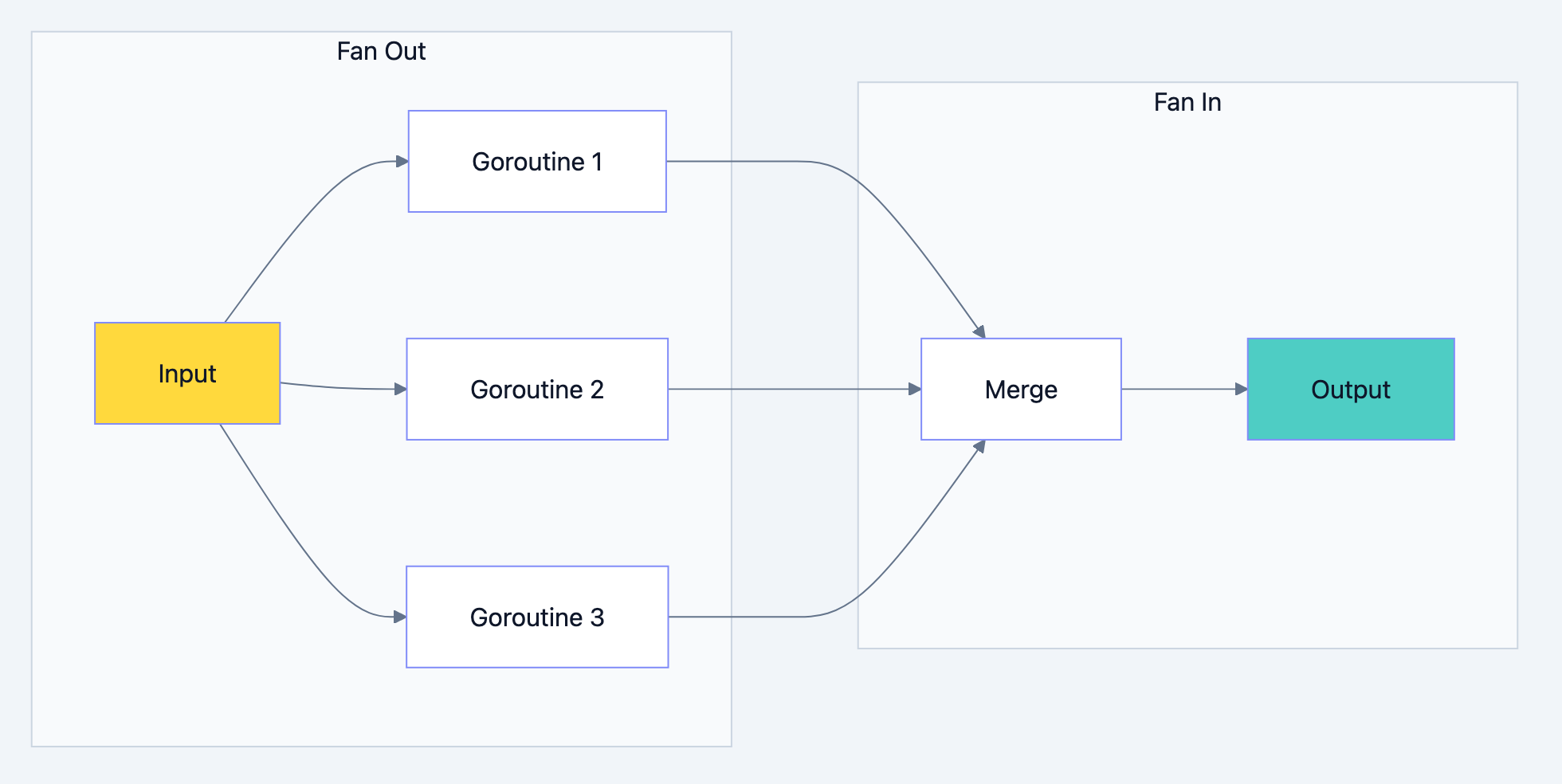

Pattern 2: Fan Out, Fan In

Split work across multiple goroutines (fan out), then combine results (fan in). Great for CPU-intensive tasks.


```go
// Filename: fan_out_fan_in.go
package main

import (
	"fmt"
	"sync"
)

// Generator creates a channel of numbers
// Why: Produces work items
func Generator(nums ...int) <-chan int {
	out := make(chan int)
	go func() {
		for _, n := range nums {
			out <- n
		}
		close(out)
	}()
	return out
}

// Square computes the square of numbers
// Why: CPU-bound work that benefits from parallelization
func Square(in <-chan int) <-chan int {
	out := make(chan int)
	go func() {
		for n := range in {
			out <- n * n
		}
		close(out)
	}()
	return out
}

// Merge combines multiple channels into one
// Why: Fan-in pattern collects results from parallel workers
func Merge(channels ...<-chan int) <-chan int {
	out := make(chan int)
	var wg sync.WaitGroup

	// Start a goroutine for each input channel
	for _, ch := range channels {
		wg.Add(1)
		go func(c <-chan int) {
			defer wg.Done()
			for n := range c {
				out <- n
			}
		}(ch)
	}

	// Close output when all inputs are done
	go func() {
		wg.Wait()
		close(out)
	}()

	return out
}

func main() {
	// Generate numbers
	numbers := Generator(1, 2, 3, 4, 5, 6, 7, 8, 9, 10)

	// Fan out: distribute work to multiple squarers
	// Why: Each squarer runs in parallel
	sq1 := Square(numbers)
	sq2 := Square(numbers)
	sq3 := Square(numbers)

	// Fan in: merge results
	for result := range Merge(sq1, sq2, sq3) {
		fmt.Println("Squared:", result)
	}
}
```
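Because the merged channel delivers values in whatever order the squarers finish, downstream code should not depend on ordering. An order-independent reduction such as a sum is a natural fit. Here is a compact sketch of the same fan-out/fan-in shape folded into one function; `SumSquares` is a hypothetical helper, and `workers` is assumed to be at least 1.

```go
package main

import (
	"fmt"
	"sync"
)

// SumSquares is a hypothetical helper that fans nums out to `workers`
// squaring goroutines and fans the results back in as a single sum.
// Assumption: workers >= 1.
func SumSquares(nums []int, workers int) int {
	in := make(chan int)
	out := make(chan int)

	// Producer: feed every number into the shared input channel.
	go func() {
		defer close(in)
		for _, n := range nums {
			in <- n
		}
	}()

	// Fan out: every worker reads from the same input channel, so each
	// number is squared by exactly one of them.
	var wg sync.WaitGroup
	for w := 0; w < workers; w++ {
		wg.Add(1)
		go func() {
			defer wg.Done()
			for n := range in {
				out <- n * n
			}
		}()
	}

	// Close the output once all workers have finished.
	go func() {
		wg.Wait()
		close(out)
	}()

	// Fan in: addition is commutative, so arrival order does not matter.
	sum := 0
	for sq := range out {
		sum += sq
	}
	return sum
}

func main() {
	fmt.Println(SumSquares([]int{1, 2, 3, 4, 5, 6, 7, 8, 9, 10}, 3)) // prints 385
}
```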

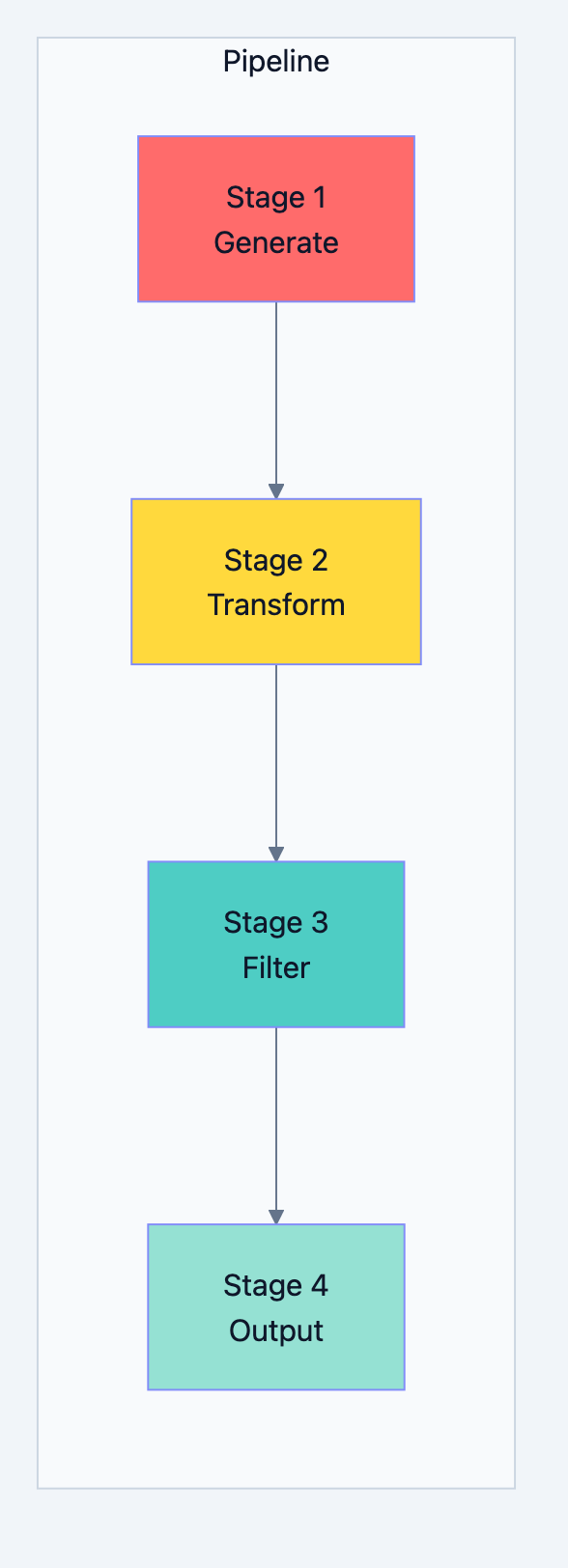

Pattern 3: Pipeline

Chain processing stages where each stage transforms data and passes it to the next. Each stage runs concurrently.


```go
// Filename: pipeline.go
package main

import "fmt"

// Stage 1: Generate numbers
func Generate(start, end int) <-chan int {
	out := make(chan int)
	go func() {
		for i := start; i <= end; i++ {
			out <- i
		}
		close(out)
	}()
	return out
}

// Stage 2: Double each number
func Double(in <-chan int) <-chan int {
	out := make(chan int)
	go func() {
		for n := range in {
			out <- n * 2
		}
		close(out)
	}()
	return out
}

// Stage 3: Keep only values whose original input was even.
// Every doubled value is already even, so the test is divisibility by 4.
func FilterEven(in <-chan int) <-chan int {
	out := make(chan int)
	go func() {
		for n := range in {
			if n%4 == 0 { // divisible by 4 after doubling
				out <- n
			}
		}
		close(out)
	}()
	return out
}

// Stage 4: Add prefix
func Format(in <-chan int) <-chan string {
	out := make(chan string)
	go func() {
		for n := range in {
			out <- fmt.Sprintf("Result: %d", n)
		}
		close(out)
	}()
	return out
}

func main() {
	// Build pipeline: Generate -> Double -> Filter -> Format
	// Why: Each stage runs concurrently, processing flows through
	numbers := Generate(1, 20)
	doubled := Double(numbers)
	filtered := FilterEven(doubled)
	formatted := Format(filtered)

	// Consume final output
	for result := range formatted {
		fmt.Println(result)
	}
}
```

Expected Output:

```
Result: 4
Result: 8
Result: 12
Result: 16
Result: 20
Result: 24
Result: 28
Result: 32
Result: 36
Result: 40
```
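Each stage above repeats the same skeleton: make a channel, start a goroutine, range over the input, close the output. With generics (Go 1.18 or later) that skeleton can be written once. The following is only a sketch of one possible shape; `Stage` and `Source` are hypothetical names, and the `keep` flag is an assumed convention for letting a stage drop values.

```go
package main

import "fmt"

// Stage is a hypothetical generic helper. It applies f to every value read
// from in and forwards the result only when f reports keep == true.
func Stage[T, U any](in <-chan T, f func(T) (U, bool)) <-chan U {
	out := make(chan U)
	go func() {
		defer close(out)
		for v := range in {
			if r, keep := f(v); keep {
				out <- r
			}
		}
	}()
	return out
}

// Source emits the integers start..end (inclusive) and then closes.
func Source(start, end int) <-chan int {
	out := make(chan int)
	go func() {
		defer close(out)
		for i := start; i <= end; i++ {
			out <- i
		}
	}()
	return out
}

func main() {
	doubled := Stage(Source(1, 6), func(n int) (int, bool) { return n * 2, true })
	filtered := Stage(doubled, func(n int) (int, bool) { return n, n%4 == 0 })
	formatted := Stage(filtered, func(n int) (string, bool) {
		return fmt.Sprintf("Result: %d", n), true
	})

	// Prints "Result: 4", "Result: 8", "Result: 12" on separate lines.
	for s := range formatted {
		fmt.Println(s)
	}
}
```

A single-goroutine stage like this preserves input order, which is why the printed sequence is deterministic here.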

Pattern 4: Semaphore

Limit the number of concurrent operations. Unlike worker pools, semaphores don't require pre-created workers.

```go
// Filename: semaphore.go
package main

import (
	"fmt"
	"sync"
	"time"
)

// Semaphore limits concurrent operations
// Why: Control resource usage without worker pool overhead
type Semaphore struct {
	ch chan struct{}
}

func NewSemaphore(max int) *Semaphore {
	return &Semaphore{
		ch: make(chan struct{}, max),
	}
}

func (s *Semaphore) Acquire() {
	s.ch <- struct{}{} // Block if channel is full
}

func (s *Semaphore) Release() {
	<-s.ch // Free one slot
}

func main() {
	sem := NewSemaphore(3) // Max 3 concurrent
	var wg sync.WaitGroup

	// Launch 10 tasks, but only 3 run at a time
	for i := 1; i <= 10; i++ {
		wg.Add(1)
		go func(id int) {
			defer wg.Done()

			sem.Acquire()
			defer sem.Release()

			fmt.Printf("Task %d starting\n", id)
			time.Sleep(500 * time.Millisecond)
			fmt.Printf("Task %d done\n", id)
		}(i)
	}

	wg.Wait()
	fmt.Println("All tasks completed")
}
```
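One way to convince yourself that the limit actually holds is to count how many tasks are inside the guarded section at once and record the peak. The sketch below does that with atomic counters; `PeakConcurrency` is a hypothetical helper written for this check, and the 10 ms sleep is an arbitrary stand-in for real work.

```go
package main

import (
	"fmt"
	"sync"
	"sync/atomic"
	"time"
)

// PeakConcurrency is a hypothetical helper: it runs `tasks` goroutines behind
// a buffered-channel semaphore of size `limit` and reports the highest number
// that were inside the guarded section at the same time.
func PeakConcurrency(limit, tasks int) int64 {
	sem := make(chan struct{}, limit)
	var running, peak int64
	var wg sync.WaitGroup

	for i := 0; i < tasks; i++ {
		wg.Add(1)
		go func() {
			defer wg.Done()

			sem <- struct{}{}        // acquire: blocks while `limit` tasks hold a slot
			defer func() { <-sem }() // release on the way out

			now := atomic.AddInt64(&running, 1)
			// Record a new peak with a compare-and-swap loop.
			for {
				old := atomic.LoadInt64(&peak)
				if now <= old || atomic.CompareAndSwapInt64(&peak, old, now) {
					break
				}
			}

			time.Sleep(10 * time.Millisecond) // simulated work
			atomic.AddInt64(&running, -1)     // leave before the slot is released
		}()
	}

	wg.Wait()
	return atomic.LoadInt64(&peak)
}

func main() {
	peak := PeakConcurrency(3, 10)
	fmt.Println("peak within limit:", peak >= 1 && peak <= 3) // prints "peak within limit: true"
}
```

The counter is incremented only after acquiring and decremented before releasing, so the recorded peak can never exceed the semaphore size.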

Pattern 5: Or Channel (First Winner)

Wait for the first of multiple operations to complete. Useful for timeouts, cancellation, or racing requests.

```go
// Filename: or_channel.go
package main

import (
	"fmt"
	"time"
)

// Or returns a channel that closes when any input channel closes
// Why: Wait for any one of multiple events
func Or(channels ...<-chan struct{}) <-chan struct{} {
	switch len(channels) {
	case 0:
		return nil
	case 1:
		return channels[0]
	}

	orDone := make(chan struct{})
	go func() {
		defer close(orDone)

		switch len(channels) {
		case 2:
			select {
			case <-channels[0]:
			case <-channels[1]:
			}
		default:
			select {
			case <-channels[0]:
			case <-channels[1]:
			case <-channels[2]:
			case <-Or(append(channels[3:], orDone)...):
			}
		}
	}()
	return orDone
}

// After returns a channel that closes after duration
func After(d time.Duration) <-chan struct{} {
	done := make(chan struct{})
	go func() {
		time.Sleep(d)
		close(done)
	}()
	return done
}

func main() {
	start := time.Now()

	// First one to complete wins
	<-Or(
		After(2*time.Second),
		After(3*time.Second),
		After(500*time.Millisecond), // This wins
		After(1*time.Second),
	)

	fmt.Printf("Done after: %v\n", time.Since(start))
}
```

Expected Output (the exact duration varies slightly between runs):

```
Done after: 500.123ms
```

Pattern 6: Error Handling in Pipelines

Handle errors gracefully without stopping the entire pipeline.

```go
// Filename: error_pipeline.go
package main

import (
	"errors"
	"fmt"
)

// Result wraps value and error
type Result struct {
	Value int
	Err   error
}

// Process simulates work that might fail
func Process(in <-chan int) <-chan Result {
	out := make(chan Result)
	go func() {
		defer close(out)
		for n := range in {
			if n == 5 {
				out <- Result{Err: errors.New("unlucky number 5")}
				continue
			}
			out <- Result{Value: n * 2}
		}
	}()
	return out
}

func main() {
	// Generate numbers
	input := make(chan int)
	go func() {
		for i := 1; i <= 10; i++ {
			input <- i
		}
		close(input)
	}()

	// Process with error handling
	for result := range Process(input) {
		if result.Err != nil {
			fmt.Printf("Error: %v\n", result.Err)
			continue
		}
		fmt.Printf("Success: %d\n", result.Value)
	}
}
```

Expected Output:

```
Success: 2
Success: 4
Success: 6
Success: 8
Error: unlucky number 5
Success: 12
Success: 14
Success: 16
Success: 18
Success: 20
```

Pattern 7: Bounded Parallelism with errgroup

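Use golang.org/x/sync/errgroup for cleaner error handling. A group waits for all of its goroutines, returns the first non-nil error from Wait, and (when created with WithContext) cancels the shared context on that first error. SetLimit caps how many goroutines run at once.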

```go
// Filename: errgroup_example.go
package main

import (
	"context"
	"fmt"

	"golang.org/x/sync/errgroup"
)

func main() {
	urls := []string{
		"https://example.com/1",
		"https://example.com/2",
		"https://example.com/3",
		"https://example.com/4",
		"https://example.com/5",
	}

	g, ctx := errgroup.WithContext(context.Background())

	// Limit to 2 concurrent fetches
	g.SetLimit(2)

	for _, url := range urls {
		url := url // Capture for closure
		g.Go(func() error {
			select {
			case <-ctx.Done():
				return ctx.Err()
			default:
				fmt.Printf("Fetching: %s\n", url)
				// Simulated fetch
				return nil
			}
		})
	}

	if err := g.Wait(); err != nil {
		fmt.Printf("Error: %v\n", err)
		return
	}
	fmt.Println("All fetches complete")
}
```

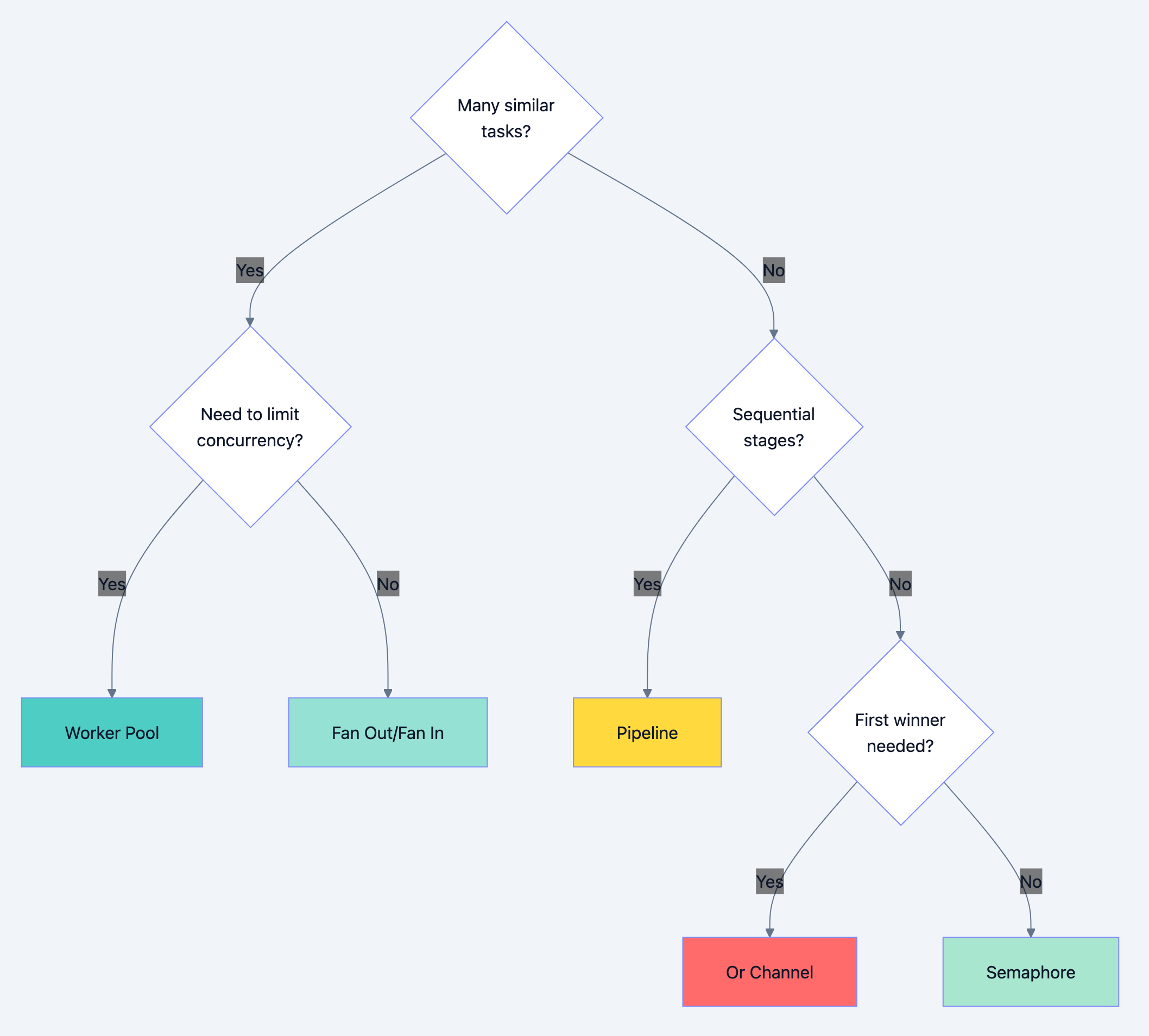

Pattern Comparison


| Pattern | Use Case | Pros | Cons |
|---|---|---|---|
| Worker Pool | Limited resources | Resource control | Fixed workers |
| Fan Out/In | CPU-bound parallel work | Max parallelism | Memory for results |
| Pipeline | Multi-stage processing | Clean stages | Complexity |
| Semaphore | Dynamic limiting | Flexible | Less structured |
| Or Channel | Racing operations, timeouts | First wins | Complex for many |

Common Mistakes

Mistake 1: Forgetting to close channels

```go
// WRONG: Range never ends
results := make(chan int)
go func() {
	results <- 1
	results <- 2
	// Missing close(results)
}()

for r := range results { // Blocks forever!
	fmt.Println(r)
}

// RIGHT: Always close when done sending
go func() {
	results <- 1
	results <- 2
	close(results)
}()
```

Mistake 2: Goroutine leaks in pipelines

```go
// WRONG: Goroutine leaks if consumer stops early
func Generate() <-chan int {
	out := make(chan int)
	go func() {
		for i := 0; ; i++ {
			out <- i // Blocks forever if no consumer
		}
	}()
	return out
}

// RIGHT: Accept done channel for cancellation
func Generate(done <-chan struct{}) <-chan int {
	out := make(chan int)
	go func() {
		defer close(out)
		for i := 0; ; i++ {
			select {
			case <-done:
				return
			case out <- i:
			}
		}
	}()
	return out
}
```
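The same fix is commonly written with `context.Context` in place of a bare done channel, since a context also carries deadlines and propagates through call chains. A minimal sketch, assuming a consumer that only wants the first few values; `FirstN` is a hypothetical helper added for the demonstration.

```go
package main

import (
	"context"
	"fmt"
)

// Generate emits 0, 1, 2, ... until ctx is canceled, then closes its output.
func Generate(ctx context.Context) <-chan int {
	out := make(chan int)
	go func() {
		defer close(out)
		for i := 0; ; i++ {
			select {
			case <-ctx.Done():
				return // consumer is gone: exit instead of blocking forever
			case out <- i:
			}
		}
	}()
	return out
}

// FirstN is a hypothetical helper: it reads n values from Generate and then
// cancels the context so the generator goroutine can exit.
func FirstN(n int) []int {
	if n <= 0 {
		return nil
	}
	ctx, cancel := context.WithCancel(context.Background())
	defer cancel() // runs on return and unblocks the generator's select

	got := make([]int, 0, n)
	for v := range Generate(ctx) {
		got = append(got, v)
		if len(got) == n {
			break
		}
	}
	return got
}

func main() {
	fmt.Println(FirstN(3)) // prints [0 1 2]
}
```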

What You Learned

You now understand that:

- Worker pools limit concurrency: Fixed workers, controlled resources

- Fan out/in maximizes parallelism: Split work, merge results

- Pipelines chain stages: Each stage runs concurrently

- Semaphores are flexible limiters: No pre-created workers needed

- Or channel races operations: First completion wins

- Error handling needs structure: Wrap results with errors

Your Next Steps

- Build: Create a web scraper using the worker pool pattern

- Read Next: Learn about context for cancellation propagation

- Experiment: Combine patterns for complex workflows

These patterns are your toolkit for concurrent Go. Mix and match them to solve real problems. Start with the simplest pattern that fits your needs, and evolve as requirements grow.