Go Garbage Collector: How Go Cleans Up After Your Code

The Memory That Never Returned

Your Go service starts with 100MB of memory. A week later, it's using 4GB. Nothing changed in the code. No memory leak according to pprof. Yet memory keeps growing. Users complain about slowness. You restart the service daily hoping it helps.

This is what happens when you don't understand Go's garbage collector. It's not broken. It's doing exactly what it's designed to do. You're just not speaking its language.

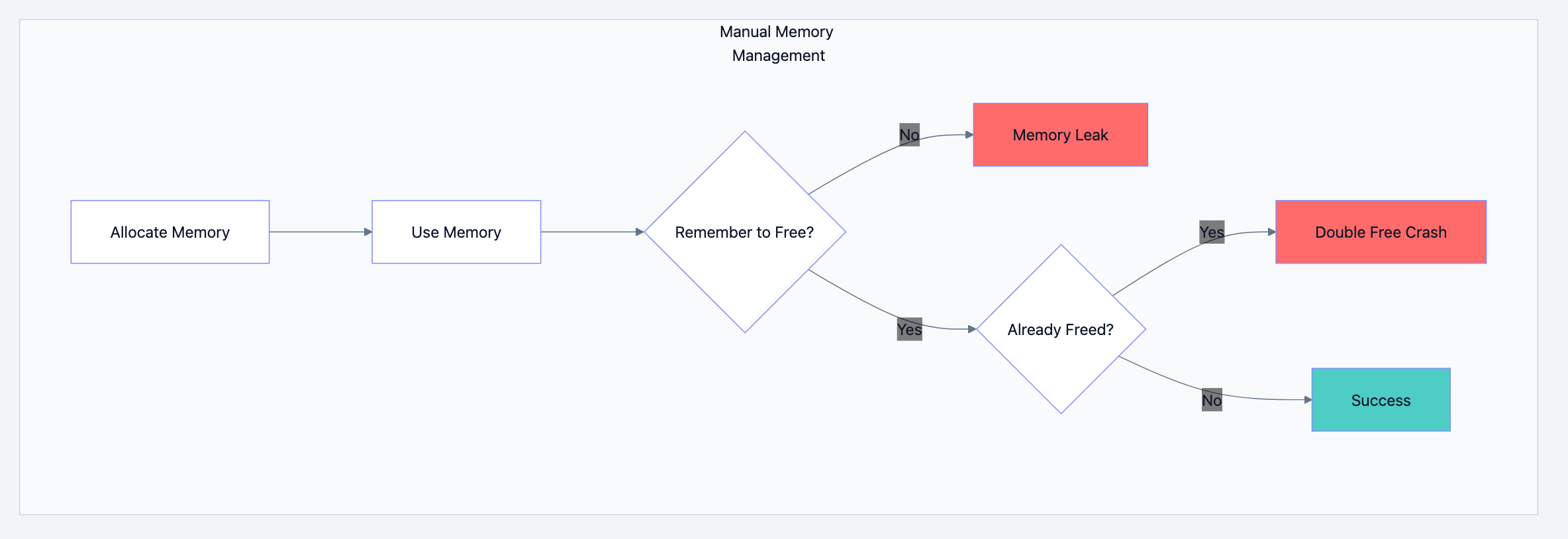

Why Manual Memory Management Is Hard

Languages like C require you to free every piece of memory you allocate. Forget once? Memory leak. Free twice? Crash. Use after free? Security vulnerability.

Go's garbage collector handles this automatically. You allocate, you use, you forget. The GC figures out when memory is no longer needed and reclaims it.

The Cleaning Crew Analogy

Think of it like this: The garbage collector is like a cleaning crew in an office building. They don't enter occupied offices. Instead, they mark empty offices, then sweep them clean. Work continues in occupied offices uninterrupted. The crew works in the background, identifying and cleaning spaces that are no longer in use.

Go's GC is concurrent. Your program keeps running while most of the collection work happens. Each cycle still includes two brief "stop the world" pauses, but nothing that freezes your entire application for seconds.

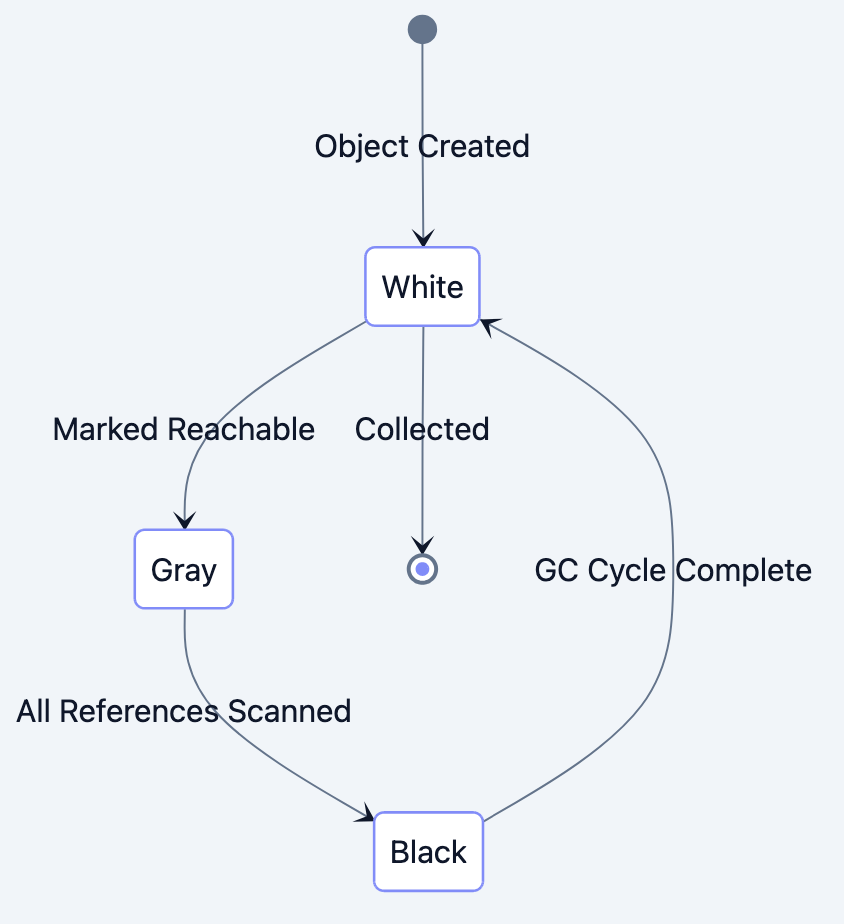

Understanding Go's Tricolor Algorithm

Go uses a tricolor mark and sweep algorithm. Every object in memory gets one of three colors:

- White: Unknown status, potentially garbage

- Gray: Needs to be scanned (has pointers that haven't been checked)

- Black: Scanned and alive (all pointers checked)

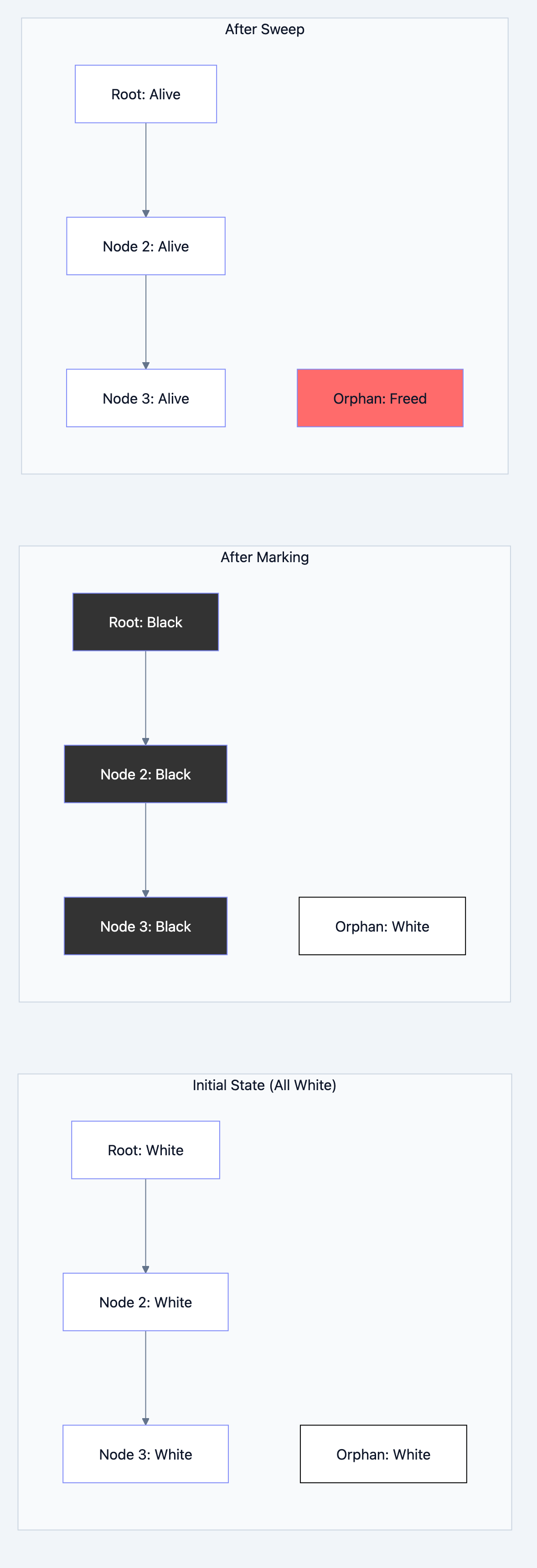

The Process Step by Step

```go
// Filename: gc_concept.go
package main

// Step 1: Initially all objects are white
type Node struct {
	Value int
	Next  *Node
}

func main() {
	// Root set: Stack variables and globals
	// These are our starting points
	root := &Node{Value: 1}          // Created as white
	root.Next = &Node{Value: 2}      // Created as white
	root.Next.Next = &Node{Value: 3} // Created as white

	orphan := &Node{Value: 999} // Created as white
	_ = orphan                  // Never used again and not reachable from root

	// When GC runs (conceptually):
	// 1. root turns gray (reachable from stack)
	// 2. root turns black, root.Next turns gray
	// 3. root.Next turns black, root.Next.Next turns gray
	// 4. root.Next.Next turns black
	// 5. orphan stays white (unreachable)
	// 6. Sweep: white objects collected

	// After GC, orphan's memory is reclaimed
}
```
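The walkthrough above can also be run as a toy simulation. The sketch below is not the runtime's implementation: the `object` type and the `mark` and `sweep` functions are hypothetical names invented for illustration, and the "heap" is just a slice. It only shows how the white, gray, and black sets evolve.

```go
package main

import "fmt"

type color int

const (
	white color = iota // not visited yet; garbage if still white after marking
	gray               // reachable, but its pointers have not been scanned
	black              // reachable and fully scanned
)

// object is a toy heap object: a name plus outgoing pointers.
type object struct {
	name string
	refs []*object
	col  color
}

// mark colors everything reachable from roots black.
func mark(roots []*object) {
	var grayList []*object
	for _, r := range roots {
		if r.col == white {
			r.col = gray
			grayList = append(grayList, r)
		}
	}
	for len(grayList) > 0 {
		// Take one gray object and scan its pointers.
		obj := grayList[len(grayList)-1]
		grayList = grayList[:len(grayList)-1]
		for _, ref := range obj.refs {
			if ref.col == white {
				ref.col = gray
				grayList = append(grayList, ref)
			}
		}
		obj.col = black
	}
}

// sweep returns the surviving objects and resets them to white
// for the next cycle. Objects that are still white are dropped.
func sweep(heap []*object) (live []*object) {
	for _, o := range heap {
		if o.col == black {
			o.col = white
			live = append(live, o)
		}
	}
	return live
}

func main() {
	n3 := &object{name: "node3"}
	n2 := &object{name: "node2", refs: []*object{n3}}
	n1 := &object{name: "node1", refs: []*object{n2}}
	orphan := &object{name: "orphan"}
	heap := []*object{n1, n2, n3, orphan}

	mark([]*object{n1}) // n1 plays the role of the root set
	for _, o := range sweep(heap) {
		fmt.Println("survives:", o.name)
	}
}
```

Because an object is only queued while it is white, cycles in the object graph do not cause infinite loops: each object is scanned at most once.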

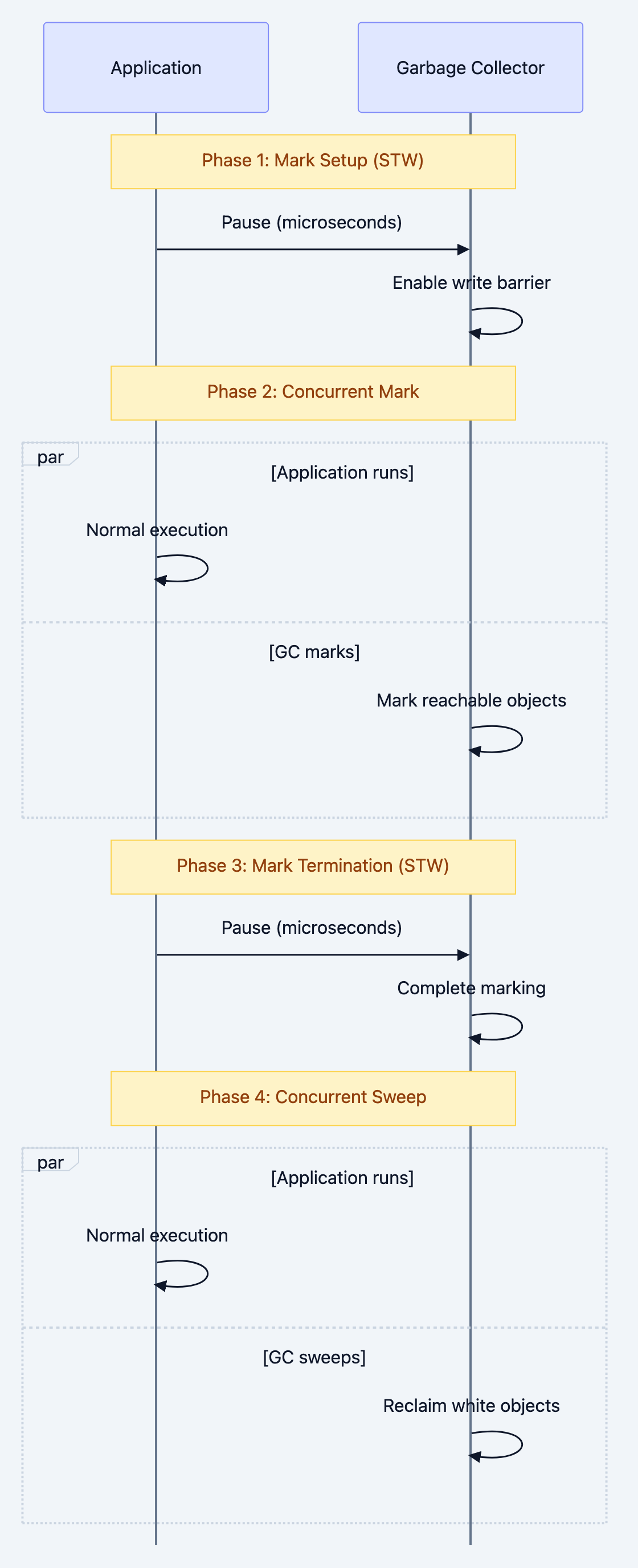

GC Phases Explained

Go's GC runs in phases:

Phase 1: Mark Setup (Stop the World)

Brief pause to prepare for marking. Usually microseconds.

Phase 2: Concurrent Marking

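GC runs alongside your program, marking reachable objects. The runtime dedicates roughly 25% of the available CPU (GOMAXPROCS) to background marking by default, and goroutines that allocate heavily during this phase may be asked to help.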

Phase 3: Mark Termination (Stop the World)

Brief pause to finish marking. Handles objects modified during concurrent marking.

Phase 4: Concurrent Sweep

Reclaims white objects. Runs entirely concurrently.

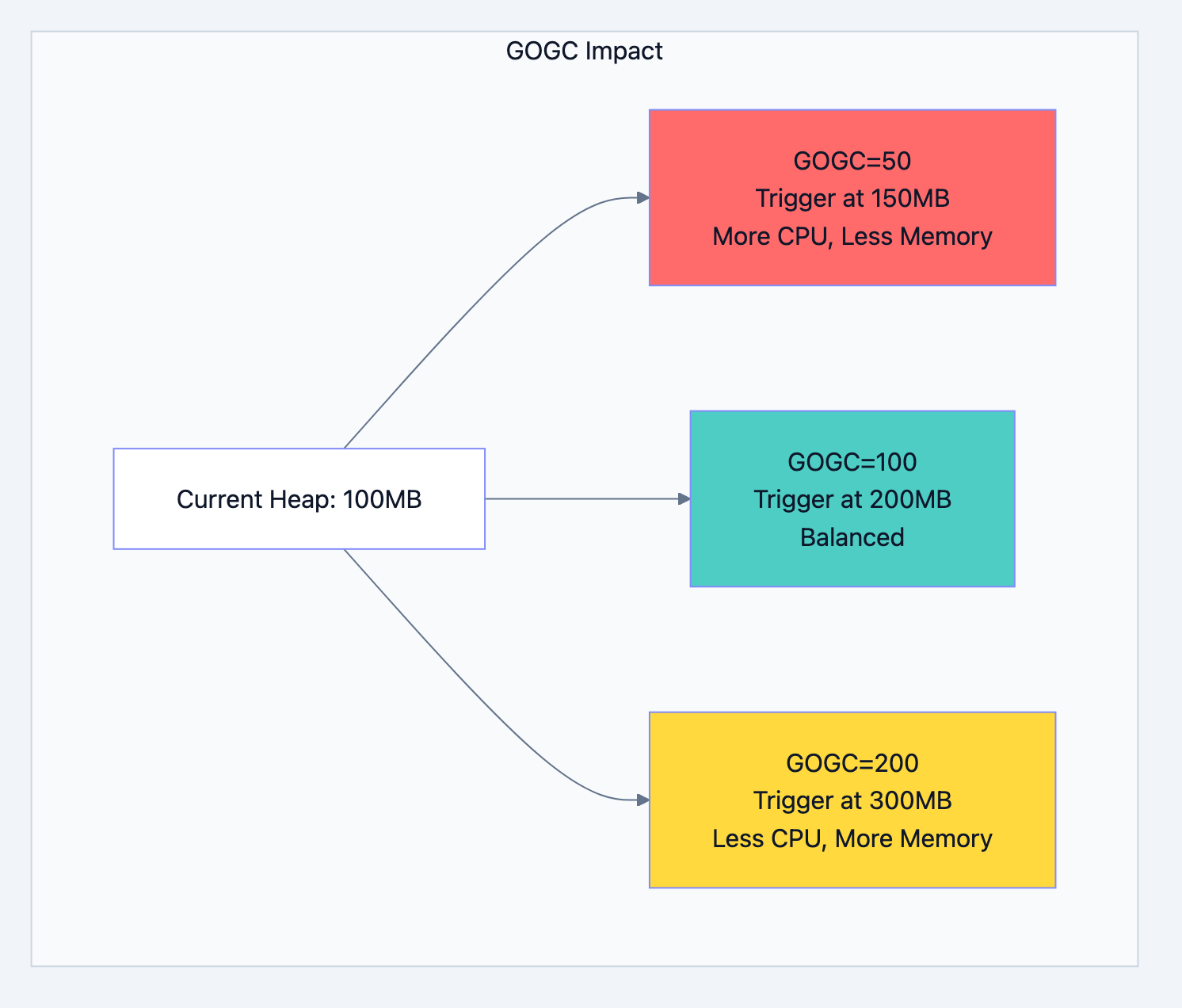

Understanding GOGC

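GOGC controls when garbage collection triggers. The default is 100, meaning the next GC starts once the heap has grown by 100% over the live heap left after the previous collection. In other words, the heap is allowed to roughly double between cycles.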

```go
// Live heap at 100MB, GOGC=100
// GC triggers when heap reaches 200MB

// Live heap at 100MB, GOGC=50
// GC triggers when heap reaches 150MB (more frequent)

// Live heap at 100MB, GOGC=200
// GC triggers when heap reaches 300MB (less frequent)
```
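The arithmetic behind those three cases is a one-line formula: target = live heap × (1 + GOGC/100). Here is a minimal sketch, assuming a simplified model; `heapTarget` is a hypothetical helper, and the real pacer also accounts for things such as goroutine stacks, globals, and any memory limit.

```go
package main

import "fmt"

// heapTarget returns the approximate heap size (in MB) at which the next
// collection starts, given the live heap after the previous cycle and the
// GOGC percentage. This is a simplified model, not the runtime's pacer.
func heapTarget(liveMB, gogc int) int {
	return liveMB + liveMB*gogc/100
}

func main() {
	for _, gogc := range []int{50, 100, 200} {
		fmt.Printf("GOGC=%d: live 100 MB -> next GC near %d MB\n",
			gogc, heapTarget(100, gogc))
	}
}
```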

Setting GOGC

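```go
// Filename: gogc_example.go
package main

import (
	"fmt"
	"runtime"
	"runtime/debug"
)

func main() {
	// Method 1: Environment variable, set before the process starts:
	//   GOGC=50 ./myapp
	// The runtime reads GOGC once at startup, so calling os.Setenv
	// from inside the running program has no effect on the GC.

	// Method 2: Runtime. SetGCPercent returns the previous setting.
	previous := debug.SetGCPercent(50)
	fmt.Printf("Previous GOGC: %d%%, current GOGC: 50%%\n", previous)

	// Careful: a negative value such as debug.SetGCPercent(-1) does not
	// just read the setting. It disables the GC (unless a memory limit
	// is reached).

	// Get memory stats
	var stats runtime.MemStats
	runtime.ReadMemStats(&stats)
	fmt.Printf("Heap Alloc: %d MB\n", stats.HeapAlloc/1024/1024)
	fmt.Printf("Num GC: %d\n", stats.NumGC)
}
```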

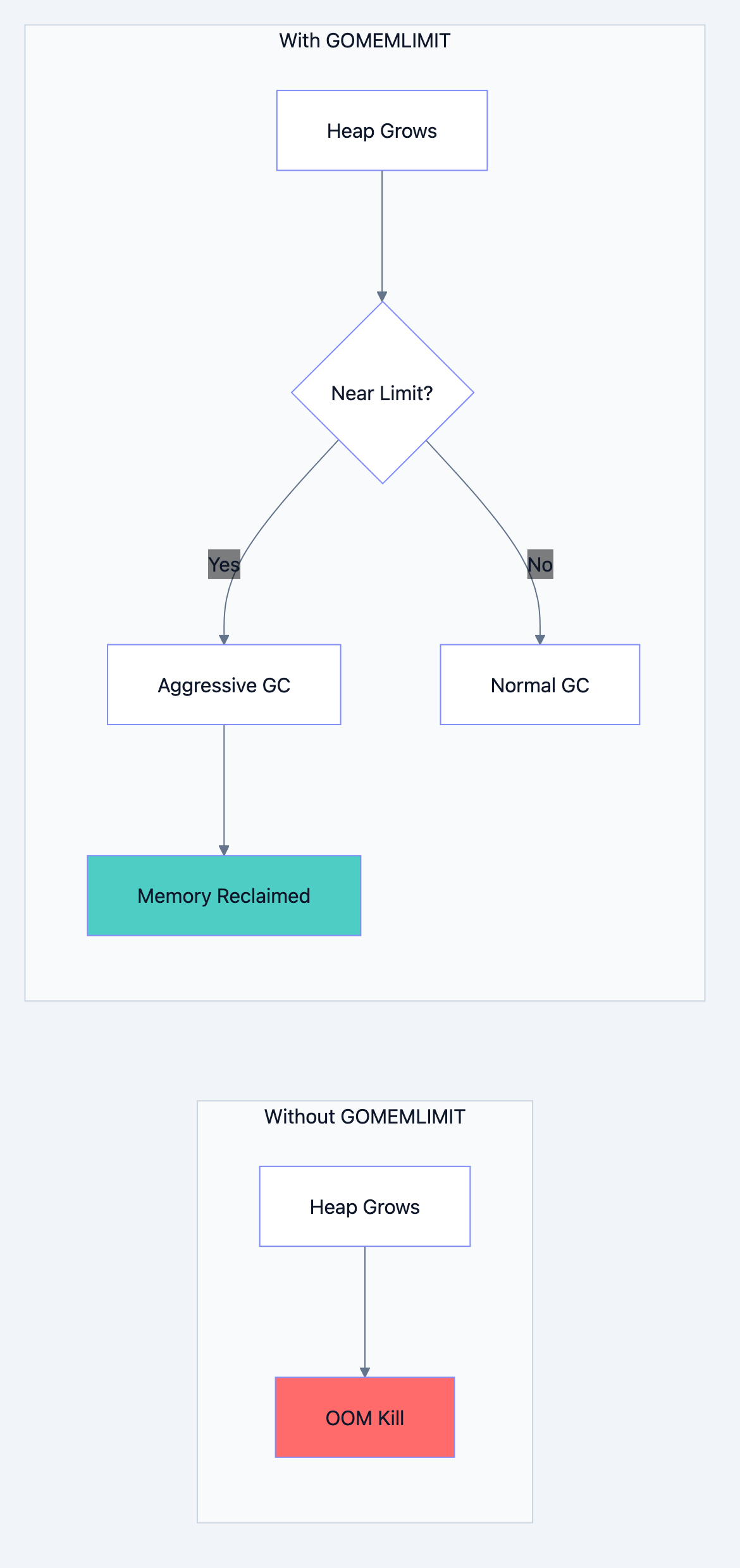

Memory Limit (Go 1.19+)

Go 1.19 introduced GOMEMLIMIT, a soft memory limit that helps prevent OOM kills.

```go
// Filename: memlimit_example.go
package main

import (
	"runtime/debug"
)

func main() {
	// Set soft memory limit to 1GB
	// Why: Prevents OOM by running GC more aggressively when approaching limit
	debug.SetMemoryLimit(1 << 30) // 1 GiB in bytes

	// Your application code here
}
```
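In a container you usually want the soft limit to sit somewhat below the hard limit, so the GC has room to react before the kernel steps in. The sketch below assumes a 1 GiB container and 10% headroom; both numbers, and the helpers `applyMemoryLimit` and `currentMemoryLimit`, are illustrative assumptions rather than recommendations. It relies on the documented behavior that a negative argument to `debug.SetMemoryLimit` returns the current limit without changing it.

```go
package main

import (
	"fmt"
	"runtime/debug"
)

// applyMemoryLimit sets the soft memory limit to the container budget
// minus a headroom percentage and returns the value it applied.
// Both parameters are hypothetical inputs chosen for this example.
func applyMemoryLimit(containerBytes, headroomPercent int64) int64 {
	limit := containerBytes * (100 - headroomPercent) / 100
	debug.SetMemoryLimit(limit)
	return limit
}

// currentMemoryLimit reads the limit without modifying it:
// a negative input leaves the setting untouched.
func currentMemoryLimit() int64 {
	return debug.SetMemoryLimit(-1)
}

func main() {
	const containerBytes = 1 << 30 // assumed 1 GiB container limit
	applied := applyMemoryLimit(containerBytes, 10)
	fmt.Printf("applied %d bytes, runtime reports %d bytes\n",
		applied, currentMemoryLimit())
}
```

The same limit can be set without code changes through the GOMEMLIMIT environment variable, which is often easier to manage per deployment.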

Writing GC Friendly Code

Reduce Allocations

Every allocation is eventual work for the GC. Fewer allocations mean less GC pressure.

```go
// Filename: allocation_optimization.go
package main

import (
	"fmt"
	"strings"
)

// WRONG: No capacity planning, so append may allocate a new backing
// array and copy the old elements whenever capacity runs out
func appendBad(items []int, val int) []int {
	return append(items, val)
}

// BETTER: Pre-allocate with expected capacity
func appendGood() []int {
	// Pre-allocate for 1000 items
	// Why: Avoids repeated growing and copying
	items := make([]int, 0, 1000)
	for i := 0; i < 1000; i++ {
		items = append(items, i)
	}
	return items
}

// WRONG: String concatenation creates garbage
func buildStringBad(parts []string) string {
	result := ""
	for _, p := range parts {
		result += p // Creates new string each time
	}
	return result
}

// BETTER: Use strings.Builder
func buildStringGood(parts []string) string {
	var builder strings.Builder

	// Pre-grow, since the final size is known here
	size := 0
	for _, p := range parts {
		size += len(p)
	}
	builder.Grow(size)

	for _, p := range parts {
		builder.WriteString(p) // Appends in place; no reallocation after Grow
	}
	return builder.String()
}

func main() {
	items := appendGood()
	fmt.Println("Items:", len(items))
}
```
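To see the difference rather than take it on faith, you can count allocations directly. This sketch uses `testing.AllocsPerRun` from the standard library, which also works outside of test files. The names `concat`, `build`, `allocsPerCall`, and `sink` are made up for this example, and the exact counts depend on your Go version and inputs, so no expected output is shown.

```go
package main

import (
	"fmt"
	"strings"
	"testing"
)

// sink keeps results reachable so the compiler cannot discard the work.
var sink string

// concat joins parts with +=, allocating a new string on most iterations.
func concat(parts []string) string {
	s := ""
	for _, p := range parts {
		s += p
	}
	return s
}

// build joins parts with a pre-grown strings.Builder.
func build(parts []string) string {
	size := 0
	for _, p := range parts {
		size += len(p)
	}
	var b strings.Builder
	b.Grow(size)
	for _, p := range parts {
		b.WriteString(p)
	}
	return b.String()
}

// allocsPerCall reports the average number of heap allocations per call.
func allocsPerCall(f func()) float64 {
	return testing.AllocsPerRun(100, f)
}

func main() {
	parts := make([]string, 200)
	for i := range parts {
		parts[i] = "chunk"
	}
	fmt.Println("concat allocs/call: ", allocsPerCall(func() { sink = concat(parts) }))
	fmt.Println("builder allocs/call:", allocsPerCall(func() { sink = build(parts) }))
}
```

For real code, a benchmark run with `go test -bench . -benchmem` gives the same information along with timings.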

Object Pooling

Reuse objects instead of allocating new ones.

```go
// Filename: object_pool.go
package main

import (
	"bytes"
	"sync"
)

// Buffer pool prevents repeated allocations
// Why: Reusing buffers is much faster than allocating new ones
var bufferPool = sync.Pool{
	New: func() interface{} {
		return new(bytes.Buffer)
	},
}

func processData(data []byte) string {
	// Get buffer from pool
	buf := bufferPool.Get().(*bytes.Buffer)
	buf.Reset() // Clear previous content

	// Use buffer
	buf.Write(data)
	result := buf.String()

	// Return to pool
	bufferPool.Put(buf)

	return result
}
```

Avoid Pointer Happy Structs

Pointers create work for the GC. It must trace each pointer.

```go
import "time"

// GC HEAVY: Many pointers to trace
type BadStruct struct {
	Name    *string
	Age     *int
	Active  *bool
	Created *time.Time
}

// GC FRIENDLY: Values instead of pointers
type GoodStruct struct {
	Name    string
	Age     int
	Active  bool
	Created time.Time
}
```
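Pointer fields also tend to mean more individual heap objects, since each pointed-to value usually needs its own allocation. The following sketch compares the two layouts by reading the cumulative `Mallocs` counter from `runtime.MemStats` before and after building a slice of records. The record types and helper names are hypothetical, trimmed-down versions of the structs above, and the exact counts vary by Go version.

```go
package main

import (
	"fmt"
	"runtime"
)

type pointerRecord struct {
	Name *string
	Age  *int
}

type valueRecord struct {
	Name string
	Age  int
}

// Package-level sinks force the slices to live on the heap.
var (
	pointerSink []pointerRecord
	valueSink   []valueRecord
)

// newPointerRecords allocates each field value separately.
func newPointerRecords(n int) []pointerRecord {
	recs := make([]pointerRecord, n)
	for i := range recs {
		name, age := "user", i
		recs[i] = pointerRecord{Name: &name, Age: &age}
	}
	return recs
}

// newValueRecords stores the field values inline in the slice.
func newValueRecords(n int) []valueRecord {
	recs := make([]valueRecord, n)
	for i := range recs {
		recs[i] = valueRecord{Name: "user", Age: i}
	}
	return recs
}

// mallocsDuring returns how many heap allocations happened while f ran.
func mallocsDuring(f func()) uint64 {
	var before, after runtime.MemStats
	runtime.ReadMemStats(&before)
	f()
	runtime.ReadMemStats(&after)
	return after.Mallocs - before.Mallocs
}

func main() {
	const n = 10000
	p := mallocsDuring(func() { pointerSink = newPointerRecords(n) })
	v := mallocsDuring(func() { valueSink = newValueRecords(n) })
	fmt.Printf("pointer fields: %d heap allocations\n", p)
	fmt.Printf("value fields:   %d heap allocations\n", v)
}
```

Pointers are still the right choice when a field is genuinely optional or shared. The point is to avoid them as a default.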

Monitoring GC Performance

Runtime Stats

```go
// Filename: gc_monitoring.go
package main

import (
	"fmt"
	"runtime"
	"time"
)

func monitorGC() {
	var stats runtime.MemStats

	for {
		runtime.ReadMemStats(&stats)

		fmt.Printf("=== GC Stats ===\n")
		fmt.Printf("Heap Alloc: %d MB\n", stats.HeapAlloc/1024/1024)
		fmt.Printf("Heap Sys: %d MB\n", stats.HeapSys/1024/1024)
		fmt.Printf("Heap Objects: %d\n", stats.HeapObjects)
		fmt.Printf("GC Runs: %d\n", stats.NumGC)
		fmt.Printf("Last GC Pause: %v\n", time.Duration(stats.PauseNs[(stats.NumGC+255)%256]))
		fmt.Printf("Total GC Time: %v\n", time.Duration(stats.PauseTotalNs))
		fmt.Println()

		time.Sleep(5 * time.Second)
	}
}

func main() {
	go monitorGC()

	// Your application code
	select {}
}
```

GODEBUG Tracing

```bash
# Enable GC tracing
GODEBUG=gctrace=1 ./myapp

# Output format:
# gc 1 @0.012s 2%: 0.018+1.2+0.003 ms clock, 0.14+0.52/1.8/0+0.024 ms cpu, 4->4->1 MB, 5 MB goal, 8 P
#
# gc 1                            GC cycle number
# @0.012s                         time since start
# 2%                              CPU % used by GC
# 0.018+1.2+0.003 ms clock        wall clock time
# 0.14+0.52/1.8/0+0.024 ms cpu    CPU time breakdown
# 4->4->1 MB                      heap: before -> after -> live
# 5 MB goal                       goal heap size
# 8 P                             processors
```

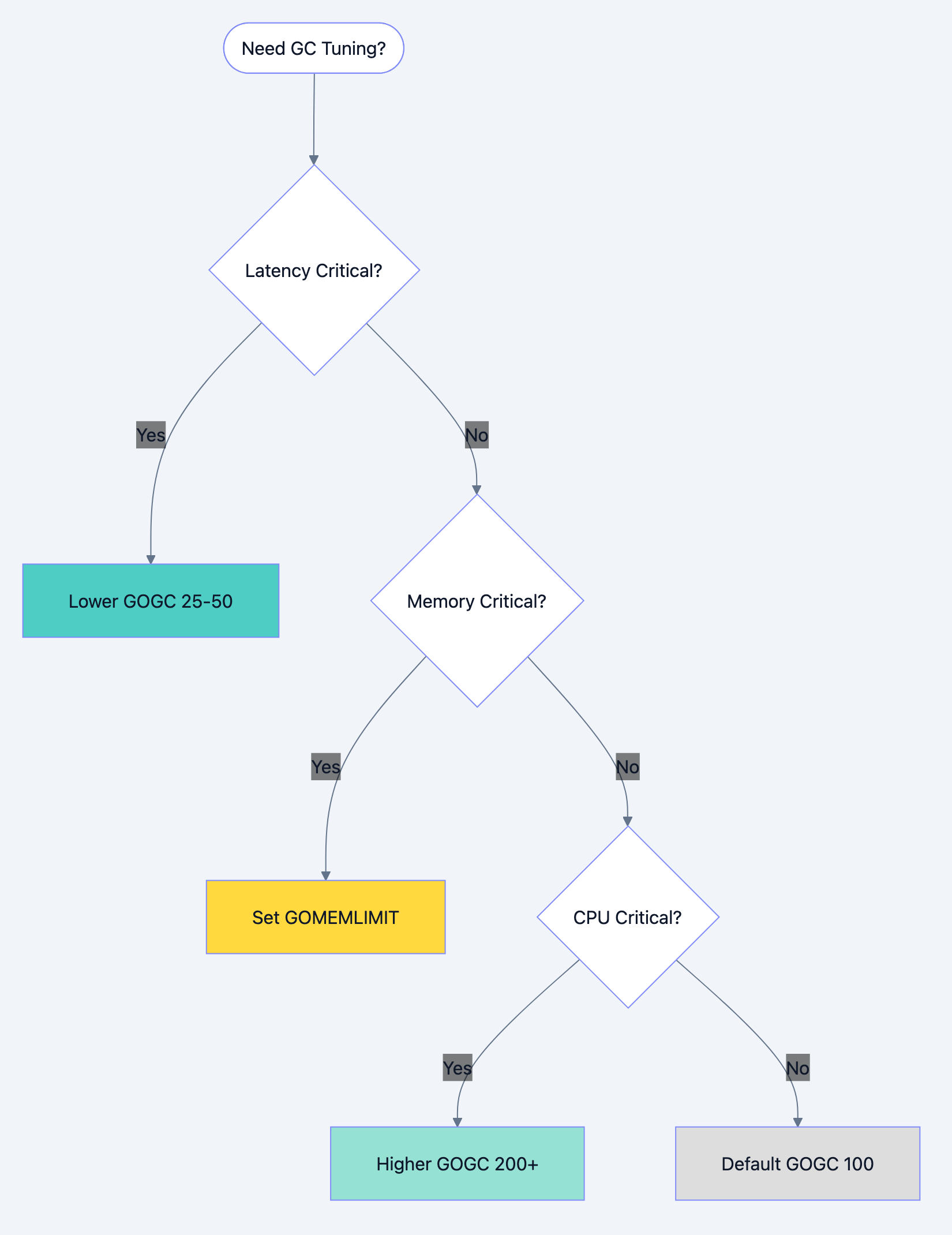

GC Tuning Strategies

| Scenario | GOGC | GOMEMLIMIT | Reason |
|---|---|---|---|
| Latency sensitive | 25-50 | Set based on container | Frequent, smaller pauses |
| Throughput focused | 200-400 | High | Less GC overhead |
| Memory constrained | 100 | Set to limit | Balanced within constraints |
| Batch processing | 400+ | High | Let heap grow, GC at end |

Common GC Misconceptions

Myth 1: Calling runtime.GC() improves performance

```go
// WRONG: Forcing GC is almost never helpful
func processRequestForced() {
	// do work
	runtime.GC() // Wastes CPU!
}

// RIGHT: Let Go decide when to GC
func processRequest() {
	// do work
	// Go handles GC automatically
}
```

Myth 2: More goroutines cause GC problems

Goroutines themselves are cheap. It's the allocations within goroutines that matter.

Myth 3: GC pauses are seconds long

Go 1.5 introduced the concurrent collector, and since Go 1.8 stop-the-world pauses are typically well under a millisecond. If you're seeing long pauses, something else is wrong.
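You can check this in your own process. Here is a minimal sketch using `debug.ReadGCStats` from the standard library, which exposes the recent pause history. The `churn` and `longestRecentPause` helpers are hypothetical, and the forced `runtime.GC()` call is only there so the demo has at least one completed cycle to report.

```go
package main

import (
	"fmt"
	"runtime"
	"runtime/debug"
	"time"
)

// sink keeps the most recent allocation reachable.
var sink []byte

// churn creates some garbage and then forces one collection.
// Forcing a GC is for demonstration only.
func churn() {
	for i := 0; i < 1000; i++ {
		sink = make([]byte, 64<<10)
	}
	runtime.GC()
}

// longestRecentPause returns the longest pause in the recorded history
// and the total number of completed GC cycles.
func longestRecentPause() (time.Duration, int64) {
	var stats debug.GCStats
	debug.ReadGCStats(&stats)

	var longest time.Duration
	for _, p := range stats.Pause { // most recent first
		if p > longest {
			longest = p
		}
	}
	return longest, stats.NumGC
}

func main() {
	churn()
	longest, cycles := longestRecentPause()
	fmt.Printf("%d GC cycles, longest recent pause: %v\n", cycles, longest)
}
```

If the longest pause is consistently in the millisecond range or above, that is a signal to investigate further, for example with an execution trace.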

What You Learned

You now understand that:

- Go's GC is concurrent: Most work happens alongside your program

- Tricolor marking is the algorithm: White, gray, black categorize objects

- GOGC controls frequency: Higher means less frequent, more memory

- GOMEMLIMIT prevents OOM: Soft limit triggers aggressive GC

- Allocations are the cost: Fewer allocations mean less GC work

- Object pools help: Reuse expensive objects

Your Next Steps

- Profile: Use `go tool pprof` to find allocation hot spots

- Read Next: Learn about escape analysis to understand stack vs heap

- Experiment: Run your app with `GODEBUG=gctrace=1` and analyze output

The garbage collector isn't magic. It's a sophisticated cleaning system that works best when you understand it. Write GC friendly code, tune appropriately, and your Go applications will run smoothly at any scale.