Go Sync Package: Coordinating Goroutines Like a Pro

The Bank Account That Lost Money

Your banking app has 1000 concurrent users. Each performs a simple operation: read balance, add deposit, write new balance. Sounds foolproof. Except at the end of the day, $50,000 is missing. No hackers. No bugs in math. Just concurrent access gone wrong.

This is the classic race condition. Two goroutines read the same balance simultaneously. Both add their amounts. Both write back. One write overwrites the other. Money vanishes into thin air.
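You can see the lost update without relying on lucky timing by replaying that exact interleaving by hand. replayLostUpdate is a hypothetical helper written only for this illustration. It runs the steps one after another instead of in real goroutines, so it shows the effect of the race rather than the race itself.

```go
package main

import "fmt"

// replayLostUpdate (hypothetical) replays the unlucky interleaving step by
// step on a plain int. No goroutines are involved, so the result is
// deterministic: it demonstrates the effect of the race, not its timing.
func replayLostUpdate(balance, depositA, depositB int) int {
	readA := balance // goroutine A reads the balance
	readB := balance // goroutine B reads it too, before A has written

	balance = readA + depositA // A writes its new balance
	balance = readB + depositB // B writes from its stale read, erasing A's deposit
	return balance
}

func main() {
	got := replayLostUpdate(100, 50, 30)
	fmt.Println("expected:", 100+50+30, "got:", got) // expected: 180 got: 130
}
```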

Go's sync package prevents exactly this disaster.

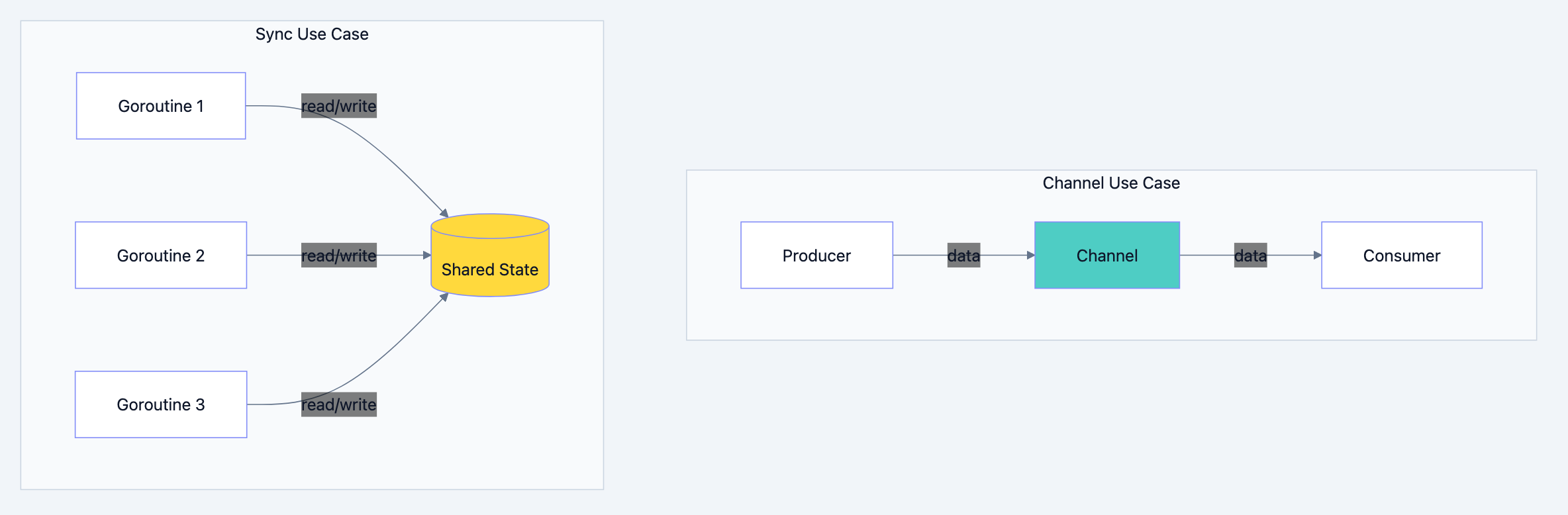

When Channels Aren't Enough

Channels are fantastic for passing data between goroutines. But sometimes you need to protect shared state rather than transfer it. Consider:

- A cache that multiple goroutines read and occasionally update

- A counter tracking active connections

- Configuration that rarely changes but is read constantly


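You can protect shared state with channels by giving one goroutine ownership of the data and having every other goroutine send it requests, but that adds ceremony and an extra goroutine hand-off to every access. For plain shared state, the sync package gives you more direct tools.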
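Here is a rough sketch of that difference, assuming a simple counter. chanCounter, mutexCounter, and runConcurrently are hypothetical names for this comparison. The channel version needs an owner goroutine, two request channels, and a reply channel for every read. The mutex version just locks around the field.

```go
package main

import (
	"fmt"
	"sync"
)

// chanCounter keeps its count inside one owner goroutine; everyone else
// has to send a request over a channel to touch it.
type chanCounter struct {
	incr chan struct{}
	read chan chan int
}

func newChanCounter() *chanCounter {
	c := &chanCounter{incr: make(chan struct{}), read: make(chan chan int)}
	go func() { // owner goroutine; never exits in this sketch
		count := 0 // only this goroutine ever touches count
		for {
			select {
			case <-c.incr:
				count++
			case reply := <-c.read:
				reply <- count
			}
		}
	}()
	return c
}

func (c *chanCounter) Inc() { c.incr <- struct{}{} }

func (c *chanCounter) Value() int {
	reply := make(chan int)
	c.read <- reply
	return <-reply
}

// mutexCounter protects the same state with a lock instead.
type mutexCounter struct {
	mu    sync.Mutex
	count int
}

func (c *mutexCounter) Inc() {
	c.mu.Lock()
	defer c.mu.Unlock()
	c.count++
}

func (c *mutexCounter) Value() int {
	c.mu.Lock()
	defer c.mu.Unlock()
	return c.count
}

// runConcurrently calls inc from n goroutines and waits for all of them.
func runConcurrently(inc func(), n int) {
	var wg sync.WaitGroup
	for i := 0; i < n; i++ {
		wg.Add(1)
		go func() {
			defer wg.Done()
			inc()
		}()
	}
	wg.Wait()
}

func main() {
	cc := newChanCounter()
	mc := &mutexCounter{}
	runConcurrently(cc.Inc, 100)
	runConcurrently(mc.Inc, 100)
	fmt.Println(cc.Value(), mc.Value()) // 100 100
}
```

Both counters are correct. The owner goroutine in the channel version never shuts down here; a production version would also need a way to stop it, which is one more piece of machinery the mutex version does without.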

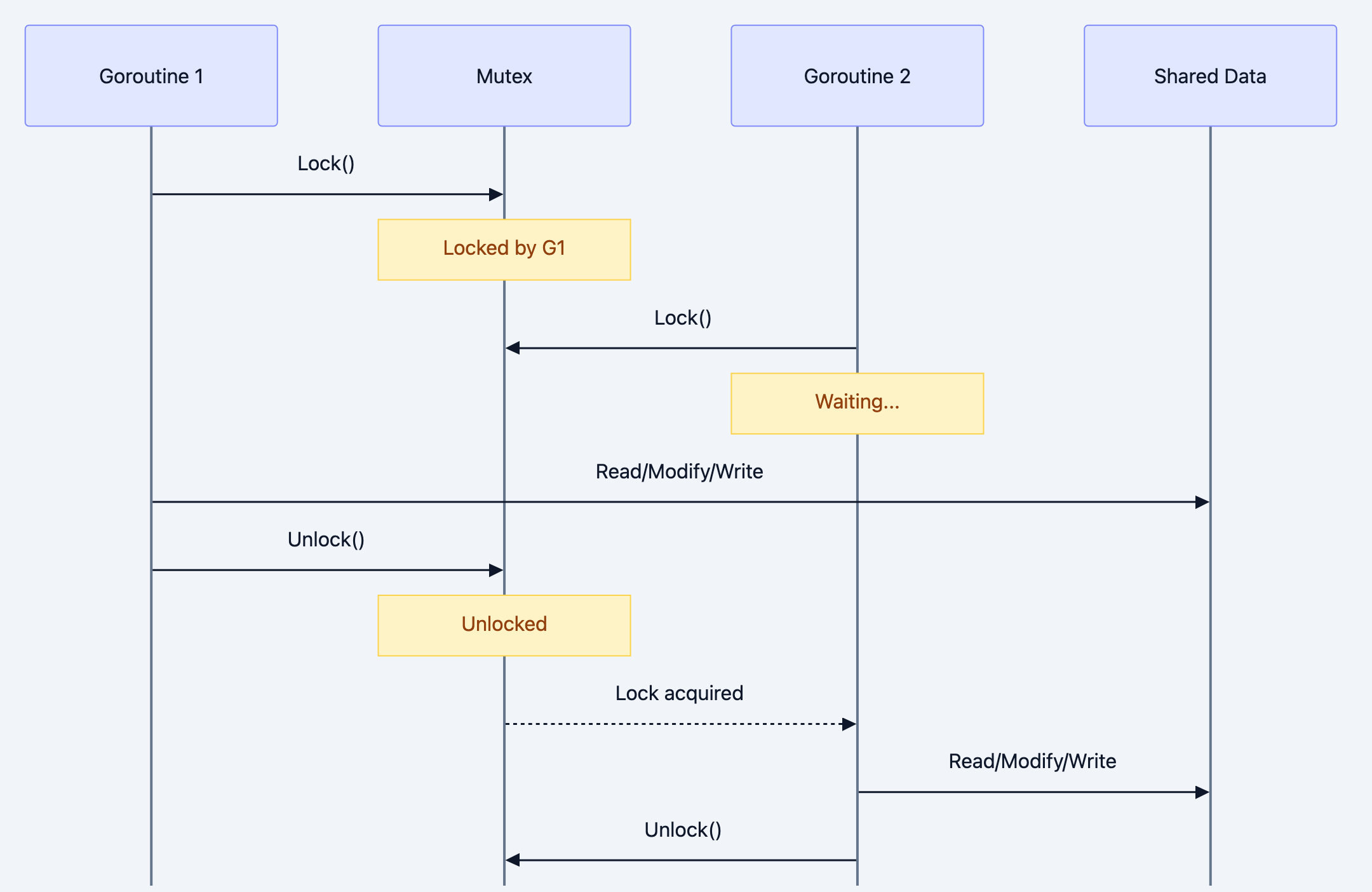

The Traffic Light System

Think of it like this: a Mutex is like a traffic light at a single-lane bridge. Only one car crosses at a time. When a car enters, it "locks" the bridge. Other cars wait. When it exits, it "unlocks" the bridge for the next car. Without this traffic light, cars collide.

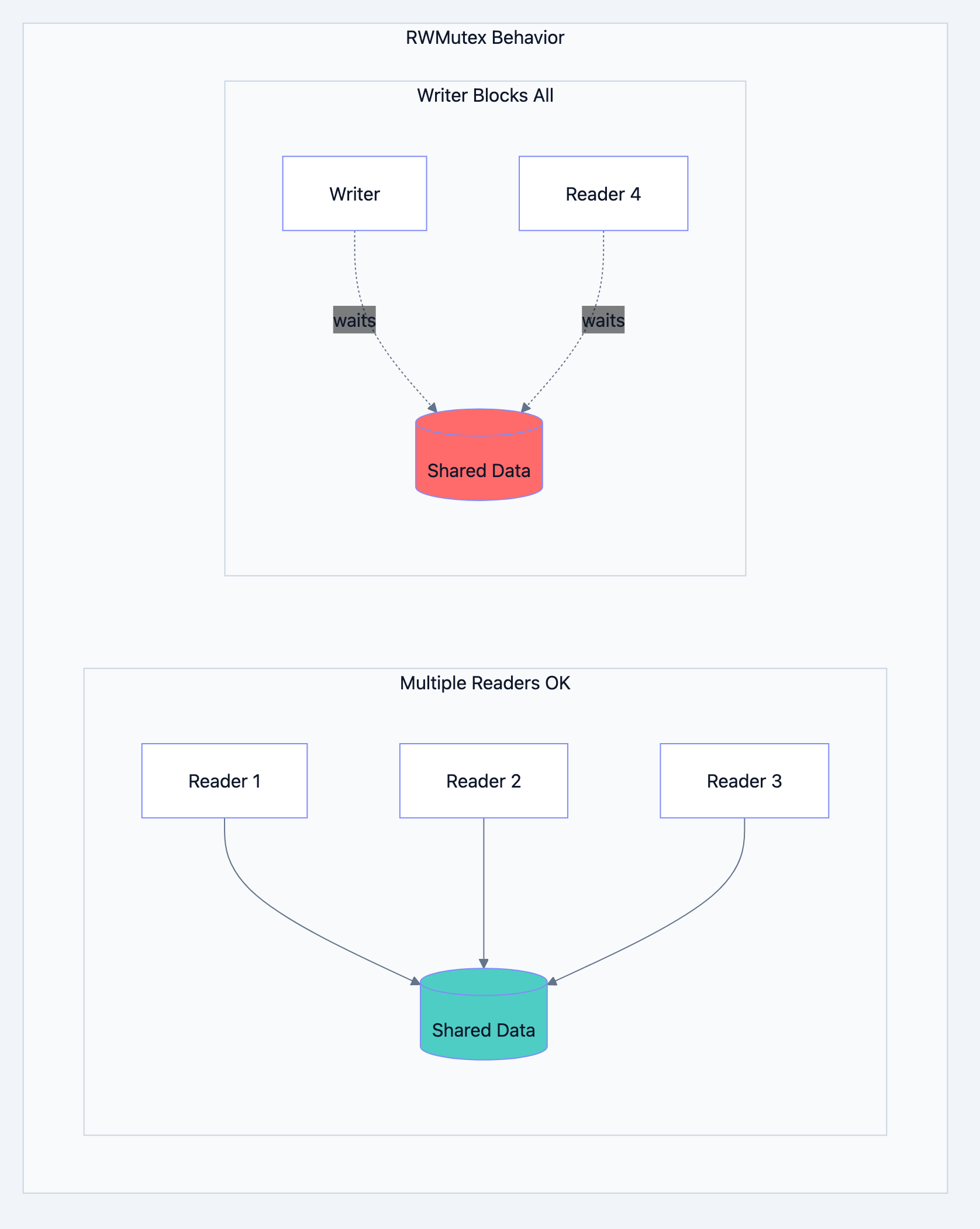

RWMutex relaxes that rule for readers. It's like a library rule: many people can read books simultaneously, but when someone needs to add new books to the shelf, everyone waits until they're done.

Mutex: The Exclusive Lock

A Mutex (mutual exclusion) ensures only one goroutine accesses a section of code at a time.

```go
// Filename: mutex_basics.go
package main

import (
	"fmt"
	"sync"
)

// Counter with mutex protection
// Why: Prevents race conditions when multiple goroutines increment
type SafeCounter struct {
	mu    sync.Mutex
	value int
}

// Increment safely adds to the counter
// Why: Lock ensures atomic read-modify-write
func (c *SafeCounter) Increment() {
	c.mu.Lock()   // Acquire lock
	c.value++     // Safe to modify
	c.mu.Unlock() // Release lock
}

// Value safely reads the counter
// Why: Lock ensures we read a consistent value
func (c *SafeCounter) Value() int {
	c.mu.Lock()
	defer c.mu.Unlock() // Ensures unlock even if panic occurs
	return c.value
}

func main() {
	counter := SafeCounter{}
	var wg sync.WaitGroup

	// 1000 goroutines incrementing concurrently
	for i := 0; i < 1000; i++ {
		wg.Add(1)
		go func() {
			defer wg.Done()
			counter.Increment()
		}()
	}

	wg.Wait()
	fmt.Println("Final count:", counter.Value())
}
```

Expected Output:

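Final count: 1000

Without the Mutex, the count would often come out below 1000 and change from run to run, because concurrent increments overwrite each other. Running the program with go run -race reports the data race.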


RWMutex: Readers Welcome

When reads are frequent and writes are rare, RWMutex shines. Multiple readers can proceed simultaneously, but writers get exclusive access.

```go
// Filename: rwmutex_example.go
package main

import (
	"fmt"
	"sync"
)

// Cache demonstrates read-heavy workload
// Why: Multiple readers shouldn't block each other
type Cache struct {
	mu   sync.RWMutex
	data map[string]string
}

func NewCache() *Cache {
	return &Cache{data: make(map[string]string)}
}

// Get reads from cache (many can read simultaneously)
// Why: RLock allows concurrent reads
func (c *Cache) Get(key string) (string, bool) {
	c.mu.RLock()         // Read lock
	defer c.mu.RUnlock() // Read unlock
	val, ok := c.data[key]
	return val, ok
}

// Set writes to cache (exclusive access)
// Why: Lock blocks all readers and writers
func (c *Cache) Set(key, value string) {
	c.mu.Lock()         // Write lock
	defer c.mu.Unlock() // Write unlock
	c.data[key] = value
}

func main() {
	cache := NewCache()
	cache.Set("name", "Gopher")

	var wg sync.WaitGroup

	// 10 concurrent readers
	for i := 0; i < 10; i++ {
		wg.Add(1)
		go func(id int) {
			defer wg.Done()
			val, _ := cache.Get("name")
			fmt.Printf("Reader %d got: %s\n", id, val)
		}(i)
	}

	wg.Wait()
}
```

Expected Output:

Reader 0 got: Gopher
Reader 3 got: Gopher
Reader 1 got: Gopher
... (all 10 readers complete; the order varies from run to run)


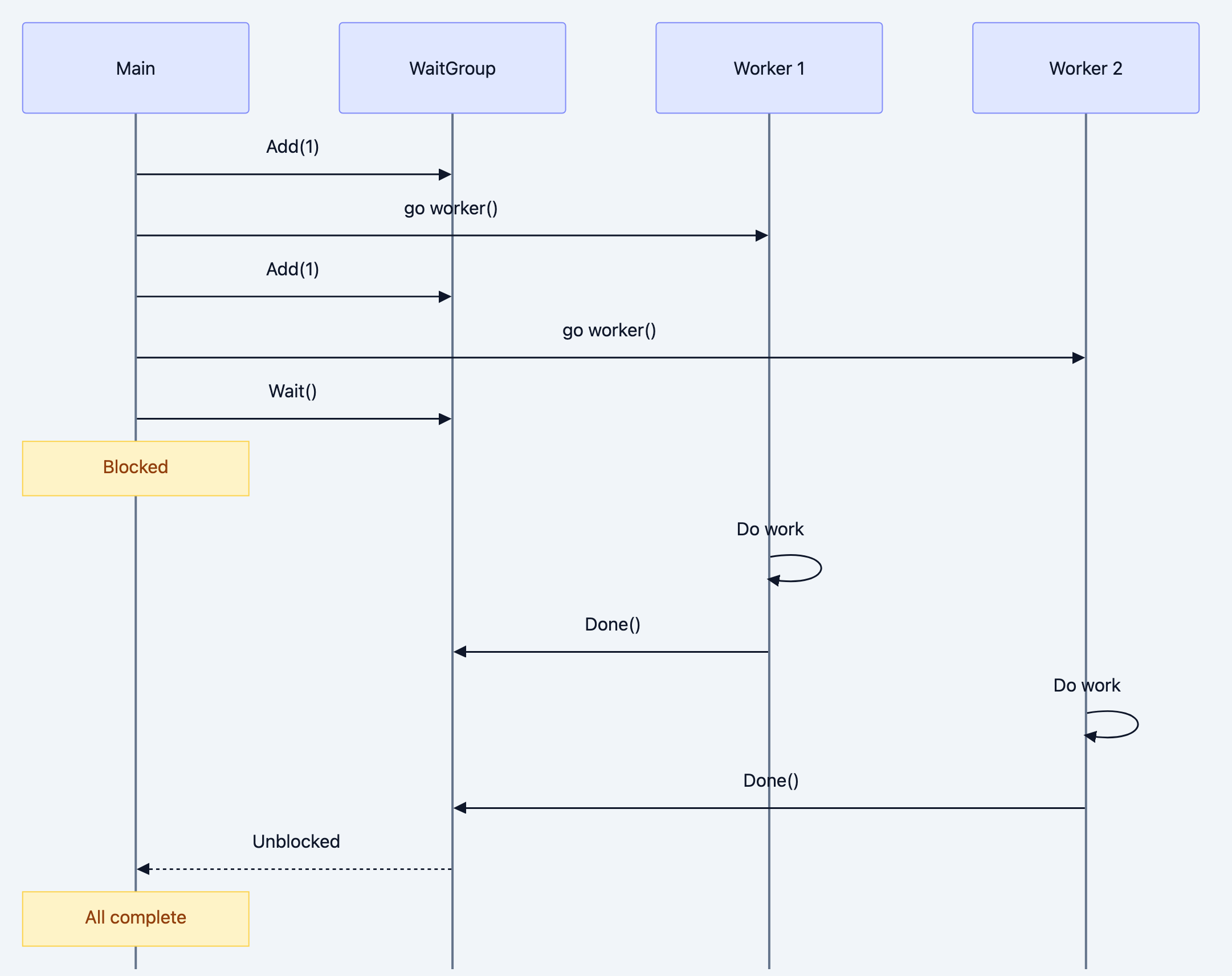

WaitGroup: Waiting for Everyone

WaitGroup solves the "wait for all goroutines to finish" problem elegantly.

```go
// Filename: waitgroup_example.go
package main

import (
	"fmt"
	"sync"
	"time"
)

func worker(id int, wg *sync.WaitGroup) {
	defer wg.Done() // Decrease counter when done

	fmt.Printf("Worker %d starting\n", id)
	time.Sleep(time.Second)
	fmt.Printf("Worker %d done\n", id)
}

func main() {
	var wg sync.WaitGroup

	for i := 1; i <= 5; i++ {
		wg.Add(1) // Increase counter before starting goroutine
		go worker(i, &wg)
	}

	wg.Wait() // Block until counter reaches zero
	fmt.Println("All workers completed!")
}
```

Expected Output:

Worker 5 starting
Worker 1 starting
Worker 3 starting
Worker 2 starting
Worker 4 starting
Worker 1 done
Worker 5 done
Worker 3 done
Worker 4 done
Worker 2 done
All workers completed!

The order of the worker lines varies from run to run; "All workers completed!" is always last.


Critical Rules:

- Call Add() before starting the goroutine
- Pass the WaitGroup by pointer
- Use defer wg.Done() for safety
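The same rules apply when goroutines produce results. In this sketch, squareAll (a hypothetical helper) adds the whole batch to the WaitGroup before launching anything, and each goroutine writes only its own slice index, so the slice needs no mutex. Once wg.Wait() returns, every goroutine's write is visible to the caller.

```go
package main

import (
	"fmt"
	"sync"
)

// squareAll (hypothetical) squares each number in its own goroutine.
func squareAll(nums []int) []int {
	results := make([]int, len(nums))
	var wg sync.WaitGroup
	wg.Add(len(nums)) // count the whole batch before any goroutine starts
	for i, n := range nums {
		go func(i, n int) {
			defer wg.Done()
			results[i] = n * n // each goroutine owns exactly one index
		}(i, n)
	}
	wg.Wait() // all Done calls happen before Wait returns
	return results
}

func main() {
	fmt.Println(squareAll([]int{1, 2, 3, 4, 5})) // [1 4 9 16 25]
}
```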

Once: Do It Exactly Once

sync.Once guarantees a function runs exactly once, no matter how many goroutines call it. Perfect for initialization.

```go
// Filename: once_example.go
package main

import (
	"fmt"
	"sync"
)

var (
	config map[string]string
	once   sync.Once
)

// loadConfig runs exactly once
// Why: Expensive initialization should happen only once
func loadConfig() {
	fmt.Println("Loading configuration...")
	config = map[string]string{
		"host": "localhost",
		"port": "8080",
	}
}

// GetConfig returns configuration, initializing if needed
// Why: Thread-safe lazy initialization
func GetConfig() map[string]string {
	once.Do(loadConfig) // Only first call executes loadConfig
	return config
}

func main() {
	var wg sync.WaitGroup

	// 10 goroutines all trying to get config
	for i := 0; i < 10; i++ {
		wg.Add(1)
		go func(id int) {
			defer wg.Done()
			cfg := GetConfig()
			fmt.Printf("Goroutine %d got host: %s\n", id, cfg["host"])
		}(i)
	}

	wg.Wait()
}
```

Expected Output:

Loading configuration...
Goroutine 0 got host: localhost
Goroutine 2 got host: localhost
... (all goroutines get config, but "Loading" prints once)
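Since Go 1.21, the sync package also provides sync.OnceValue (along with OnceFunc and OnceValues), which folds the Once variable, the package-level result, and the getter into a single function value. Here is a sketch of the same configuration loader in that style, assuming Go 1.21 or later. The loads counter is a hypothetical addition that only exists to show the loader body runs once.

```go
package main

import (
	"fmt"
	"sync"
)

var loads int // counts how many times the loader body actually runs

// getConfig runs the loader on its first call and returns the cached
// map on every later call, from any goroutine.
var getConfig = sync.OnceValue(func() map[string]string {
	loads++
	return map[string]string{"host": "localhost", "port": "8080"}
})

func main() {
	var wg sync.WaitGroup
	for i := 0; i < 10; i++ {
		wg.Add(1)
		go func() {
			defer wg.Done()
			_ = getConfig()["host"]
		}()
	}
	wg.Wait()
	fmt.Println("loads:", loads, "host:", getConfig()["host"]) // loads: 1 host: localhost
}
```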

Pool: Reuse Expensive Objects

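sync.Pool maintains a pool of reusable objects. When you need a buffer, get one from the pool; when you're done, put it back. Reusing objects this way reduces allocations and garbage collection pressure. A Pool is a cache, not a store: the runtime may discard pooled objects during any garbage collection, so Get can always hand you a freshly created one.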

```go
// Filename: pool_example.go
package main

import (
	"bytes"
	"fmt"
	"sync"
)

// bufferPool recycles byte buffers
// Why: Creating large buffers repeatedly is expensive
var bufferPool = sync.Pool{
	New: func() interface{} {
		fmt.Println("Creating new buffer")
		return new(bytes.Buffer)
	},
}

func processData(data string) string {
	// Get buffer from pool
	// Why: Reuses existing buffer if available
	buf := bufferPool.Get().(*bytes.Buffer)
	buf.Reset() // Clear for reuse

	// Use buffer
	buf.WriteString("Processed: ")
	buf.WriteString(data)
	result := buf.String()

	// Return buffer to pool
	// Why: Available for next Get() call
	bufferPool.Put(buf)

	return result
}

func main() {
	results := make([]string, 5)
	for i := 0; i < 5; i++ {
		results[i] = processData(fmt.Sprintf("data-%d", i))
	}

	for _, r := range results {
		fmt.Println(r)
	}
}
```

Expected Output:

Creating new buffer
Processed: data-0
Processed: data-1
Processed: data-2
Processed: data-3
Processed: data-4

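In this sequential program you will usually see only one buffer created and then reused. That is typical, not guaranteed: if a garbage collection clears the pool between calls, New runs again.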

Real-World Example: Rate-Limited API Client

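```go
// Filename: rate_limiter.go
package main

import (
	"fmt"
	"sync"
	"time"
)

// RateLimiter controls request rate
// Why: Allows at most maxTokens requests per interval; tokens are
// refilled to the maximum on every tick (a simple fixed-window refill)
type RateLimiter struct {
	mu        sync.Mutex
	tokens    int
	maxTokens int
	ticker    *time.Ticker
	done      chan struct{} // closed by Stop to end the refill goroutine
}

// NewRateLimiter creates a rate limiter
// Why: Controls how many requests per interval
func NewRateLimiter(rate int, interval time.Duration) *RateLimiter {
	rl := &RateLimiter{
		tokens:    rate,
		maxTokens: rate,
		ticker:    time.NewTicker(interval),
		done:      make(chan struct{}),
	}

	// Replenish tokens periodically until Stop is called
	go func() {
		for {
			select {
			case <-rl.ticker.C:
				rl.mu.Lock()
				rl.tokens = rl.maxTokens
				rl.mu.Unlock()
			case <-rl.done:
				return
			}
		}
	}()

	return rl
}

// Allow checks if request is permitted
// Why: Check and decrement happen under one lock, so two goroutines
// cannot both spend the last token
func (rl *RateLimiter) Allow() bool {
	rl.mu.Lock()
	defer rl.mu.Unlock()

	if rl.tokens > 0 {
		rl.tokens--
		return true
	}
	return false
}

// Stop releases the ticker and ends the refill goroutine
// Why: ticker.Stop does not close ticker.C, so without done the goroutine would leak
func (rl *RateLimiter) Stop() {
	rl.ticker.Stop()
	close(rl.done)
}

func main() {
	limiter := NewRateLimiter(3, time.Second)
	defer limiter.Stop()

	var wg sync.WaitGroup

	// Simulate 10 requests
	for i := 1; i <= 10; i++ {
		wg.Add(1)
		go func(id int) {
			defer wg.Done()
			if limiter.Allow() {
				fmt.Printf("Request %d: Allowed\n", id)
			} else {
				fmt.Printf("Request %d: Rate limited\n", id)
			}
		}(i)
	}

	wg.Wait()
}
```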

Expected Output:

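Request 1: Allowed
Request 2: Allowed
Request 3: Allowed
Request 4: Rate limited
Request 5: Rate limited
... (remaining are rate limited)

Goroutines are scheduled in no particular order, so which three requests get through, and the order of the lines, varies from run to run. Because all ten requests arrive well within the first one-second window, only three of them are allowed.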
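The heart of Allow is that checking and decrementing the token count happen under one lock. This stripped-down sketch removes the ticker so the behavior is deterministic. tokenCounter is a hypothetical cut-down of RateLimiter with a manual Refill, and countAllowed is a hypothetical helper that fires many concurrent Allow calls. Exactly as many requests succeed as there are tokens, no matter how the goroutines race.

```go
package main

import (
	"fmt"
	"sync"
)

// tokenCounter (hypothetical) keeps RateLimiter's locking but drops the ticker.
type tokenCounter struct {
	mu     sync.Mutex
	tokens int
	max    int
}

// Allow checks and spends a token in one critical section.
func (t *tokenCounter) Allow() bool {
	t.mu.Lock()
	defer t.mu.Unlock()
	if t.tokens > 0 {
		t.tokens--
		return true
	}
	return false
}

// Refill stands in for the ticker firing.
func (t *tokenCounter) Refill() {
	t.mu.Lock()
	defer t.mu.Unlock()
	t.tokens = t.max
}

// countAllowed fires n concurrent Allow calls and counts the successes.
func countAllowed(t *tokenCounter, n int) int {
	var mu sync.Mutex
	allowed := 0
	var wg sync.WaitGroup
	for i := 0; i < n; i++ {
		wg.Add(1)
		go func() {
			defer wg.Done()
			if t.Allow() {
				mu.Lock()
				allowed++
				mu.Unlock()
			}
		}()
	}
	wg.Wait()
	return allowed
}

func main() {
	tc := &tokenCounter{tokens: 3, max: 3}
	fmt.Println("first window:", countAllowed(tc, 100)) // first window: 3
	tc.Refill()
	fmt.Println("second window:", countAllowed(tc, 100)) // second window: 3
}
```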

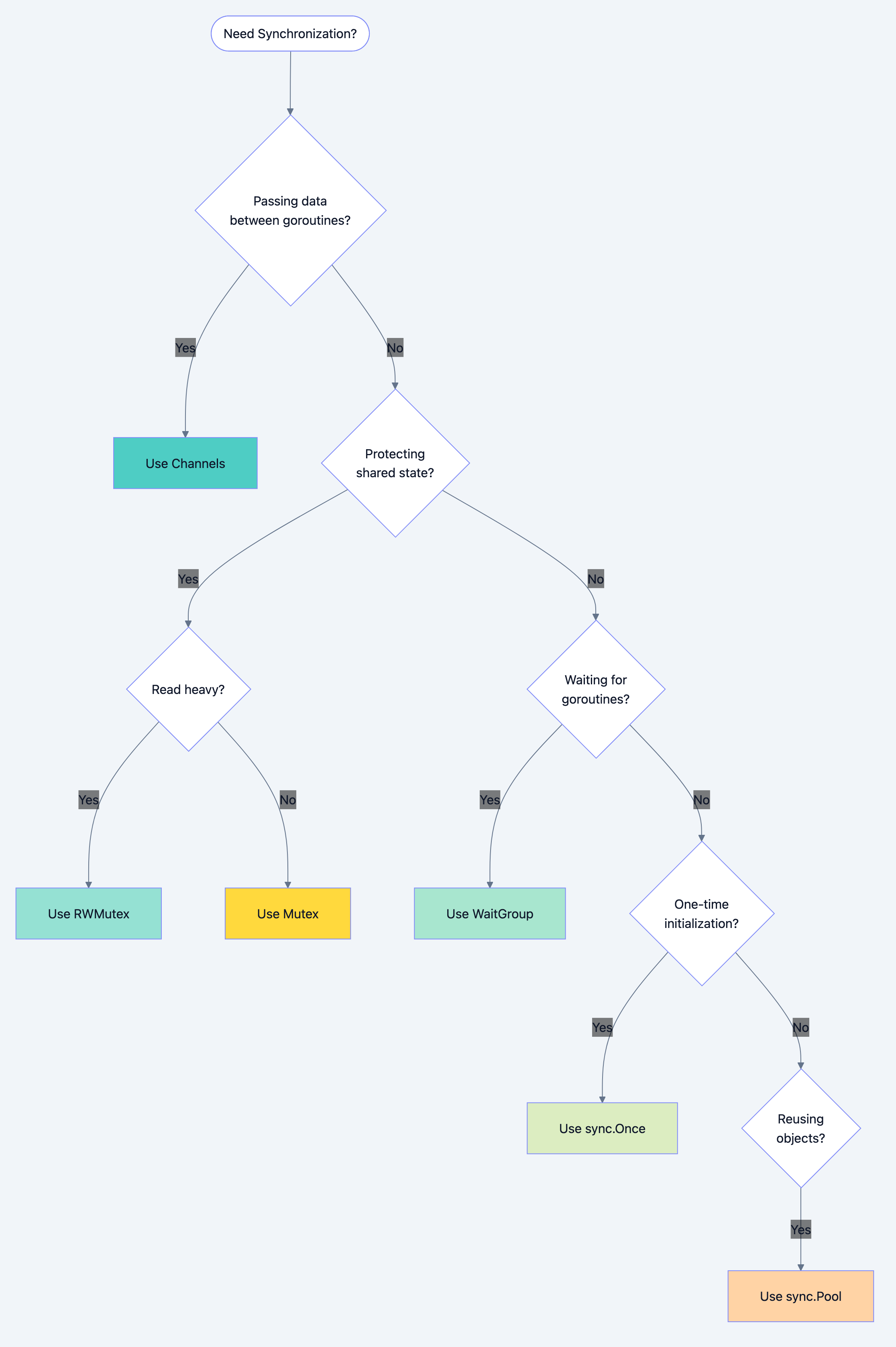

Choosing the Right Tool


| Primitive | Use Case | Overhead |
|---|---|---|
| Mutex | Exclusive access to shared data | Low |
| RWMutex | Read-heavy, write-light workloads | Medium |
| WaitGroup | Wait for goroutine completion | Very Low |
| Once | Single initialization | Very Low |
| Pool | Object reuse | Medium |
| Channels | Data transfer between goroutines | Low-Medium |

Deadly Mistakes to Avoid

Mistake 1: Copying Mutex

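```go
package main

import (
	"fmt"
	"sync"
)

type Counter struct {
	sync.Mutex
	value int
}

// WRONG: Mutex copied, protection lost
// c is a copy, so it locks its own mutex and increments its own value
func bad(c Counter) { // Copies mutex! go vet reports this
	c.Lock()
	c.value++ // Lost when bad returns
	c.Unlock()
}

// RIGHT: Pass by pointer so every caller shares one mutex and one value
func good(c *Counter) {
	c.Lock()
	c.value++
	c.Unlock()
}

func main() {
	var c Counter
	bad(c)               // Increments a copy
	good(&c)             // Increments c itself
	fmt.Println(c.value) // Prints 1, not 2
}
```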

Mistake 2: Forgetting to Unlock

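```go
var mu sync.Mutex // Shared lock used by both functions

// WRONG: Lock never released on the early-return path
func dangerous(someCondition bool) {
	mu.Lock()
	if someCondition {
		return // Oops! Lock still held; the next mu.Lock() blocks forever
	}
	mu.Unlock()
}

// RIGHT: defer ensures unlock on every return path
func safe(someCondition bool) {
	mu.Lock()
	defer mu.Unlock()
	if someCondition {
		return // defer runs, lock released
	}
}
```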

Mistake 3: Recursive Lock

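```go
var mu sync.Mutex

// WRONG: Deadlock - same goroutine locks twice
// sync.Mutex is not reentrant: helper waits for a lock its own caller holds
func problematic() {
	mu.Lock()
	helper() // Also tries to lock
	mu.Unlock()
}

func helper() {
	mu.Lock() // Deadlock!
	defer mu.Unlock()
}
```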
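A common way out is to split the work into a public method that takes the lock and an unexported helper that assumes the caller already holds it. The Inventory type below is a hypothetical example of that convention; the "Locked" suffix is only a naming habit, not something the compiler enforces. Restock reuses addLocked inside its own critical section instead of calling Add, which would try to lock mu a second time.

```go
package main

import (
	"fmt"
	"sync"
)

// Inventory (hypothetical) shows the "locked wrapper, unlocked helper" split.
type Inventory struct {
	mu    sync.Mutex
	items map[string]int
}

// addLocked requires inv.mu to be held by the caller; it never locks.
func (inv *Inventory) addLocked(name string, qty int) {
	inv.items[name] += qty
}

// Add is the public entry point: it takes the lock exactly once.
func (inv *Inventory) Add(name string, qty int) {
	inv.mu.Lock()
	defer inv.mu.Unlock()
	inv.addLocked(name, qty)
}

// Restock updates several items in one critical section via the helper.
// Calling inv.Add here instead would deadlock on the second Lock.
func (inv *Inventory) Restock(names []string, qty int) {
	inv.mu.Lock()
	defer inv.mu.Unlock()
	for _, name := range names {
		inv.addLocked(name, qty)
	}
}

func (inv *Inventory) Count(name string) int {
	inv.mu.Lock()
	defer inv.mu.Unlock()
	return inv.items[name]
}

func main() {
	inv := &Inventory{items: make(map[string]int)}
	inv.Add("apple", 2)
	inv.Restock([]string{"apple", "pear"}, 5)
	fmt.Println(inv.Count("apple"), inv.Count("pear")) // 7 5
}
```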

What You Learned

You now understand that:

- Mutex provides exclusive access: One goroutine at a time

- RWMutex optimizes for readers: Multiple readers, exclusive writers

- WaitGroup coordinates completion: Wait for all goroutines

- Once guarantees single execution: Thread-safe initialization

- Pool recycles objects: Reduces allocation pressure

Your Next Steps

- Build: Create a thread-safe LRU cache using RWMutex

- Read Next: Learn about the context package for cancellation

- Experiment: Benchmark Mutex vs RWMutex for your read/write ratio

The sync package turns dangerous concurrent code into safe, predictable behavior. Choose the right primitive for your use case, and your goroutines will work together in harmony.